One of the more challenging tasks in political research is to produce reliable information on how political parties compare with one another on key issues like their approach to the economy or immigration. As Didier Ruedin writes, some researchers have sought to perform this task using automated methods that classify a party’s approach to an issue by scanning the content of their manifesto. However, he illustrates that while such methods can be efficient in terms of the time taken to carry out the research, they can often produce misleading conclusions.

Immigration is increasingly salient in European politics, but where do different parties stand on the issue? Knowing the positions of parties is important in many questions in political science, including problems of political representation and party competition. Researchers have come up with different approaches, and there is no agreement over which one we should use.

One method is to automatically code the positions of parties on an issue using their party manifestos. Automatic coding of party manifestos may be tempting, not least because the computer does most of the work. But there is a danger that this approach, though seemingly more efficient, can produce misleading conclusions. In a recent study, co-authored with Laura Morales, I conducted a systematic assessment of various methods for positioning political parties on immigration. The analysis shows that automatic approaches may not be valid, whereas different methods of coding manifestos manually tend to agree with one another and correlate highly with positions from expert surveys.

In expert surveys, political scientists working on party positions are asked to categorise and position political parties on a range of issues, such as where their views sit on a left/right scale, or their stance on social issues or immigration. Such surveys are authoritative, but researchers might be interested in parties or time periods that are not covered by expert surveys. In these cases, the consensus in the academic literature is that party manifestos are an excellent source to turn to, but there is no agreement on how these documents should be analysed to obtain party positions.

Efforts to place party positions on left/right and authoritarian/libertarian dimensions have tended to attract the greatest attention in the literature, but often specific issue domains are of interest, like party positions on immigration. We only need to think of the importance of immigration in the recent Brexit referendum in the UK, or how immigration played a role in Donald Trump’s election campaign in the United States. Positions on immigration can affect key political decisions. And only by measuring these positions can we find out if and when they really cut across the common left/right dimension.

In our study, we assessed the validity of different methods by systematically applying them to the manifestos of the main parties in elections in Austria, Belgium, France, Ireland, the Netherlands, Spain, and Switzerland between 1993 and 2013. This yielded 283 party manifestos, each of which was coded in several ways: manually with a conventional sentence-by-sentence codebook; manually with a ‘checklist’ approach that codes the entire manifesto at once; and with commonly used automated approaches, namely Wordscores, Wordfish, and a dictionary of keywords with scores attached.
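To give a sense of what a dictionary of keywords with scores attached involves, here is a minimal sketch in Python. It is not the dictionary or the scoring rule used in the study: the keywords, their scores, and the function name `dictionary_position` are all invented for illustration, and the position is simply assumed to be the mean score of the keywords found in a text.

```python
import re

# Hypothetical keyword scores, invented for illustration only.
# Negative values stand for restrictive language, positive values for open language.
KEYWORD_SCORES = {
    "deport": -1.0,
    "illegal": -1.0,
    "restrict": -0.5,
    "asylum": 0.0,
    "integration": 0.5,
    "welcome": 1.0,
}


def dictionary_position(text, scores=KEYWORD_SCORES):
    """Return the mean score of all keyword hits, or None if no keyword occurs.

    Assumption: tokens are matched exactly after lowercasing, so "deported"
    does not count as a hit for "deport". Real dictionaries often rely on
    stemming or wildcards instead.
    """
    tokens = re.findall(r"[a-z]+", text.lower())
    hits = [scores[token] for token in tokens if token in scores]
    if not hits:
        return None  # the text says nothing the dictionary recognises
    return sum(hits) / len(hits)


if __name__ == "__main__":
    sample = "We welcome integration but restrict illegal entry."
    print(dictionary_position(sample))
```

The sketch also hints at why such approaches can mislead: the score depends entirely on a fixed link between words and positions, so a party that quotes a keyword in order to reject it is scored the same as one that endorses it.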

Additionally, pooled estimates from expert surveys were used as an approach that does not rely on party manifestos. Positions from the Comparative Manifestos Project (CMP) were also considered. While the data from the CMP is often used to position parties on the immigration issue, a closer look at the codebook reveals that the codes that predate the 2014 codebook do not really measure immigration, but rather bundle the topic in with other issues. Researchers using this data thus rely on the untested assumption that the measure is ‘good enough’.

To make things more challenging, immigration has not always been salient, and some parties (a non-random subset) have much more to say about immigration than others. This means that using manifestos to position parties on immigration may be more difficult in principle. This challenge, however, is also a major advantage, because approaches that work for a domain that is difficult to measure are likely to work for easier domains as well.

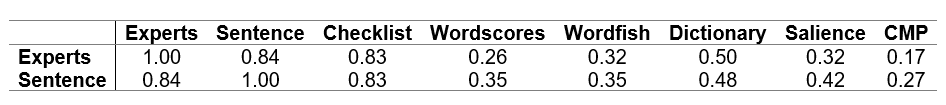

Overall, there are high levels of consistency between expert positioning, manual sentence-by-sentence coding, and manual checklist coding. Positions from these methods tend to agree in broad terms. The table below shows the corresponding Spearman rank correlations. On the other hand, there are poor or inconsistent results with the CMP measure, Wordscores, Wordfish, and the dictionary approach. For some countries and elections, automated approaches come close to the expert and manual estimates, but they have unexpected outliers. As a fun exercise to better understand how ‘bad’ some correlations are, we can also consider how much of their manifesto parties dedicate to immigration: this means considering salience without looking at content, on the assumption that the more parties write about immigration, the more negative they tend to be.

Table: Spearman ρ correlations between estimates from different methods for measuring a party’s approach to immigration

Note: Each label represents a different method for measuring a party’s approach to immigration. The numbers for each cell in the table show the extent to which each method correlates with expert positioning of parties and sentence-by-sentence coding of party positions, where 1.00 indicates perfect correlation, and 0 indicates no correlation at all. See the author’s longer study for more details.
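For readers unfamiliar with the measure, a Spearman rank correlation can be computed by ranking each set of positions and then correlating the ranks. The sketch below uses only the Python standard library; the function names and the party positions in the example are hypothetical and are not taken from the study.

```python
def average_ranks(values):
    """Rank values from 1 to n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to cover the whole run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman_rho(x, y):
    """Spearman's rho: the Pearson correlation of the two rank vectors."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length (at least 2)")
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mean_x, mean_y = sum(rx) / n, sum(ry) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(rx, ry))
    var_x = sum((a - mean_x) ** 2 for a in rx)
    var_y = sum((b - mean_y) ** 2 for b in ry)
    if var_x == 0 or var_y == 0:
        raise ValueError("rho is undefined when one sequence is constant")
    return cov / (var_x * var_y) ** 0.5


if __name__ == "__main__":
    # Hypothetical positions of five parties from two methods (made-up numbers).
    expert = [1.5, 3.0, 5.5, 7.0, 9.0]
    automated = [2.0, 1.0, 6.0, 9.5, 4.0]
    print(round(spearman_rho(expert, automated), 2))
```

Because only the ordering of parties matters, two methods can correlate perfectly even if they place parties on different numerical scales.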

The table makes it clear that (assuming that the Chapel Hill experts know their subject, of course) there is really no excuse for using the old CMP data. Its correlation with the expert positions is lower than the one obtained by just looking at how much parties write about immigration. That said, with the new subcodes in the most recent codebook, things will probably improve for the CMP/MARPOR positions – for those only interested in recent elections (where expert data are also available). By contrast, an often-neglected method – manual coding using checklists, where the manifestos are coded as a whole – offers resource efficiency with no loss in validity or reliability.

What do we take away from this? In terms of validity, it appears manual approaches remain unmatched by common automatic approaches. Given that they rely on a stable relationship between words and positions, automatic approaches may struggle with rapid changes in the debate and the constant framing and reframing of emerging issues like immigration. What the best method is, however, this comparison of different methods cannot say: sometimes a single mistake would be disastrous for a project, sometimes we can live with getting it right ‘on average’, hoping that the incorrect positions are relatively unsystematic (a big assumption of course).

Checklists might be a decent compromise: coding manifestos as a whole is much quicker than coding each sentence (trained coders typically needed between 10% and 20% of the time required for sentence-by-sentence coding), yet seems to provide reliable positions. Crowdsourcing could perhaps make the checklist approach even more cost-effective – leaving ethical questions aside – but the task may be too complex and the texts too long to work in such an environment.

Note: This article gives the views of the author, not the position of EUROPP – European Politics and Policy or the London School of Economics. For more information, see the author’s accompanying journal article in Party Politics. Featured image credit: Jason Davies.

_________________________________

Didier Ruedin – University of Neuchâtel / University of the Witwatersrand

Didier Ruedin is a senior researcher and lecturer at the University of Neuchâtel and at the University of the Witwatersrand.