Government efforts at assessing university research via the REF involve universities and hundreds of senior academics in perpetuating a mythical, bureaucratic form of ‘peer review’. Inherently these exercises only produce ‘evidence’ that has been fatally structured from the outset by bureaucratic rules and university games-playing. Patrick Dunleavy argues that in the digital era, this mountain of special form-filling and bogus ‘reviewing by committee’ has become completely unnecessary.

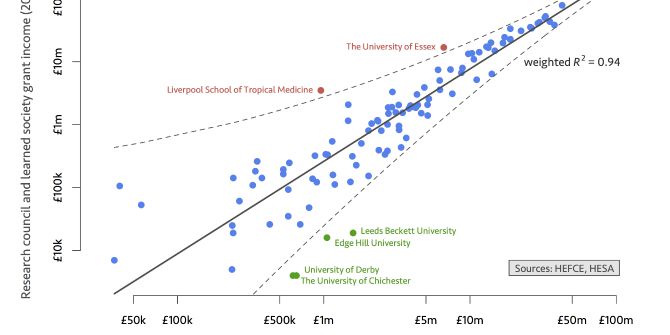

Instead, every UK academic should simply file a report with their university of all their own publications during the REF assessment period and the citations they have attracted, using the completely free and easy-to-use Google Scholar-Harzing system. At a fraction of the cost, time and effort that REF entails, we would generate comprehensive and high quality information about every university’s research performance, and a unique picture of UK academia’s influence across the world.

During the last Research Assessment Exercise (RAE) in 2008, the Higher Education Funding Council for England (HEFCE) solemnly assembled 50,000 ‘research outputs’ (i.e. books and research articles) in a vast warehouse in Bristol, where the weight of paper was so great that it could only be moved about by an army of forklift trucks. The purpose of this massive, bean-counting exercise was so that every one of these ‘outputs’ could be individually ‘assessed’ in a ‘peer review’ process involving hundreds of academics across more than two dozen panels.

What this meant in practice was that at least one person from each committee at least ‘eyeballed’ each book or journal article, and then subjectively arrived at some judgement of its worth, which was perhaps subsequently ‘moderated’ in some fashion by the rest of the panel. (Quite how either of these stages worked remained unclear, of course.) What useful information was generated by the whole RAE exercise, and by the somewhat similar Excellence in Research for Australia (ERA)? The answer is hardly anything of much worth – the whole process is obscure, ‘closed book’ and undocumented, and it cannot be challenged. The judgements produced are also so deformed from the outset by the rules of the bureaucratic game that produced them that they have no external value or legitimacy.

To take the simplest instance, the RAE asked for four ‘research outputs’ to be submitted – even though across vast swathes of the university sector this might not be an appropriate way of assessing anything. The limit inherently discriminated against the thousands of academics with far more work to submit than this, those with one or two stellar pieces of work that really matter, and those doing applied research, where influence is often achieved by sequences of many shorter research-informed pieces. Similarly, the disciplinary structure of panels automatically privileged the older (‘dead heart’) areas of every discipline, at the expense of the innovative fields of research that lie across disciplinary frontiers. And the definition of a ‘research output’ not only automatically condemned almost all applied work to low scores, but also relied on deformed ‘evidence’, such as using the average ‘impact’ of a journal as a proxy value attached to every article published in it (whether good or bad). For books, the ‘reputation’ of a publisher was apparently substituted even more crudely for all its outputs.

Does this matter to HEFCE, or the major university elites who have consistently supported its procedures? It would seem not. The primary function of these assessments is just to give HEFCE a fig leaf for allocating research monies between universities without too much protest from the losers. A second key purpose is to ‘discipline’ universities to conform exactly to HEFCE’s and ministers’ limited view of ‘research’. Third, it gives Department of Education civil servants a pointless, paper mountain audit trail with which to justify to ministers and the Treasury where all the research support money has gone.

Within universities themselves, who uses RAE information for any serious academic (as opposed to bureaucratic) purpose? The results just give the winning universities and departments a couple of extra bragging rights to include amongst all the other (similarly tendentious) information on their university’s corporate website. In other words, hundreds of millions of pounds of public money are spent on constructing a giant fairytale of ‘peer review’, one that involves thousands of hours of committee work by busy academics and university administrators, who should all be far more gainfully employed.

All the indications are that the academic impact parts of the next Research Excellence Framework (REF) will be exactly the same as the RAE – a hugely expensive bureaucratic gaming exercise, where some panel members have already been told they will have to eyeball 700 pieces of work each. Yet it doesn’t have to be like this at all. It is not too late for government and the university elites to wake up and realize that in the current digital era, conducting special and distorting regulatory exercises like the REF or ERA is completely unnecessary. In Google Scholar we now have all the tools to do accurate research assessment by charting how many other academics worldwide are citing every article or book individually. And this information is just as good for the arts, humanities and social sciences as it is for the physical sciences. Because it covers books and working papers, Scholar is also automatically up to date and far more inclusive than the pre-internet bibliometric databases.

One of the key REF tasks for some panels still involves ranking journals in terms of journal impact scores. Yet this simply ignores all the instantly available information about the research impact of every individual article and book in order to substitute instead the average citations of a whole set of papers. By definition this average value is wrong for almost all of the individual papers involved. In other words the REF is choosing to substitute misinformation for real, fine-grained digital information.
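To make the arithmetic behind this point concrete, here is a minimal sketch in Python. The citation counts are invented purely for illustration (they are not real data), and the skewed shape of the distribution is an assumption; the sketch only shows how one heavily cited article can pull a journal average far away from what most of its articles receive.

```python
from statistics import mean, median

# Hypothetical citation counts for ten articles in one journal
# (invented for illustration, not real data).
citations = [0, 0, 1, 1, 2, 2, 3, 4, 7, 80]

# The proxy value a journal ranking would attach to every article.
journal_average = mean(citations)

# What a typical article in the journal actually receives.
typical_article = median(citations)

# Number of articles whose own count falls below the journal average.
below_average = sum(1 for c in citations if c < journal_average)

print(f"journal average: {journal_average}")
print(f"median article: {typical_article}")
print(f"articles below the average: {below_average} of {len(citations)}")
```

With these made-up figures the journal average is 10, yet nine of the ten articles have fewer citations than that, and the one remaining article has far more.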

Digital-era research assessment

How could an alternative, more rational and modern process of research assessment work? We could conduct a full academic census of the UK’s research outputs and their citations. This would generate completely genuine, useful and novel information that would be of immediate value to every academic, every university and every minister sitting around the Cabinet table. It would operate like this:

- Every academic and researcher would submit to their university details of all their publications in the assessment period, and of the numbers of citations recorded for them in the Google Scholar system. The ‘Publish or Perish’ software (invented by Professor Anne-Wil Harzing of Melbourne University) provides an exceptionally easy to use software front-end that allows academics to do this. Using the Harzing version of Scholar, an academic with a reasonably distinctive name should be able to compile this report in less than half an hour. The software also supplies a whole suite of statistics that fully allows for academics’ career length and other factors, so as to give a fair picture.

- Every university would have the responsibility of assembling and checking the Harzing-Google record for their staff. They would also investigate any cases where queries arise over the data – e.g. cross-checking with the less inclusive Web of Knowledge or Scopus systems in any difficult cases. HEFCE could provide either common rules, or better still common software, to ensure an open-book process, where everyone knows the rules.

- Every university would then submit to HEFCE online their full set of staff publication records and citations, with each submission being available on the web – for open-source checking by all other universities and academics, to prevent any suspicion of games-playing or cheating.

- HEFCE would employ a small team of expert analysts who would collate and standardize all the data, so that meaningful averages are available for every discipline and interdisciplinary area, and appropriate controls apply for different citation rates across disciplines, age-groups etc. The analysts would also produce university-specific and department-specific draft reports that are published in ‘open book’ formats.

- Subject panels would meet no more than two or three times, to consider the checked citations scores and numbers of publications information, to hear any special case pleas or issues with the draft reports, and to do just the essential bureaucratic task of impartially allocating departments into research categories on the basis of the information presented – and only that information.
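The collation and standardization step in the list above could be sketched roughly as follows. This is only an illustration under stated assumptions: the `Publication` record, the function names and the sample data are all hypothetical, and dividing each publication's citations by its discipline's mean is just one simple way of controlling for different citation rates across disciplines, not a method specified by HEFCE or by the proposal itself.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Publication:
    # Hypothetical record layout for one item in a university's submission.
    university: str
    discipline: str
    author: str
    title: str
    citations: int


def discipline_means(pubs):
    """Mean citations per publication within each discipline."""
    totals = defaultdict(lambda: [0, 0])  # discipline -> [citation sum, count]
    for p in pubs:
        totals[p.discipline][0] += p.citations
        totals[p.discipline][1] += 1
    return {d: s / n for d, (s, n) in totals.items()}


def department_scores(pubs):
    """Average discipline-normalised score per (university, discipline)."""
    means = discipline_means(pubs)
    scores = defaultdict(list)
    for p in pubs:
        baseline = means[p.discipline]
        # A discipline with no citations at all gets 0.0 rather than
        # raising a division error.
        relative = p.citations / baseline if baseline else 0.0
        scores[(p.university, p.discipline)].append(relative)
    return {dept: sum(v) / len(v) for dept, v in scores.items()}


# Invented records, for illustration only.
census = [
    Publication("Univ A", "History", "Author 1", "Book X", 10),
    Publication("Univ B", "History", "Author 2", "Article Y", 30),
    Publication("Univ A", "Physics", "Author 3", "Article Z", 300),
    Publication("Univ B", "Physics", "Author 4", "Article W", 100),
]

scores = department_scores(census)
for dept, score in sorted(scores.items()):
    print(dept, round(score, 2))
```

In this toy data the history department at "Univ B" scores above its discipline average even though its raw citation count is far below either physics department's, which is the kind of cross-discipline distortion the analysts' controls are meant to remove. A real exercise would also need controls for career length, publication age and other factors.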

Apart from saving massively on everyone’s time, this approach would produce soundly-based census information on research performance. Ministers and officials would know with far more accuracy than ever before what English research funding produces in terms of academic outputs. Universities (and every academic department within them) would be able to base their decision-making about academic priorities on genuine evidence of what work is being cited and having influence, and what is not.

And academics themselves would find the whole exercise enormously time-saving and stress-saving. Indeed, using the Google-Harzing system can be a liberating and genuinely useful experience for any serious scholar. All of us need every help we can get to better understand how to do more of what really matters academically, and to avoid spending precious time on ‘shelf-bending’ publications that no one else in academia cites or uses.

Is this all pie in the sky? Is it just a dream to hope that even at this late stage we might give up the cumbersome and expensive REF 2013 process and transition instead to a modern, digital, open-source and open-book method for reporting the UK’s research performance? Well, it may be too late for 2013, but political life has seen stranger and faster changes than recommended here. And the digital census alternative to REF’s deformed ‘eyeball everything once’ process is not going to go away. If not in 2013, then by 2018 the future of research assessment lies with digital self-regulation and completely open-book processes.

Note: The ‘Publish or Perish’ programme recommended here is a small piece of software downloaded onto your PC that allows every academic to keep easily up to date on how their publications are being cited. It is available free on http://www.harzing.com/pop.htm

The software was developed by Anne-Wil Harzing, Professor of International Management at the Faculty of Business and Economics in the University of Melbourne, Australia. As well as publishing extensively in the area of international and cross-cultural management, Professor Harzing is a leading figure in internet-age bibliometrics.

On 13 June, the LSE Public Policy Group is holding a Conference on Investigating Academic Impact. This one-day conference will look at a range of issues surrounding the impact of academic work on government, business, communities and public debate. We will discuss what impact is, how impacts happen and innovative ways that academics can communicate their work. Practical sessions will look at how academic work has impact among policymaking and business communities, how academic communication can be improved, and how individual academics can easily start to assess their own impact.

The event is free to attend but registration is essential. Please contact the PPG team via Impactofsocialsciences@lse.ac.uk.

I am very much in favor of using quantitative assessment of this sort. Unfortunately ERA has taken a further step away from this in the 2012 ERA plans. They are dropping the ranked journal list that was used in the humanities and most social sciences in addition to peer review, so it will only be peer review. In psychology and the natural sciences they use Scopus to do citation analysis. Currently, I think Google Scholar is very noisy. I think it would be challenged so much by people who disagree with the results that this wouldn’t really help the process a lot. PoP does make GS more user friendly. But for people with common names it can be hard to come up with a reasonably full citation count. Even for me, it requires reassembling GS results in a spreadsheet to merge two different searches, which I can’t see a way to do inside PoP. DI Stern is a unique name but D Stern is common. Quite a lot of my citations are listed with just one initial. Searching D Stern without limiting subject area produces too many results. Limiting it to “business” produces usable results. But limiting DI Stern to “business” misses a lot of my work. So the two separate searches need to be merged offline. Luckily I don’t have a really common name like Liu (like my wife) or Smith etc.
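The offline merge described in this comment could be sketched along the following lines. Everything here is hypothetical: the record fields, the function names and the sample entries are invented, and this is not a feature of Publish or Perish or Google Scholar. Matching on a normalised title plus year, and keeping the higher of two citation counts, are assumptions; where Scholar has split one item into two entries, summing the counts may be the better choice.

```python
def normalise(title):
    """Lower-case a title and drop punctuation so near-identical entries match."""
    kept = "".join(ch.lower() if ch.isalnum() else " " for ch in title)
    return " ".join(kept.split())


def merge_searches(*result_sets):
    """Merge several lists of records, keeping one record per (title, year)."""
    merged = {}
    for results in result_sets:
        for rec in results:
            key = (normalise(rec["title"]), rec["year"])
            # If the same item appears in more than one search,
            # keep the record with the higher citation count.
            if key not in merged or rec["citations"] > merged[key]["citations"]:
                merged[key] = rec
    return list(merged.values())


# Invented records standing in for two exported searches.
search_full_initials = [
    {"title": "Paper One", "year": 2004, "citations": 120},
    {"title": "Paper Two", "year": 2008, "citations": 15},
]
search_one_initial = [
    {"title": "Paper one.", "year": 2004, "citations": 118},
    {"title": "Paper Three", "year": 2010, "citations": 7},
]

combined = merge_searches(search_full_initials, search_one_initial)
total = sum(r["citations"] for r in combined)
print(len(combined), "distinct items,", total, "citations")
```

A merge like this still needs a manual check afterwards, since works by other authors with the same surname and initial would pass straight through it.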