Jobs, grants, prestige and career advancement are all partially based on an admittedly flawed concept: the journal Impact Factor. Impact factors have been growing increasingly meaningless since 1991, writes George Lozano, who finds that the variance of papers’ citation rates around their journals’ IF has been rising steadily.


Thomson Reuters assigns most journals a yearly Impact Factor (IF), defined as the mean number of citations received during that year by the papers the journal published in the previous two years. The IF has been repeatedly criticized for many well-known and openly acknowledged reasons. However, editors continue to try to increase their journals’ IFs, and researchers continue to try to publish their work in the journals with the highest IF, which creates the perception of a mutually reinforcing measure of quality. More disturbingly, although it is easy enough to measure the citation rate of any individual author, a journal’s IF is often extended to indirectly assess individual researchers. Jobs, grants, prestige, and career advancement are all partially based on an admittedly flawed concept. A recent analysis by Vincent Larivière, Yves Gingras and me identifies one more, perhaps bigger, problem: since about 1990, the IF has been losing its very meaning.

Impact factors were developed in the early 20th century to help American university libraries with their journal purchasing decisions. As intended, IFs deeply affected journal circulation and availability. Even by the time the current IF (defined above) was devised, in the 1960s, articles were still physically bound to their respective journals. However, how often these days do you hold actual issues of printed journals in your hands?

Until about 20 years ago, printed, physical journals were the main way in which scientific communication was disseminated. We had personal subscriptions to our favourite journals, and when an issue appeared in our mailboxes (our physical mailboxes), we perused the papers and spent the afternoon avidly reading the most interesting ones. Some of us also had a favourite day of the week on which we went to the library and leafed through the ‘current issues’ section of a wider set of journals, and perhaps photocopied a few papers for our reprint collection.

Those days are gone. Now we conduct electronic literature searches on specific subjects, using keywords, author names, and citation trees. As long as the papers are available digitally, they can be downloaded and read individually, regardless of the journal whence they came, or the journal’s IF.

This change in our reading patterns, whereby papers are no longer bound to their respective journals, led us to predict that over the past 20 years the relationship between IF and papers’ citation rates must have been weakening.

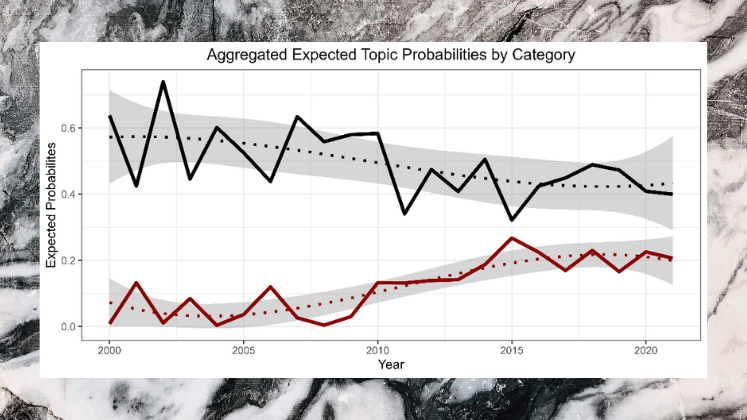

Using a dataset of over 29 million papers and 800 million citations, we showed that from 1902 to 1990 the relationship between IF and paper citations had been growing stronger, but, as predicted, since 1991 the opposite has been true: the variance of papers’ citation rates around their respective journals’ IF has been steadily increasing. Currently, the strength of the relationship between IF and paper citation rate is down to levels last seen around 1970.
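One plausible way to quantify “the strength of the relationship” for each year is the squared Pearson correlation (r²) between papers’ citation counts and their journals’ IFs: a falling r² means that citations scatter more widely around the IF. The sketch below assumes this measure and a hypothetical flat list of `(year, journal_if, paper_citations)` records; the function names are illustrative, and it is not necessarily the exact procedure used in the study.

```python
from collections import defaultdict


def r_squared(xs: list[float], ys: list[float]) -> float:
    """Squared Pearson correlation between xs and ys."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("r^2 is undefined when either variable is constant")
    return sxy * sxy / (sxx * syy)


def yearly_r_squared(records):
    """records: iterable of (year, journal_if, paper_citations) tuples.

    Returns {year: r^2} in year order. Lower values mean papers' citations
    are less well predicted by their journals' IF.
    """
    by_year = defaultdict(lambda: ([], []))
    for year, jif, cites in records:
        by_year[year][0].append(jif)
        by_year[year][1].append(cites)
    return {year: r_squared(xs, ys) for year, (xs, ys) in sorted(by_year.items())}


records = [
    (1990, 1, 2), (1990, 2, 4), (1990, 3, 6),  # citations track IF exactly
    (2000, 1, 5), (2000, 2, 1), (2000, 3, 6),  # citations scatter around IF
]
print(yearly_r_squared(records))
```

With these toy records r² is 1.0 in 1990 and 1/28 in 2000, mimicking in miniature the weakening relationship described above.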

Furthermore, we found that until 1990 the proportion of top (i.e., most cited) papers published in the top (i.e., highest-IF) journals had been increasing. So the top journals were becoming the exclusive repositories of the most cited research. Since 1991, however, the pattern has been the exact opposite: among top papers, the proportion NOT published in top journals, which had been decreasing, is now increasing. Hence the best (i.e., most cited) work now comes from increasingly diverse sources, irrespective of the journals’ IFs.
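The top-papers-in-top-journals statistic can be sketched as follows for a single publication year. The cut-offs `paper_frac` and `journal_frac`, which define “top” as a fixed fraction of papers by citations and of journals by IF, are assumptions for illustration, as are the function name and input layout. The proportion NOT published in top journals is simply one minus the returned value.

```python
def share_of_top_papers_in_top_journals(papers, paper_frac=0.05, journal_frac=0.05):
    """papers: list of (journal, journal_if, citations) for one publication year.

    Returns the fraction of the most-cited papers that appeared in the
    highest-IF journals. Ties keep input order, because sorted() is stable.
    """
    # Rank distinct journals by IF and keep the top fraction (at least one).
    journal_ifs = {journal: jif for journal, jif, _ in papers}
    ranked_journals = sorted(journal_ifs, key=journal_ifs.get, reverse=True)
    n_top_journals = max(1, round(len(ranked_journals) * journal_frac))
    top_journals = set(ranked_journals[:n_top_journals])

    # Rank papers by citations and keep the top fraction (at least one).
    ranked_papers = sorted(papers, key=lambda p: p[2], reverse=True)
    n_top_papers = max(1, round(len(ranked_papers) * paper_frac))
    top_papers = ranked_papers[:n_top_papers]

    in_top = sum(1 for journal, _, _ in top_papers if journal in top_journals)
    return in_top / n_top_papers


papers = [
    ("A", 10.0, 50), ("B", 2.0, 40), ("A", 10.0, 5),
    ("C", 1.0, 30), ("B", 2.0, 1), ("C", 1.0, 0),
]
share = share_of_top_papers_in_top_journals(papers, paper_frac=0.5, journal_frac=0.34)
print(f"top papers in top journals: {share:.2f}; elsewhere: {1 - share:.2f}")
```

Here journal A is the only “top” journal, and only one of the three most cited papers appeared in it. Tracking this share year by year would show the trend described above.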

If the pattern continues, the usefulness of the IF will continue to decline, which will have profound implications for science and science publishing. For instance, in their effort to attract high-quality papers, journals might have to shift their attention away from their IFs and instead focus on other issues, such as increasing online availability, decreasing publication costs while improving post-acceptance production assistance, and ensuring a fast, fair and professional review process.

At some institutions researchers receive a cash reward for publishing a paper in journals with a high IF, usually Nature and Science. These rewards can be significant, amounting to up to $3K USD in South Korea and up to $50K USD in China. In Pakistan a $20K reward is possible for cumulative yearly totals. In Europe and North America the relationship between publishing in high-IF journals and financial rewards is not as explicitly defined, but it is still present. Job offers, research grants and career advancement are partially based not only on the number of publications but also on the perceived prestige of the respective journals, with journal “prestige” usually meaning IF.

I am personally in favour of rewarding good work, but the reward ought to be based on something more tangible than the journal’s IF. There is no need to use the IF; it is easy enough to obtain the impact of individual papers, if you are willing to wait a few years. For people who still want to use the IF, the delay would even make it possible to apply a correction for the fact that, independently of paper quality, papers in high-IF journals simply get cited more often. So, of two equally cited papers, the one published in a low-IF journal ought to be considered “better” than the one published in an elite journal. Imagine receiving a $50K reward for a Nature paper that never gets cited! As the relationship between IF and paper quality continues to weaken, such simplistic cash-per-paper practices based on journal IFs will likely be abandoned.
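The correction suggested above can be illustrated with one simple, hypothetical score: a paper’s citations divided by its journal’s IF, i.e. how the paper performed relative to the average paper in its own journal. The function name and the choice of a plain ratio are assumptions rather than an established standard; for a fair comparison, the paper’s citations should be counted over the same window the IF uses.

```python
def relative_impact(paper_citations: float, journal_if: float) -> float:
    """Paper citations divided by the journal's IF (its mean citations per paper).

    Values above 1 mean the paper was cited more than the average paper in its
    journal; values below 1 mean it was cited less.
    """
    if journal_if <= 0:
        raise ValueError("journal_if must be positive")
    return paper_citations / journal_if


# Two hypothetical papers with the same citation count in different journals.
papers = {
    "elite-journal paper": (10, 30.0),
    "low-IF-journal paper": (10, 2.0),
}
for name, (cites, jif) in papers.items():
    print(f"{name}: {relative_impact(cites, jif):.2f}")
```

With equal citations, the low-IF-journal paper scores 5.00 while the elite-journal paper scores 0.33, and an uncited Nature paper would score 0 whatever the journal’s IF.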

Finally, knowing that their papers will stand on their own might also encourage researchers to abandon their fixation on high IF journals. Journals with established reputations might remain preferable for a while, but in general, the incentive to publish exclusively in high IF journals will diminish. Science will become more democratic; a larger number of editors and reviewers will decide what gets published, and the scientific community at large will decide which papers get cited, independently of journal IFs.

Note: This article gives the views of the author(s), and not the position of the Impact of Social Sciences blog, nor of the London School of Economics

A useful article. However, given that the shift in publishing toward database access, which provides an alternative to single-journal subscriptions, has been occurring for some time, it would be useful to combine the research with other indices and metrics, e.g. the Eigenfactor and the h-index; the collective prowess of the available metrics is also significant.

Readers might also be interested in “What do the scientists think about the impact factor?”

http://www.springerlink.com/content/mk1w6m8117kl374n/

What if Impact was actually about impact on society at large? Surely, if the taxpayer is paying for research, quality should at least partially measure ROI, whether in cash, in kind or in other, less tangible factors: social cohesion, efficiency, quality of life.

Yes, that is exactly what impact means. And yes, the relationship between investment and impact is often ignored. I wrote another paper about that a while ago.

http://www.georgealozano.com/papers/mine/Lozano2010-IPD.pdf

“Impact factors were developed in the early 20th century…” Really?

1927 to be exact. The currently most widely used IF came later.

Archambault, É. & Larivière, V. (2009). History of the journal impact factor: contingencies and consequences. Scientometrics, 79, 635-649.

Also, IF and citations depend on the field of study. For example, a paper in the medical field will tend to be cited more often than a paper on fermentation.

“journals might have to shift their attention away from their IFs and instead focus on other issues, such as increasing online availability, decreasing publication costs while improving post-acceptance production assistance, and ensuring a fast, fair and professional review process.”

Um, yeah. As it has been for decades.

A great and interesting analysis based on an objective assessment. However, because it is more difficult to get published in high-IF journals, as peers wish to see a substantial contribution and knowledge of the field, getting published in high-IF journals remains a mark of quality, regardless of the poor objective fit and what the IF originally stood for.