Qualitative and quantitative research methods have long been asserted as distinctly separate, but to what end? Howard Aldrich argues the simple dichotomy fails to account for the breadth of collection and analysis techniques currently in use. But institutional norms and practices keep alive the implicit message that non-statistical approaches are somehow less rigorous than statistical ones.

Over the past year, I’ve met with many doctoral students and junior faculty in my travels around the United States and Europe, all of them eager to share information with me about their research. Invariably, at every stop, at least one person will volunteer the information that “I’m doing a qualitative study of…” When I probe for what’s behind this statement, I discover a diversity of data collection and analysis strategies that have been concealed by the label “qualitative.” They are doing participant observation ethnographic fieldwork, archival data collection, long unstructured interviews, simple observational studies, and a variety of other approaches. What seems to link this heterogeneous set is an emphasis on not using the latest high-powered statistical techniques to analyze data that’s been arranged in the form of counts of something or other. The implicit contrast category to “qualitative” is “quantitative.” Beyond that, however, commonalities are few.

Here I want to offer my own personal reflections on why I urge abandoning the dichotomy between “qualitative” and “quantitative,” although I hope readers will consult the important recent essays by Pearce and Morgan for more comprehensive reviews of the history of this distinction. For a variety of reasons, some people began making a distinction more than four decades ago between what they perceived as two types of research – quantitative and qualitative – with research generating data that could be manipulated statistically seen as generally more scientific.

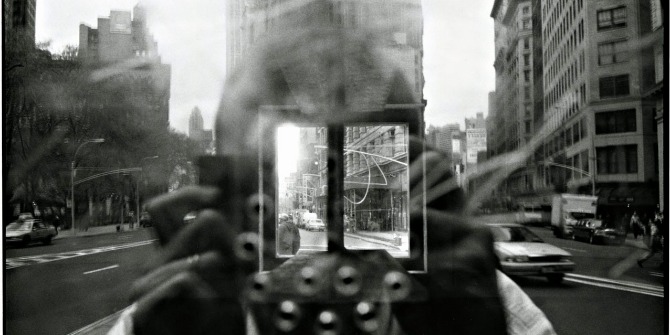

Image credit: Libby Levi for opensource.com via flickr.com (CC BY-SA)

I’ve endured this distinction for so long that I had begun to take it for granted, a seemingly fixed property in the firmament of social science data collection and analysis strategies. However, I’ve never been happy with the distinction and about a decade ago, began challenging people who label themselves this way. I was puzzled by the responses I received, which often took on a remorseful tone, as if somehow researchers had to apologize for the methodological strategies they had chosen. To the extent that my perception is accurate, I believe their tone stems from the persistent way in which non-statistical approaches have been marginalized in many departments. However, it also seemed as though the people I talked with had accepted the evaluative nature of the distinction. As Lamont and Swidler might say, these researchers had bought into “methodological tribalism.”

Such responses upset me so much that I have now taken to asking people, so, you are saying you don’t “count” things? And, accordingly, you do research that “doesn’t count”?!

Why would any researcher accept such second-class status for what they do? Cloaking one’s research with the label of “qualitative” implicitly contrasts it with a higher order and more desirable brand of research, labeled “quantitative.” This is nonsense, of course, for several reasons.

First, methods of data collection do not automatically determine methods of data analysis. Information collected through ethnography (see Kleinman, Copp, and Henderson), perusal of archival documents, semi-structured interviews, unstructured interviews, study of photographs and maps, and other methods that might initially yield non-numerical information can often be coded into categories that can subsequently be statistically manipulated. For example, the recording and processing of ethnographic notes by programs such as NVivo and Atlas.ti yields systematic information that can be coded, classified, and categorized and then “counted” in a variety of ways. Thus, an ethnographer interested in a numerical indicator of social status within an emergent group could count the number of instances of deferential speech directed toward a (presumed) high status person or the number of interruptions made by (presumed) high status people into the conversations of others. Note that the meaning of what has been observed derives not from “counting” something but rather from understanding how to interpret what was observed, with the counts helping to judge the strength of the interpretation. The interpretation depends upon a researcher’s understanding of the social context for what was observed.
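As a rough illustration of the counting described above, here is a minimal Python sketch. It assumes the fieldnotes have already been coded by hand or exported from a package such as NVivo or Atlas.ti; the tuple layout, the code labels, the participant names, and the `tally` helper are all hypothetical and do not reflect either program's actual export format.

```python
from collections import Counter

# Hypothetical coded fieldnote segments: (actor, target, code).
# Names and code labels are invented for illustration only.
coded_segments = [
    ("Ana", "Ben", "interruption"),
    ("Ben", "Ana", "deferential_speech"),
    ("Ana", "Cal", "interruption"),
    ("Cal", "Ana", "deferential_speech"),
    ("Ben", "Cal", "interruption"),
    ("Cal", "Ana", "deferential_speech"),
]


def tally(segments, code, role):
    """Count segments carrying `code`, grouped by the actor or the target."""
    index = 0 if role == "actor" else 1
    return Counter(seg[index] for seg in segments if seg[2] == code)


# Interruptions made by each person, and deferential speech received by each.
interruptions_made = tally(coded_segments, "interruption", "actor")
deference_received = tally(coded_segments, "deferential_speech", "target")

print(interruptions_made.most_common())
print(deference_received.most_common())
```

The tallies only summarize what the researcher has already interpreted: deciding that a given utterance counts as “deferential” is the interpretive work, and the counts help judge how strongly the observations support that reading.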

I believe some of the controversy over what standards to apply to assessing so-called “qualitative” research stems from observers confounding methods of data collection with methods of data analysis. For example, Lareau was quite critical of LaRossa’s suggestions to authors and reviewers of “qualitative” manuscripts because she felt that he had imported some terms from “quantitative” research that were inappropriate for what she viewed as good “qualitative” research, e.g. terms such as “hypothesis” and “variables.” She saw his suggestions as imposing “a relatively narrow conception of what it means to be scientific.” Although she supported his call to improve our analytic understanding of social processes, she also seemed to view data collection and data analysis as inextricably intertwined in the research process. I do not share this view. Even in grounded theory approaches, practitioners distinguish between the collection and analysis of data, although there might be very little time lag between “collection” and “analysis.”

To be clear: not all information collected through the various methods I’ve described can be neatly ordered and classified into categories subject to statistical manipulation. Forcing interpretive reports into a Procrustean bed of cross tabulations and correlations makes no sense. Skillful analysts working with deep knowledge of the social processes they are studying can construct narratives without numbers. It all depends on the question they want to answer, and how.

Second, standards of evidence required to support empirical generalizations do not differ by the method of data collection. Researchers who claim “qualitative” status for their research must meet the same standards of validity and reliability as other researchers. Regardless of whether information is collected through highly structured computerized surveys or semi-structured interviewing in the field, researchers must still demonstrate that their indicators are valid and reliable. Ethnographers must provide sufficient information via “thick description” to convince readers that they were in a position to actually observe the interaction they are interpreting, just as demographers using federal census data must convince readers that the questions they are interpreting were framed without bias.

When did this pattern of apologies for “qualitative” research start? Based on my own experience and Morgan’s review, I would say “something happened” in the late 1970s. Back in 1965, when I was doing a one-year ethnography class with John Lofland, at the University of Michigan, no one used the term “qualitative research.” My colleagues in the course – all of whom were doing field-work based MA theses, as I recall – might have described their work as doing “grounded theory,” as we were using a mimeographed version of Glaser and Strauss’s book. The ethnography class was an alternative track to the Detroit Area Study survey research course, and neither course was described as a substitute for the required statistics courses. Field work and survey research were just alternative ways of collecting data.

I published several articles from that ethnography class, including a paper in the inaugural issue of Urban Life and Culture, begun in 1972. That journal is now called the Journal of Contemporary Ethnography. As I recall, no one ever asked me why I was doing “qualitative research,” nor did I ever describe it in those terms. The journal Qualitative Sociology began in 1978 and so I assume the phrase “qualitative sociology” was beginning to percolate into sociological writings around that time. In 1983, Lance Kurke and I published a non-participant observational study of four executives in Management Science, replicating Henry Mintzberg’s research from the early 1970s. We described it as a “field study” during which we had spent one week with each executive and mentioned that we had also conducted semi-structured interviews with them. The phrase “qualitative research” did not appear in the article. The terms “field study” and “non-participant observation” captured perfectly how the project was carried out.

Am I “blaming the victims” for their continuing to use the labels “qualitative” and “quantitative”? To be clear, there are several institutional factors that explain why some people persist in using these terms, and they clearly transcend individual characteristics, as I have found the terms used not only in North America but also globally. Mario Small offered at least three explanations. First, the availability of large data sets has made it easier for researchers to conduct statistical analyses in their research. Second, journal reviewers apply inappropriate standards when they try to evaluate research that uses ethnographic or other less frequently used data collection techniques. Third, foundations and agencies have gravitated toward research labeled as “quantitative” because they see it as higher prestige or more policy relevant. My colleague, Laura Lopez-Sanders, noted a fourth possible reason: departments that offer a “methods sequence” often overlook or downplay ethnographic and other methods that are seen as not leading to easily quantifiable data for statistical analysis. Students pick up on the implicit message that statistical methods carry a higher priority than other methods of analysis.

To the extent that institutional norms and practices keep alive the implicit message that there really are “quantitative” and “qualitative” methods, they will be available for use by graduate students and junior faculty. Their availability, however, does not mandate their use. The point of this blog post is to make readers more reflexive about how they choose to describe their work.

So, if you are doing an ethnography, constructing a narrative using historical records, rummaging through old archives, building agent-based models, or doing just about anything else, for that matter, tell that to the next person who asks what kind of research you do. Don’t automatically say “I’m doing qualitative research.” You might want to describe in some detail what data collection and data analysis methods you are actually using. Explain the fit between the questions you’re asking and the type of empirical evidence you are gathering and how you are analyzing it. If the person says, “oh, you are doing qualitative research,” tell them you don’t know what they mean. You’re just doing good research.

This piece originally appeared on the Work in Progress blog of the American Sociological Association’s Organizations, Occupations, and Work Section and is reposted with permission.

Note: This article gives the views of the author, and not the position of the Impact of Social Science blog, nor of the London School of Economics. Please review our Comments Policy if you have any concerns on posting a comment below.

Howard E. Aldrich is Kenan Professor of Sociology, Adjunct Professor of Business at the University of North Carolina, Chapel Hill, Faculty Research Associate at the Department of Strategy & Entrepreneurship, Fuqua School of Business, Duke University, and Fellow, Sidney Sussex College, Cambridge University. His main research interests are entrepreneurship, entrepreneurial team formation, gender and entrepreneurship, and evolutionary theory.

I share the sentiments expressed by the author as I have had the difficulty of explaining ‘action research’ to colleagues who carry out ‘traditional quantitative research’. Action research may utilize many forms of research within the two areas of both qualitative and quantitative research. Should we abandon the ‘Q’ words then or should we combine them to achieve the benefits that both provide? What are the feelings on ‘mixed methods’?

Great article discussing the importance of ‘qualitative’ research. Whilst I agree on the importance of rigour, when ‘qualitative’ research is paradigmatically different from quantitative research, using language from quantitative studies like validity and reliability actually reinforces the (ostensive) primacy of quantitative research. Other evaluative criteria such as transparency, theoretical contribution etc. are arguably better suited to ‘qualitative’ research that takes a hermeneutic or critical lens.

I have my concerns when terms are rejected only because they are used in quantitative research. Reliability might be difficult to implement in frameworks that question intersubjectivity, but validity asks for whether we are examining what we actually want to analyze (in simple terms). I find this highly relevant for any empirical research. Qualitative research should part ways with quantitative research when it comes to ways to assess validity (e.g., Onwuegbuzie, Anthony and Beth L. Leech (2007): Validity and Qualitative Research: An Oxymoron? Quality and Quantity 41 (2): 233-249. doi: 10.1007/s11135-006-9000-3)

I think the terms “quantitative” and “qualitative” carry important information about the basis of one’s (causal) arguments. In short and simplifying, they communicate whether inferences are based on a number (or some numbers) summarizing data, or whether they are based on a diverse body of evidence. Besides, the problem that Aldrich sees, which I agree is there, does not disappear if we stop using the Q words. Without them, it becomes a small-n vs large-n distinction (n being the number of cases) or a depth vs breadth distinction. The people that now favor quantitative over qualitative research then favor breadth and large-n studies over depth and small-n studies. For these reasons, I see more benefits than downsides in using the Q words.

While it makes sense to say that the use of multiple approaches to research design would enlighten us to see the multiple dimensions of knowledge, the distinctions between qualitative and quantitative ‘traditions’ are too broad to reconcile. A reconciliation is possible only if both traditions agree on the nature of truth and the best way to access it – the war has been on for quite some time, with each side self-declaring victory from day 1.

Interesting topic. I totally agree. I tried to disentangle qual/quant approach, method, data, analysis etc. in the publication below. It was extremely difficult to move beyond the qual/quant binary without reverting to that terminology. The devil, I would argue, is in the detail (or the understanding) rather than in the terms used.

https://www.researchgate.net/publication/262380458_Qualitative_Research_Rules__Using_Qualitative_and_Ethnographic_Methods_to_Access_the_Human_Dimensions_of_Technology

Aldrich based his views on the fact that qualitative software packages tend to translate categories and codes into quantities, thus making decisions about results contingent upon the quantities. Aldrich should also consider a situation where the data collected through ethnography, for instance, is not analysed using any software, but rather using an approach of merely synthesizing the result.

Interestingly, in my training the distinction between qualitative and quantitative approaches had a political value before it had a descriptive one (methods of data production and analysis): quantitative research, at least here in South America, is associated with an objectivist research that often renders epistemological and political questions invisible (i.e., the effects of the conceptual categories in use or of the investigation as a whole). In that sense, it seems that qualitative approaches take first place (although their ability to influence public debate remains limited). In any event, I share the idea of no longer using this distinction to describe an investigation, but only at the conversational level with our colleagues, because when writing something, that same approach would likely lead to an operationalism without depth.

I have a few reactions to this post. The first is to the point about standards of validity and reliability being the same across methodologies, i.e., the obligation of the researcher to convince the reader that the researcher was in a position to assess the phenomenon for which s/he claims to have findings. This is true for both qual and quant.

Per Aldrich’s point about qualitative data being countable, I also thought of Hodson’s Dignity at Work book (and several other papers using the ethnography database he and colleagues created). What a powerful dataset that has been for building theory and evidence in Orgs and Work!

To his point about “the interpretation depends upon a researcher’s understanding of the social context for what was observed” – this reminds me of the philosophy behind calibrating measures in QCA. The methodological precedent there is, the researcher selects a threshold/cut point for being “in” or “out” of the given condition, drawing on his/her knowledge of the context to justify it.

Finally, a related pattern I thought of that seems to signal institutional norms in the inferior treatment of qual methods is that in management journals, the ordering of qual-then-quant in journal articles is the norm. “Qual,” as it were, can never “prove the day;” it can only generate questions. Which I think is wrong. See Kaplan’s (2015) paper on the ability of both quant and qual methods to generate questions, which to me suggests that there is no reason why mixed methods studies need to always follow the same ordering.