The JournalismAI Fellowship began in June 2022 with 46 journalists and technologists from news organisations around the world, collaborating on using artificial intelligence to enhance their journalism. At the halfway mark of the six-month programme, our teams of fellows describe their journey so far, the progress they’ve made, and what they’ve learned along the way. In this blog post, you’ll hear from team Parrot.

Parrot is a tool and methodology to help journalists identify and measure the spread of manipulated narratives from state-controlled media. Using AI, we will develop an early warning system that clusters and classifies text generated by state media and then detects coordinated efforts to disseminate it.

Parrot is being developed as part of a collaboration between The Times & Sunday Times and Ippen Media in the context of the JournalismAI Fellowship.

So far, we’ve hit a few walls. We’ve struggled to gain academic access to Twitter, which we need in order to source data on how narratives are disseminated on social media. To keep our goals achievable, we’ve narrowed the scope of the project, for example by initially focusing on English-language content from Russian state media.

But we’ve also had some successes. We’ve created a narrative clustering tool that collates articles on similar topics and rates how propagandistic the language within them is. Our dataset of over 25,000 articles from Russian media that mention Ukraine was clustered into topics using Top2Vec. The articles within each topic were then fed into a linguistic propaganda model that flags sections of text containing propagandistic language. By counting how often propagandistic techniques are identified, we can create a metric for how propagandistic each topic is.
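To make the scoring step more concrete, here is a minimal Python sketch of a count-based topic metric. It assumes the topic assignments (as a topic model such as Top2Vec would produce) and the techniques flagged in each article are already available as plain dictionaries. The function name `propaganda_scores`, the input format and the technique labels are hypothetical and not taken from Parrot’s code. The per-article rate is also an added assumption, included because a raw count naturally favours larger topics.

```python
from collections import Counter


def propaganda_scores(topic_of_article, techniques_by_article):
    """Score each topic by how often propaganda techniques were detected.

    topic_of_article: maps article ID -> topic ID (e.g. from a topic model).
    techniques_by_article: maps article ID -> list of technique labels
        flagged in that article (hypothetical format).
    Returns a dict mapping topic ID -> (raw_count, count_per_article).
    """
    detections = Counter()  # total technique detections per topic
    sizes = Counter()       # number of articles per topic
    for article_id, topic_id in topic_of_article.items():
        sizes[topic_id] += 1
        detections[topic_id] += len(techniques_by_article.get(article_id, []))
    return {t: (detections[t], detections[t] / sizes[t]) for t in sizes}


# Toy example with made-up article IDs and technique labels.
topic_of_article = {"a1": 0, "a2": 0, "a3": 1}
techniques_by_article = {
    "a1": ["loaded_language", "name_calling"],
    "a2": ["loaded_language"],
    "a3": [],
}
scores = propaganda_scores(topic_of_article, techniques_by_article)

# Rank topics from most to least propagandistic by raw count.
for topic_id, (raw, per_article) in sorted(
    scores.items(), key=lambda item: item[1][0], reverse=True
):
    print(topic_id, raw, per_article)
```

Whether the raw count or the per-article rate is the better signal is itself a judgement call. The rate is less sensitive to topic size, but it can be noisy for very small clusters.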

We are currently evaluating how well this model works. This has included a manual exercise to identify which of the 194 discovered topics we would consider to be narratives: for each cluster, deciding whether its articles are examples of a propagated narrative and, if so, what that narrative is.

We’ve been lucky to receive feedback from experts specialising in natural language processing, disinformation dissemination and topic modelling.

One of the core issues we have faced since delving into the project is the non-trivial nature of what we are trying to achieve, a point raised by one of the project facilitators, Jeremy Gilbert, who leads the Knight Lab at Northwestern University. Deciding what is or isn’t a manipulated narrative is highly subjective, and it involves many value judgements that a human would need to make.

Gilbert offered a useful principle for anyone involved in AI projects to keep in mind: if a human cannot identify the thing or perform the task you want a system to do, you are very unlikely to succeed in building that system. The ability to identify concrete examples of the problem you are solving is a key, and often undervalued, aspect of AI research and development.

We’ve taken this in our stride and are being mindful of where our own subjective value judgements enter our methodology as we develop it.

As a next step, we plan to analyse social media data and tie it to our narrative clustering model. Afterwards, we’ll develop and implement a solution to visualise propagation patterns. Hopefully, this will serve as a precursor to our early warning system.

The Parrot team is made up of:

- Venetia Menzies, Data and Digital Journalist, The Times & Sunday Times (UK)

- Ademola Bello, Data Journalist, The Times & Sunday Times (UK)

- Alessandro Alviani, Product Owner, Ippen Media (Germany)

- Simone Di Stefano, Data Engineer, Ippen Media (Germany)

Do you have skills and expertise that could help team Parrot? Get in touch by sending an email to Fellowship Manager Lakshmi Sivadas at lakshmi@journalismai.info.

JournalismAI is a global initiative of Polis and is supported by the Google News Initiative. Our mission is to empower news organisations to use artificial intelligence responsibly.

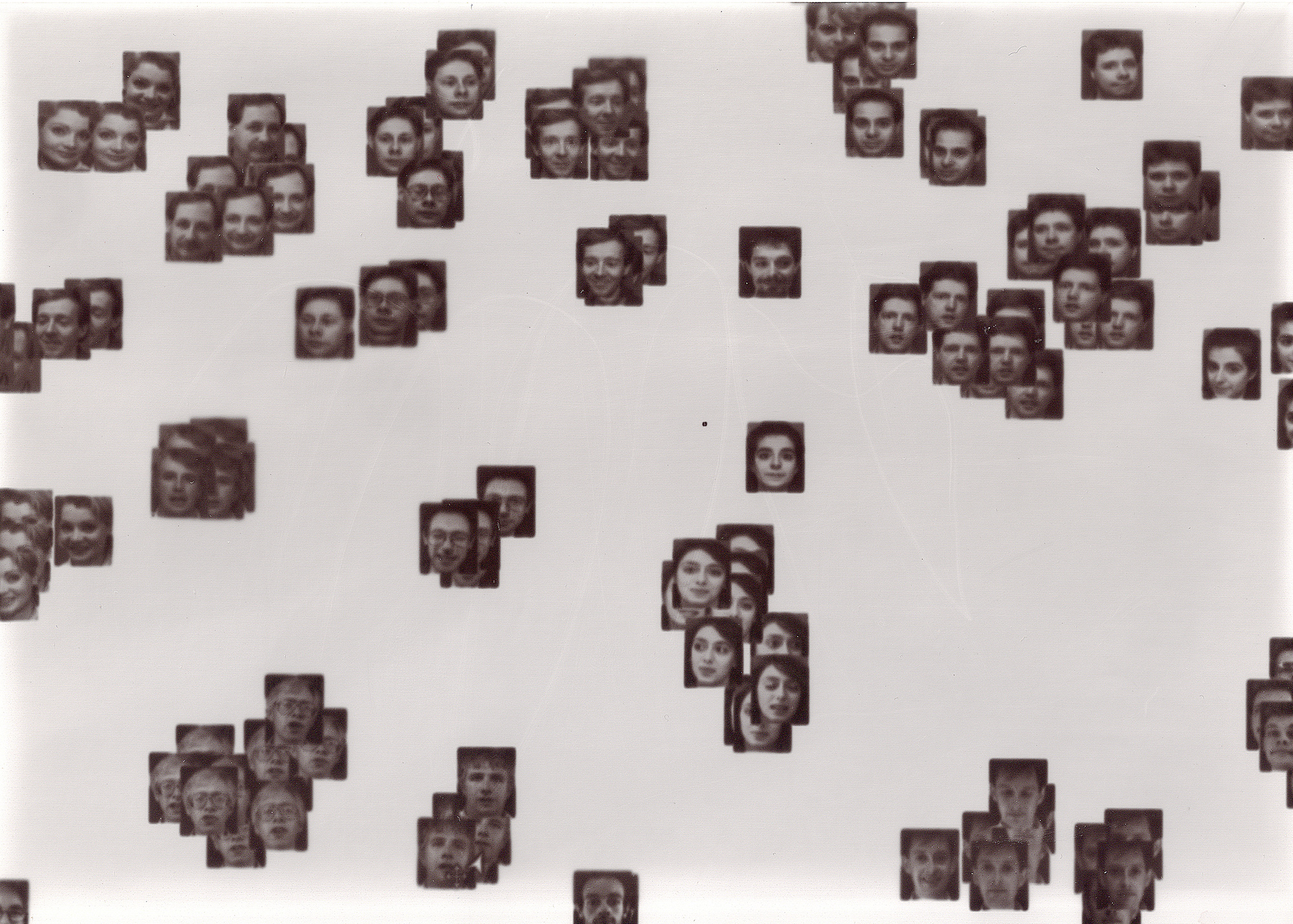

Header image: Alexa Steinbrück / Better Images of AI / Explainable AI / CC-BY 4.0