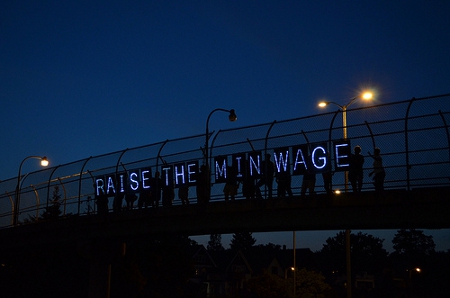

Recent months have seen President Obama make a renewed push to address inequality in the U.S., especially via one policy lever he has focused on previously: raising the minimum wage. For many, conventional economic wisdom states that raising the minimum wage costs jobs, as employers are less willing to take on staff at higher rates of pay. Alan Manning takes a close look at the economics and the evidence of these claims, finding that one of their basic assumptions, that labor markets are highly competitive, does not hold. He argues that in light of this, and of empirical evidence from academic studies of wages and employment, it is very difficult to claim that wages have a significant effect on employment in either direction.

A generation ago, the vast majority of economists would have said that a rise in the minimum wage inevitably costs jobs. It must, they would have said, if a basic principle of economics – that the demand for labor falls as wages rise – is correct. In the current debate over the federal minimum wage, many opponents of the increase have argued that any rise poses too great a risk to the fragile economic recovery. However, recent research shows this truism to be oversimplified and not reflective of the realities of the labor market. Rather than automatically reducing employment, an increased minimum wage produces mixed outcomes.

Until the mid-1990s, almost all studies of the minimum wage supported the idea of an unwelcome trade-off between wage regulation and employment. But the cozy consensus was shattered by the research of two economists, David Card and Alan Krueger, then both at Princeton. They argued that the actual evidence linking the minimum wage to job losses was weak. More important, they offered new analyses concluding that the minimum wage – at the levels observed in the United States – had no effect on employment, and might even raise it! Their seminal study compared employment in fast-food restaurants in two adjacent states, New Jersey and Pennsylvania, after New Jersey raised its minimum wage.

It’s important to bear in mind – certainly Card and Krueger did – that there is still universal agreement that employment is sensitive to a wage floor when it reaches some level. But the two economists were equally convinced by their research that, in the American context, modest increases in the minimum had no effect on jobs.

To say the Card-Krueger research generated controversy is an understatement. Its publication coincided with a debate over raising the federal minimum wage, so the ensuing academic dispute was inextricably mixed with politics. Other academics testified to Congress that the Card-Krueger conclusion amounted to a rejection of the economist’s version of the theory of gravity – and that evidence of antimatter should be treated with great skepticism.

The battle lines haven’t changed much since then. For example, the White House’s 2013 fact sheet on increasing the minimum wage approvingly cited a study by Arindrajit Dube, T. William Lester and Michael Reich, economists at the University of California, Berkeley, comparing employment growth across states (essentially a souped-up version of the earlier comparison of New Jersey and Pennsylvania) and concluding that differences in the minimum among states had no effect. This study has since been criticized by two other economists, David Neumark of the University of California, Irvine, and William Wascher of the Federal Reserve Board, who have long been defenders of the conventional wisdom on the minimum wage. Their arguments are technical, concluding that a better analysis of the same data supports the old view that the minimum wage destroys jobs.

What is an outsider, especially one lacking the economist’s statistical weaponry, to make of all this? I think the simplest and most persuasive explanation for radical differences in researchers’ conclusions is that the differences in employment being measured aren’t large – and that it is often hard to disentangle small effects from all the other forces affecting employment.

In the circumstances, I think it is best to focus on the studies with the most accurate, fine-grained data, and increasingly those are studies with access to payroll information from individual firms. In 2011, Barry Hirsch, Bruce Kaufman and Tatyana Zelenska used data from a chain of fast-food restaurants in Georgia. They analyzed the impact of the rise in the federal minimum wage in 2007-9, exploiting the fact that this rise had a bigger impact in low-wage regions (often rural areas) within the state. They found clear effects on earnings, but no effects on employment.

In spite of this accumulating weight of empirical evidence, it is still very common to find economists falling back on the argument that a minimum wage must cost jobs because demand curves for labor inevitably slope downward. Faced with a conflict between the evidence and 20th-century economic models, they reject the evidence rather than the theory – not an ideal template for scientific endeavor. But there are, in fact, uncomplicated theoretical reasons why the minimum wage set at the levels seen in the United States has little or no effect on employment. Hence the problem may be with the economics all too often taught as dogma.

What’s wrong with economics 101?

Bare-bones models of market behavior may assume away important elements of reality. First, the increase in total labor costs associated with a given increase in the legal minimum wage is often considerably smaller than the headline numbers suggest. As the minimum wage rises and work becomes more attractive, labor turnover rates and absenteeism tend to decline. Moreover, the cost to a worker of losing a job rises, so workers are arguably inclined to work a bit harder and need less monitoring. Absenteeism and shirking are not trivial problems in many low-wage labor markets, so one can reasonably expect to see a material reduction in the associated costs as the minimum wage rises.

Of course, an employer could voluntarily choose to pay $9 an hour if net labor costs actually fell as wages rose, and one would expect some to adopt higher wages without government prodding if it were, in fact, a win-win. So a reasonable guess here is that these offsetting economies reduce, but do not eliminate, the impact of a rise in wage rates.

Then there’s the gap between employer perception and reality. Individual employers often view a rise in wages (or other costs) with horror, assuming it will drive them out of business. But they’re all too often implicitly assuming that they alone will suffer the cost inflation – that changes in the minimum wage will leave them at a disadvantage with respect to competitors.

In reality, businesses generally try to pass a rise in the minimum wage (or sales taxes or anything else that raises the cost of doing business for all) on to their customers. So with fast food, one would expect firms to raise prices. In that circumstance, the effect on employment is only through the effect of a fall in sales of the product, which may well be minimal. Ask yourself: do you eat fewer Whoppers if the price goes up a little at the same time as the price of Big Macs (and Taco Bell Burrito Supremes) goes up a little? Do you even keep track of the price changes?
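The pass-through argument can be put as back-of-the-envelope arithmetic. The sketch below is illustrative only: the 10 percent wage rise, the 30 percent labor share of costs and the demand elasticity of 0.5 are hypothetical numbers chosen for the example, not estimates from any study, and the function name `pass_through` is my own. It assumes that the whole cost increase is passed on to customers and, generously to the pessimistic case, that every worker is paid the minimum.

```python
def pass_through(wage_rise, labor_cost_share, demand_elasticity):
    """Return (price rise, fall in sales) as fractions.

    Assumes full pass-through of the cost increase to prices and that
    all labor costs rise by `wage_rise` (hypothetical simplifications).
    """
    # Costs, and hence prices, rise by the wage rise times labor's share of costs.
    price_rise = wage_rise * labor_cost_share
    # Sales fall in proportion to the price rise, scaled by the demand elasticity.
    sales_fall = demand_elasticity * price_rise
    return price_rise, sales_fall


# Hypothetical inputs: 10% wage rise, labor is 30% of costs, elasticity 0.5.
price_rise, sales_fall = pass_through(0.10, 0.30, 0.5)
print(f"price rise: {price_rise:.1%}, fall in sales: {sales_fall:.1%}")
```

Under these assumed numbers a 10 percent wage rise becomes a price rise of about 3 percent and a fall in sales of about 1.5 percent, which is the sense in which the employment effect working through sales may well be minimal.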

Theory and reality reconciled

Actually, there is a more fundamental reason why one cannot find the job losses predicted by standard-issue economic theory. The key assumption, that labor markets are highly competitive, is often wrong. The view of the labor market that underlies Economics 101 is not one that many people would recognize. For in this hypothetical world, losing a job is no big deal because finding an identical job is no harder than discovering that the local 7-Eleven is out of Coke and driving around the block to a Circle K for a six-pack. But that is not most people’s experience of labor markets. The reality is that competition for workers is not as strong as many economists would have you believe. An employer who cuts wages will find that most employees are unhappy, but that few will just walk out the door. It thus follows that it may make economic sense for employers to pay workers less than the marginal worker adds to revenues.

This completely alters one’s expectations about how a change in the minimum wage will affect the labor market. In this world, a change will not necessarily price the marginal worker out of his job.

Consider, too, that the higher minimum will increase the supply of labor, so the firm may actually find it easier to recruit workers. The bottom line: if one drops the assumption that the labor market is fully competitive, an increase in the minimum wage can lead to a decrease or an increase in total employment. The direction can only be discovered through observation. And as we’ve seen, the empirical evidence does not suggest much effect on employment at the levels of the minimum wage seen in the United States.
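A minimal sketch of this reasoning, assuming the simplest textbook case of a single wage-setting employer with linear labor supply and a linear marginal revenue product of labor. The function name `employment` and the parameters `a`, `b`, `c` and `d` are hypothetical values chosen to give round numbers, not estimates of any real labor market.

```python
def employment(min_wage, a=2.0, b=1.0, c=20.0, d=1.0):
    """Employment chosen by one wage-setting employer facing a wage floor.

    Hypothetical linear model (illustrative parameters only):
      labor supply:                      w   = a + b * L
      marginal revenue product of labor: MRP = c - d * L
    """
    # With no floor, hiring one more worker means raising everyone's wage,
    # so the marginal cost of labor is a + 2*b*L; the employer stops where
    # that equals the marginal revenue product.
    l_free = (c - a) / (2 * b + d)
    w_free = a + b * l_free
    if min_wage <= w_free:
        return l_free  # the floor does not bind

    # A binding floor: employment is the smaller of the number of workers
    # willing to work at the floor and the number worth hiring at the floor.
    l_supply = (min_wage - a) / b
    l_demand = max((c - min_wage) / d, 0.0)
    return min(l_supply, l_demand)


for wage_floor in (7, 9, 11, 13, 15):
    print(wage_floor, employment(wage_floor))
# 7 6.0
# 9 7.0
# 11 9.0
# 13 7.0
# 15 5.0
```

In this stylized example the employer would pay 8 and hire 6 workers if left alone. A floor of 9 or 11 raises employment because more people are willing to work, while a floor of 15 pushes it below the starting point. Which range a real minimum wage falls in is exactly what theory alone cannot say.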

Although many people find the stylized account of how labor markets function that’s presented here to be credible for skilled workers, they still doubt it is relevant for minimum-wage workers – the archetypal teen mom flipping burgers or bussing tables. Surely, the argument goes, there are so many potential employers for this sort of labor that one should be able to switch jobs easily. But the reality that some low-skill openings go begging actually tells us that the constraint on employment may be as much labor supply as labor demand.

Economists often have a blind spot on this point. Indeed, I am baffled by their degree of resistance. For example, last year Christina Romer, a former chairwoman of President Obama’s Council of Economic Advisers (and an analyst with impeccable credentials as a champion of the poor), wrote critically of the proposal to raise the minimum wage, arguing that competition between employers was sufficiently robust to push wages close to the marginal product of labor. She seemed trapped in the view that the only exceptions were cases in which large employers dominated a local market – say, a coal mine in a remote corner of Appalachia. I believe that, in most circumstances, the market power of employers derives from the fact that it’s hard for workers to change jobs even when there are alternative employers in abundance.

Consider an irony. The financial crisis has rightly shaken the beliefs of many economists that financial markets can do no wrong because they are disciplined by competition. But faith in the competitive nature of labor markets seems unshakable in the teeth of evidence to the contrary. Markets (labor markets as well as financial markets) need to be regulated to work well, and the minimum wage is a legitimate weapon in the regulatory arsenal. Next time you read that minimum wage hikes inevitably destroy jobs – that you don’t need an econometrician to tell which way the labor market blows – remember that economic theory is no better than the veracity of the assumptions on which it rests.

This article is based on a paper from the Milken Institute Review.

Note: This article gives the views of the author, and not the position of USApp – American Politics and Policy, nor of the London School of Economics.

_________________________________________

Alan Manning – LSE Economics

Alan Manning is professor of economics at LSE and director of the communities programme in the Centre for Economic Performance at LSE.

Perhaps the reason we’re not observing major effects is that most minimum-wage workers are service workers who have to be in situ to provide the service. The jobs left for the poor in America are not ones that can be outsourced to China, because those have already gone. So office cleaners need to be physically at the office. A (modest) increase in wages won’t much affect the price of the service, and so won’t affect demand, which is probably somewhat inelastic, but may (or may not) affect the profits of the company providing the cleaning service.