As the 2020 presidential election approaches, there is growing concern that disinformation about the electoral process may undermine the legitimacy of the election’s outcome. In new research, Brian Calfano, Richard Harknett, Gregory Winger, and Jelena Vicic surveyed nearly 9,000 Americans to determine whether messaging from Secretaries of State can counter such disinformation. They find that Secretaries of State’s attempts to correct election disinformation are generally ineffective, regardless of whether someone is a Republican or Democratic voter.

In the 2020 election, voting procedures have become a breeding ground for disinformation that targets the election process. The goal of this disinformation is not merely to mislead people, but to use election controversies to erode public confidence in the democratic process and undermine the legitimacy of the outcomes it produces. To counter such delegitimization campaigns, election officials like Secretaries of State (SoS) must run proactive campaigns against electoral disinformation. In new research, the Center for Cyber Strategy and Policy at the University of Cincinnati has tested whether these proactive efforts can counter delegitimization attacks and successfully buoy popular perceptions of election procedures.

Although the crisis communication literature stresses the importance of practices like proactive engagement and transparency, it is unclear whether such methods can counter disinformation effects when the target audience is motivated by partisanship and ideology. The dilemma election officials face may resemble the backfire effect: when given information that corrects disinformation, some people end up believing the original false claim even more strongly. Corrective efforts such as fact-checking may be ineffective when partisan beliefs drive perceptions, perhaps in part because government engagement with disinformation can lend credence to misleading claims, amplify their message, or undercut truthful sources. By engaging, SoS and other legitimate officials risk having their corrective messages lost in the information soup, reinforcing the public’s sense that electoral security is generally in doubt.

Testing whether campaigns to counter disinformation work

Our evaluation comes from a survey-embedded experiment on an opt-in national sample of 8,809 US adults, fielded October 3-5, 2020. The sample, generated by the firm Lucid, is not probability-based, but is weighted to represent the general public on race, gender, and age.

Our experiment randomly assigned subjects to one of seven conditions. The first group was a pure control that saw entertainment and non-political stories, providing a baseline. The second group saw news stories and tweets about a prominent and recurring theory that absentee ballots can be submitted for dead people. The third and fourth groups saw the same “voting dead” information, but with counter-messaging from a generic Secretary of State (SoS) office, modeled on existing communications from current election officials and using either reassurance or transparency messaging. The fifth, sixth, and seventh groups saw the same materials as groups two through four, but we replaced the generic office with a named SoS (reflecting common practice in most US states) and added a series of stories about leaked emails from the SoS. Figure 1 shows an example of the (fictitious) named SoS from the sixth group.

Figure 1

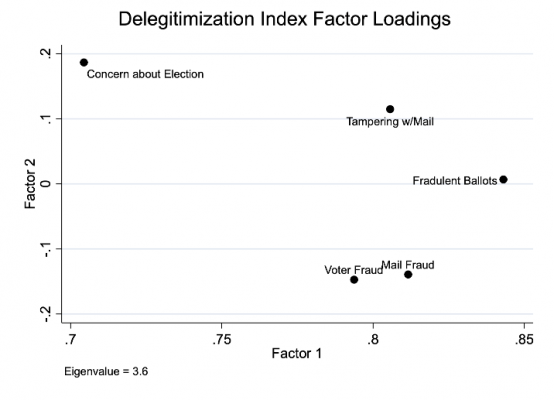

Our random assignments were not correlated with any of the subjects’ demographic characteristics, including partisanship, and responses to open-ended questions show that respondents recalled the general subject matter assigned to their group. Our survey questions, taken from a series of publicly released polls on election security issues (SSRS, Pew, Ipsos, and Gallup), focused on measures of electoral integrity, gauging 1) overall public concern about election security, 2) the ease of casting fraudulent ballots, 3) the frequency of voter fraud, 4) tampering with the mail during the election, and 5) fraud in vote-by-mail options. We scaled responses to these outcome questions from one to four, with higher values representing greater concern about the election or about voter fraud of some type (Figure 2).
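The article does not state exactly how the five outcome questions are combined into the Delegitimization Index reported in the figures. As a minimal sketch, assuming the index is an unweighted mean of the five items (each already coded 1 to 4), a per-respondent score could be computed as below. The item names are hypothetical labels for the five questions, not the survey's actual variable names.

```python
from statistics import mean

# Hypothetical labels for the five outcome questions; each response is
# assumed to be coded 1 (least concern) to 4 (most concern).
ITEMS = (
    "election_security_concern",
    "ease_of_fraudulent_ballots",
    "voter_fraud_frequency",
    "mail_tampering",
    "vote_by_mail_fraud",
)


def delegitimization_index(responses: dict[str, int]) -> float:
    """Average the five 1-4 items (an unweighted mean is an assumption)."""
    values = []
    for item in ITEMS:
        value = responses[item]
        # Reject anything outside the 1-4 coding scheme.
        if not 1 <= value <= 4:
            raise ValueError(f"{item} must be coded 1-4, got {value}")
        values.append(value)
    return mean(values)


# An illustrative (made-up) respondent, not data from the study.
respondent = {
    "election_security_concern": 3,
    "ease_of_fraudulent_ballots": 2,
    "voter_fraud_frequency": 2,
    "mail_tampering": 3,
    "vote_by_mail_fraud": 4,
}
print(delegitimization_index(respondent))  # 2.8
```

Because every item shares the same 1-to-4 range, the index stays on that range too, so a higher score can be read directly as greater concern.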

Figure 2

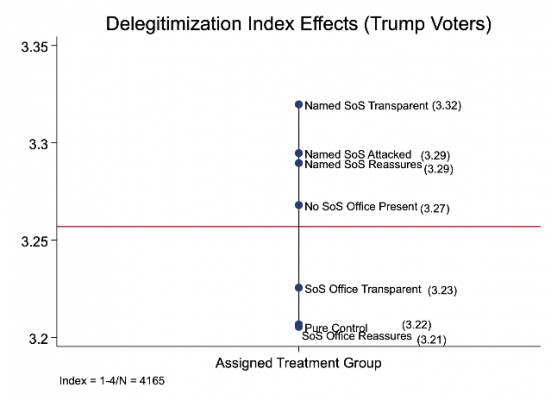

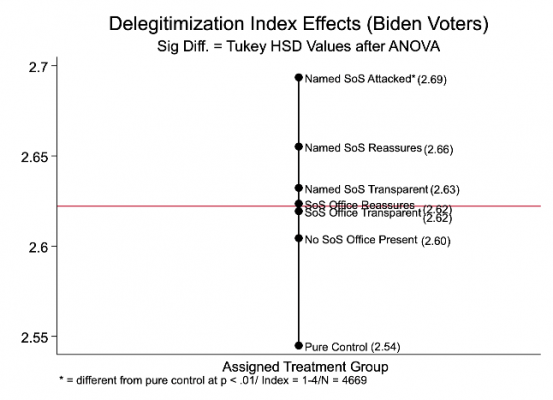

Our first test, in Figure 3, focuses on mean differences between the pure control group and the other groups. The higher mean Delegitimization Index scores, which are statistically different from the pure control, come from respondents exposed to the named Secretary of State (SoS), with the highest score among those who saw only the disinformation attack involving the named SoS. However, the three “named” groups are not statistically distinguishable from one another. Meanwhile, respondents who saw the unnamed SoS score almost as highly as those in the named conditions, but their scores are not significantly different from the pure control mean.
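The article does not name the test behind these comparisons. As an illustration of what “statistically different from the pure control” means, the sketch below assumes a Welch two-sample comparison of mean index scores, with the p-value taken from a standard normal approximation. That approximation is a simplifying assumption that is reasonable only for large groups; with 8,809 respondents split seven ways, each arm has roughly 1,250 people. The function name and the scores in the usage example are hypothetical.

```python
from math import sqrt
from statistics import NormalDist, mean, variance


def welch_difference(treated: list[float], control: list[float]) -> tuple[float, float, float]:
    """Return (mean difference, Welch t statistic, two-sided p-value).

    The p-value uses a standard normal approximation to the t distribution,
    which is only appropriate for large groups.
    """
    diff = mean(treated) - mean(control)
    # Welch standard error: each group keeps its own sample variance.
    se = sqrt(variance(treated) / len(treated) + variance(control) / len(control))
    if se == 0:
        raise ValueError("both groups have zero variance; the test is undefined")
    t_stat = diff / se
    p_value = 2 * (1 - NormalDist().cdf(abs(t_stat)))
    return diff, t_stat, p_value


# Made-up index scores for illustration only; these are not the study's data.
control = [2.0, 2.2, 2.4, 2.6, 2.8] * 200
treated = [2.2, 2.4, 2.6, 2.8, 3.0] * 200
diff, t_stat, p_value = welch_difference(treated, control)
print(f"difference={diff:.2f}, t={t_stat:.1f}, p={p_value:.3g}")
```

Welch's version is used here because it does not assume equal variances across experimental arms. A regression with group indicators, like the model the authors report, generalizes the same comparison by adding demographic controls.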

“Twitter vote button” by Gage Skidmore is licensed under CC BY SA 2.0

Figure 3

Figure 4

Figure 5

Breaking the results out by self-identified Trump and Biden supporters (including “leaners”), we see a generally similar pattern: the effects are almost the same across the groups that received the disinformation correction, and among Biden voters, the effects for those who received the correction from the named SoS are significantly different from the pure control. Note, however, the difference in y-axis values. Trump supporters score about half a point higher on the Delegitimization Index than their Biden counterparts, even though none of the Trump-supporter groups is statistically different from the pure control. In other words, corrective attempts to deal with disinformation get lost irrespective of whom people vote for, although Trump voters have higher delegitimization scores overall.

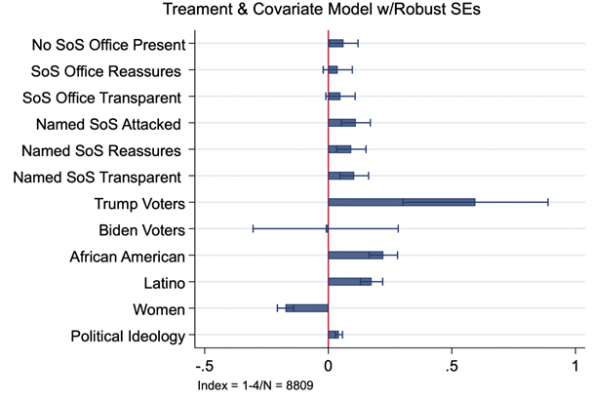

The regression model in Figure 6, which includes the experimental group and demographic variables, shows the same pattern of effects.

Figure 6 – Delegitimization Index Scores

While many Secretaries of State push back against electoral disinformation, these efforts may fail. To some extent, SoS interventions in our experiment made the situation worse, raising fraud concern scores regardless of vote choice. This speaks to the inherent difficulty government leaders face in correcting disinformation and to the underlying challenges democratic societies must address in the face of delegitimization campaigns.

Note: This article gives the views of the author, and not the position of USAPP – American Politics and Policy, nor the London School of Economics.

Shortened URL for this post: https://bit.ly/3jA3Ywa

About the authors

Brian R. Calfano – University of Cincinnati

Brian Calfano is an Associate Professor of Political Science and Journalism at the University of Cincinnati. He has 50 peer-reviewed journal articles to his credit, and is the co-author of God Talk: Experimenting with the Religious Causes of Public Opinion (Temple University Press, 2013), A Matter of Discretion: The Political Behavior of Catholic Priests in the U.S. and Ireland (Rowman and Littlefield, 2017), and Human Relations Commissions: Relieving Racial Tensions in the American City (Columbia University Press, 2020).

Richard Harknett – University of Cincinnati

Dr. Richard J. Harknett is Professor of International Relations and Head of the Department of Political Science at the University of Cincinnati. He is the author of over forty publications in the area of international relations theory and international security studies, and is the Chair of the Center for Cyber Strategy and Policy at the University of Cincinnati and Co-Chair of the Ohio Cyber Range Institute.

Gregory Winger – University of Cincinnati

Dr. Gregory H. Winger is an Assistant Professor in the Political Science Department at the University of Cincinnati. He specializes in cyber security, international security, and U.S. foreign policy. His research examines trust-building processes, in particular how collaborative activities like defense diplomacy have been used to facilitate cooperation on emerging security issues.

Jelena Vicic – University of Cincinnati

Jelena Vicic is a PhD candidate at the University of Cincinnati and an Ohio Cyber Range Institute Pre-doctoral Fellow.