With advances in artificial intelligence (AI), it seems that the range of economic activities that can and will be automated is now limitless. Not for the first time, society is both thrilled by and fearful of the opportunities. The 1950s were the “Push Button Age”, the 1960s brought the “Information Age” and the 1970s the time of the industrial robots, followed by a variety of other “ages”. However, will we really see a time when everything is finally automated, or just a continuation of the waves of automation that have been going on for hundreds of years, waves that so far have always left humans with work to do? The answer lies in part in understanding the basic principles behind automation, principles found in history rather than hype and explained in my recent paper on automation in banking.

Tools – Human tool use began even before our species of humans existed. Even today, tool use is not unique to our species, and a variety of animals from the great apes to parrots use naturally occurring objects as hammers, drills, straws and many other types of tools. What identifies our species as having a special talent for automation has been finding ways to make tools that can be driven by forces other than our own physical strength.

Powered tools – Practical automation dates back only a few thousand years, with devices such as flour mills powered by wind or water. Early forms of automation were not restricted to the creation of tangible outputs such as flour. Almost as old as the water mill is the water clock, which provided data, i.e. the time of day. For most of recorded history, automation was confined to a small set of activities, powered by wind, water or animals. It was only with the industrial revolution that more sophisticated methods of generating power (i.e. steam engines) were used for automation and that more sophisticated goods, such as fabrics, were produced by automated machines.

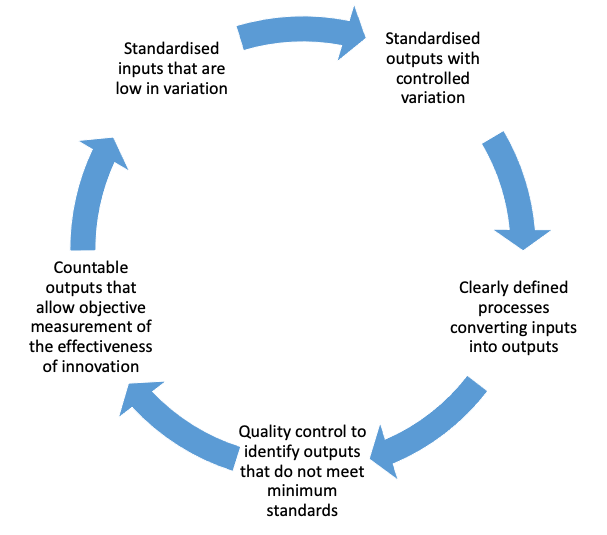

Division of labour – The acceleration of automation through the 18th–20th centuries was based on more than steam engines and the creation of more complicated machines. The concept of “division of labour” was identified by Adam Smith in “The Wealth of Nations” (1776). He used the example of a pin factory where manufacture was broken down into smaller and simpler tasks. Division of labour increased productivity through specialisation of labour but it also created tasks that were easier to automate.

Interchangeable parts – At the beginning of the 19th century, firearms manufacturers started creating weapons from parts that were easily interchangeable between guns of the same type. Today that may seem obvious, but it depended on a great deal of work to increase the standardisation of parts. Without that standardisation, skilled craftsmen were needed to adjust parts in order to fit them together. Increasing standardisation meant much less skill was required to assemble products from their constituent parts. The resulting reduction in the complexity of assembly also made it easier to automate the process. It took decades, though, to standardise parts in arms manufacture sufficiently to make interchangeable parts cost-effective, because it required major innovations in measuring tools and machine tools in order to reduce tolerances, i.e. variations in the size and quality of parts.

Assembly lines – New forms of power for machinery (such as electric motors) made it possible to create assembly lines that linked together all the subtasks required for assembling even the most complicated goods. The Ford Motor Company assembly lines were dependent on all the innovations described earlier, but Henry Ford also made use of another technique that could be better used with standardised inputs and outputs: experimentation (Hounshell, D. 1985, pp. 253-254).

The first attempt at line assembly in August 1913 was crude but phenomenally successful in increasing productivity. . . . Whereas the man-hour figure had been slightly under twelve and a half hours with static assembly, the first assembly line . . . reduced the figure to five and five-sixths man-hours. Experiments continued. On October 7, 140 Assemblers had been placed along a 150-foot line. Man-hour figures dropped to slightly less than three hours per chassis. . . . After Christmas, 191 men worked along the 300-foot line but pushed the assembly along by hand. Man-hour time increased rather than dropped. Sixteen days later, the engineers had installed a line on which the car was carried along by an endless chain. In the next four months, lines were raised, lowered, speeded up, slowed down. Men were added and taken off. . . . By the end of April 1914 . . . chassis assemblies worked out to ninety-three man-minutes.

If costs, time taken and manual effort involved were reduced as a result of an experiment, it was clear it had succeeded. Innovations that did not work were simply rejected. Over time, production lines were implemented across a whole range of industries, but a further spur to both productivity and the degree of automation came from improvements in design and costs of sensors and control systems. Improved control systems meant machines could act more autonomously, and fewer people were required to monitor production lines.
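The arithmetic behind those experiments can be laid out in a few lines. The sketch below is illustrative only: it uses the man-hour figures quoted above from Hounshell, treating "slightly under" values as their stated upper bounds, and it leaves out the hand-pushed 300-foot line because the quote gives no figure for it. The function names and the keep-or-reject rule are assumptions made for this example, not a description of how Ford's engineers recorded their results.

```python
# Man-hours per chassis as quoted from Hounshell (1985). Two figures are
# described as "slightly under/less than", so results are approximate.
STAGES = [
    ("static assembly", 12.5),
    ("first line, August 1913", 5 + 5 / 6),
    ("150-foot line, 7 October 1913", 3.0),
    ("chain-driven line, April 1914", 93 / 60),
]


def keep_change(before_hours, after_hours):
    """Hypothetical decision rule: keep a change only if man-hours fall."""
    return after_hours < before_hours


def reduction_pct(before_hours, after_hours):
    """Percentage fall in man-hours from one figure to another."""
    return 100 * (before_hours - after_hours) / before_hours


def summarise(stages):
    """For each stage after the first, report whether it beat the previous
    stage and how far man-hours fell relative to static assembly."""
    baseline = stages[0][1]
    rows = []
    for (_, before), (label, after) in zip(stages, stages[1:]):
        rows.append(
            (label, keep_change(before, after),
             round(reduction_pct(baseline, after), 1))
        )
    return rows


for label, kept, pct in summarise(STAGES):
    print(f"{label}: keep={kept}, reduction vs static={pct}%")
```

On these figures, each stage improves on the one before it, and the cumulative fall in man-hours against static assembly is roughly 53%, 76% and 88%.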

This provides us with the basic set of principles for effective automation (see Figure 1).

Figure 1. Principles for effective automation

In 1936, Alan Turing wrote a paper that described a ‘universal computing machine’. Unlike previous mechanical devices used for calculations, it was intended to automate calculations based on a set of instructions stored in electromechanical memory or, as we would call it today, a computer program. The truly novel aspect of universal computing machines was the possibility they created of using different programs to automate different types of calculations. The first of these stored-program computers was built in the 1940s. All computers since have been based on the idea of a universal machine that could, in principle, be programmed to perform any task, subject to having sufficient memory and processing power. Attach a computer to physical interfaces and control units and you have a programmable machine, otherwise known as a robot.

Until recently, the set of instructions that make up a program, i.e. the algorithms, was always designed by people. This meant computers and robots struggled with situations that had not been anticipated by the programmer. This included work such as translation or analysing cardiograms. In terms of automation fundamentals, the problem was too much variation of inputs. The recent progress in automating this type of work has had as much to do with cheaper computing power and the availability of huge amounts of data as with technical advances in forms of AI such as “Deep Learning.” While the value is real, it is all too easy to forget the limitations and imagine a near future where machines do everything. The advance of the machines has some bottlenecks. AI techniques have been shown to struggle or just fail because of:

- A lack of understanding of context or any real intelligence

- Inputs, in the form of historic decisions, that were based on biased or flawed behaviour

- Limitations in even more highly variable tasks such as creating entirely new computer programs

Perhaps an even bigger problem during automation crazes is losing sight of the most fundamental principle: that automation is not an end in itself but an option that makes sense if it can be demonstrated to be more cost-effective than using humans. Henry Ford introduced considerable machinery into his manufacturing process, but he did not try to automate away people just for the sake of automation. This was a lesson ignored by Elon Musk, who learnt the hard way in his attempts at “hyper-automating” the Tesla Model 3 plant. In 2018 he conceded, “Yes, excessive automation at Tesla was a mistake. To be precise, my mistake. Humans are underrated.” Well, some humans are underrated.

♣♣♣

Notes:

- This blog post expresses the views of its author(s), not the position of the Center for Evidence-Based Management, LSE Business Review or the London School of Economics.

- Featured image: Literary Digest 1928-01-07 Henry Ford Interview. Photographer unknown, Public domain