Do institutions and academics have a free choice in how they use metrics? Meera Sabaratnam argues that structural conditions in the present UK Higher Education system inhibit the responsible use of metrics. Funding volatility, rankings culture, and time constraints are just some of the issues making it highly improbable that the sector is capable of enacting the approach that the Metric Tide report has called for.

This is part of a series of blog posts on the HEFCE-commissioned report investigating the role of metrics in research assessment. For the full report, supplementary materials, and further reading, visit our HEFCEmetrics section.

Last week, the long-awaited ‘Metric Tide’ report from the Independent Review of the Role of Metrics in Research Assessment and Management was published, along with appendices detailing its literature review and correlation studies. The main take-away is: IF you’re going to use metrics, you should use them responsibly (NB NOT: You should use metrics and use them responsibly). The findings and ethos are covered in the Times Higher and summarised in Nature by the Review Chair James Wilsdon, and further comments from Stephen Curry (Review team) and Steven Hill (HEFCE) are published. I highly recommend this response to the findings by David Colquhoun. You can also follow #HEFCEMetrics on Twitter for more snippets of the day. Comments by Cambridge lab head Professor Ottoline Leyser were a particular highlight.

I was asked to give a response to the report at the launch event, following up on the significance of the widely endorsed submission that Pablo and I made to the review. I am told that the event was recorded on video and audio, so I will add links when they show up. Until then, here is a short summary of the main points I made:

Read The Whole Report

The executive summary is good, but the whole report is a must-read if you are interested in having a deeper understanding of research culture, management issues and the range of information we have on this field. This should be disseminated and discussed within institutions, disciplines and other sites of research collaboration.

Process

I thought the overall report reflected a very balanced, evidence-led and wide-ranging review process which came to sound conclusions. I don’t say this lightly, having had serious questions about at least one previous policy review process in the sector. And I very much hope that a similar process is employed in terms of bashing out the questions over the mooted Teaching Excellence Framework (on which, no doubt, more posts to come).

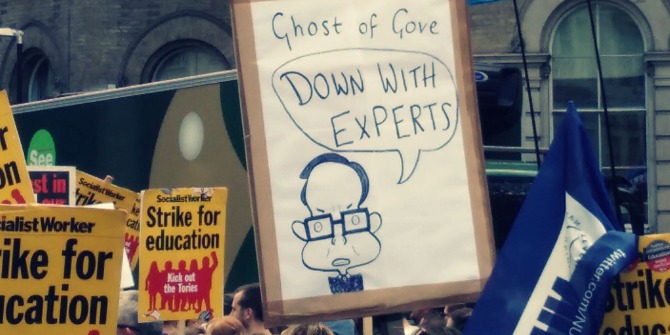

I mentioned that although the Review was well executed, it is not clear that it was necessary to spend the funds on it, given the extensive and recent research on the issue. Making policy on a ‘supply-driven’ basis (because the then Minister had met some altmetrics enthusiasts) rather than a ‘demand-driven’ one (based on what we think we want out of publicly funded research, and where it’s not happening) was a fundamentally misguided way to approach HE policy. We don’t want to keep revisiting questions at the behest of fads or soundbites; rather, the best policy is made with a clear picture (based on evidence rather than political instinct) of what is actually going wrong or right.

No Metrics-Only Future?

As Colquhoun also notes, there is a key sentence buried on p.131:

No set of numbers, however broad, is likely to be able to capture the multifaceted and nuanced judgements on the quality of research outputs that the REF process currently provides (Report, p.131).

So, this is not only a case of waiting for the right indicators to eventually show up, but (as Stephen Curry notes) a sense that many of the things which are good about research are not amenable to capture in indicators based on citations.

Image credit: Drowning by Numbers by Jorge Franganillo (Flickr, CC BY)

This is an extension of the argument made in our previous paper, where we noted that citation-based indicators might indicate something about ‘significance’ but were unlikely to capture either ‘originality’ or ‘rigour’. This particular argument is also compatible with the findings of the Review’s correlational analysis, which showed a generally weak correlation between the results of REF2014 peer review and citation-based indicators.

Responsible Metrics: But Does the UK HE System Allow This To Happen?

In his intervention made directly prior to mine, David Sweeney, Director of Research, Education and Knowledge Exchange at HEFCE, noted that the findings of the review had reflected much of HEFCE’s thinking on the issue and argued that institutions and academics had a free choice in how they used metrics.

For the most part my remarks directly challenged this suggestion that institutions and academics were freely self-governing in the process of research assessment, arguing instead that many structural conditions in the present UK HE system would inhibit the responsible (meaning expert-led, humble, nuanced, supplementing but not substituting careful peer review, reflexive) use of metrics. A non-exhaustive list includes:

- Funding volatility: The attempt to create ‘excellence’ through competition means that universities are now subject to funding volatility, which makes them risk averse. Under such regimes, a reliance on ‘safer’ metric short-cuts is a way for them to manage and re-distribute risk. This is a direct consequence of the ways in which public funding is now delivered to universities in the UK.

- Lay oversight: The role of lay governing bodies and non-academics in managing and overseeing the university produces demands for easily digestible numerical representations of all kinds of research and teaching activity through spreadsheets and benchmarks. In managing funding volatility, they are more likely to prioritise numerical indicators over other kinds of judgement.

- Rankings culture: The broader context of a culture driven in all areas by rankings and competition (including VC pay) ideologically prioritises that which can be demonstrated numerically, producing pressures further down the chain to deliver on widely used metrics.

- Non-expert internal review: Colleagues within the institution who lack the relevant expertise to judge the work’s originality, significance and rigour will tend to default to numerical indicators as a guide if these are increasingly made available to them.

- Time: Everyone in HE is currently pushed for time as we try to manage multiple competing demands and responsibilities, but specific time pressures apply to REF panels, hiring panels and promotion committees.

- Lack of similar experiences by those wielding the judgements: For early career researchers especially, there is a sense that those currently in senior positions have not been subject to the same kinds of pressure, so they are making those judgements without a commensurate experience of being judged in that way.

These issues come together to create structural conditions in which the ‘responsible use of metrics’ correctly advocated by the Review becomes highly improbable. The question is, can we change the broader systemic conditions and cultures to allow us to pursue this more responsible approach? And how?

This piece originally appeared on the Disorder of Things blog and is reposted with the author’s permission.

Note: This article gives the views of the author, and not the position of the Impact of Social Science blog, nor of the London School of Economics. Please review our Comments Policy if you have any concerns on posting a comment below.

Meera Sabaratnam (@MeeraSabaratnam) is currently Lecturer in International Relations at the School of Oriental and African Studies, University of London. Her work is primarily on issues of Eurocentrism and postcolonialism in international politics, with particular reference to the ‘liberal peace’. She received her PhD from the International Relations Department of the LSE in early 2012, and is a contributor to the global politics group blog The Disorder Of Things.
