In less than a decade the impact agenda has evolved from being a controversial idea to an established part of most national research systems. Over the same period the conceptualisation of research impact in the social sciences and the ability to create and measure research impact through digital communication media has also developed significantly. In this post, Ziyad Marar argues that it is time to reinvigorate the debate on demonstrating social science research impact and to develop a language for talking about research impact that is unique to the social sciences.

Over the past five years, discussion of the impact of social science research, and of how that impact is measured and understood, has become increasingly complex, controversial and contested. The situation can be succinctly summarised in three quotes:

As sociologist and Microsoft Research principal researcher Duncan Watts recently observed: “Measurement is a tremendous driver of science.” When you measure things, your conception of the issues – and therefore the solutions – changes. “Measurement,” he adds, “casts things in a completely different light.”

On the other hand, as the quote often attributed to Einstein goes, “not everything that can be counted counts, and not everything that counts can be counted.”

And finally, we have Goodhart’s law (named after economist Charles Goodhart), often expressed as “when a measure becomes a target, it ceases to be a good measure.”

In other words, measuring and demonstrating the impact of social science research is political. First, measurement and the attribution of value can shape research practices themselves. Second, the value these measures describe is inherently limited and, if misapplied, can lead to unintended consequences. SAGE Publishing has often played a role in this conversation, particularly as a convener of expert voices.

Five years of progress

Five years ago, we published The Impact of Social Sciences with the LSE, the culmination of a three-year project that set out simply to begin understanding the impact of the social science enterprise in the UK. The following year, we published The Metric Tide, an independent review of the role of metrics in research assessment. Important though these studies were, in some ways they represented only the beginning of this conversation. Since The Metric Tide review was announced in April 2014 by the then Minister for Universities and Science, David Willetts, the emphasis on impact has only grown: the next Research Excellence Framework (REF 2021) will increase the weight it places on impact, and entirely new impact measures, such as the Knowledge Exchange Framework (KEF), have been introduced.

In the United States, claims that social science has no real impact have been used repeatedly in political attacks that attempt to cut social science funding – even as social science was being deployed effectively in questions of finance and national security that obsessed these same critics.

The National Science Foundation now also requires “broader impacts” to be explicit in the research it funds. Foundations increasingly require clear and direct demonstrations of impact from their grantees. Even the Pentagon has gotten into the game: its Defense Advanced Research Projects Agency has tasked social scientists to create an artificial intelligence system, known as SCORE, to quantitatively measure the reliability of social science research and “thereby increase the effective use of [social and behavioural science] literature and research to address important human domain challenges.”

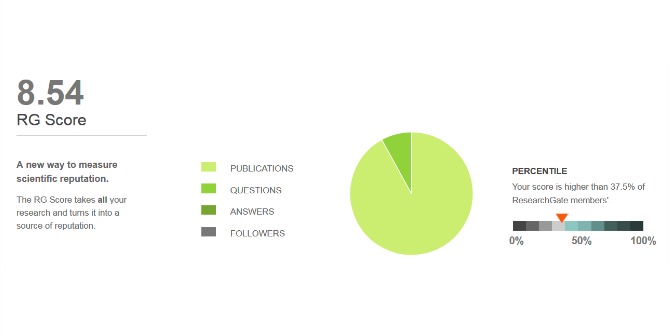

At the same time, an explosion of data has blown open new doors for social and behavioural research and pointed to many tools that could measure its impact. In 2014, Altmetric had only existed for two years; now this service and a range of other digital tools such as those provided by Clarivate and Google Scholar have become firm features in the research landscape.

However, despite these advances, a key unresolved issue in this debate is that no consensus has emerged, within the social sciences or beyond, on how social science impact can be demonstrated. This void has allowed other disciplines to set the agenda for the social sciences. A new survey of faculty at four U.S. universities from the Association of College & Research Libraries (ACRL) finds that social science researchers are shifting their conceptions of demonstrating impact toward “ways more aligned with the Sciences and Health Sciences” (and away from those favoured in the arts and humanities).

This is problematic, since impact measurements appropriate for astrophysics will not read across well to anthropology. Writing in Research Evaluation, the Italian National Research Council’s Emanuela Reale and her colleagues argue that the predominant methods used by natural scientists to demonstrate impact “tend to underestimate” the value of social science research because of time lags, methodological variety, and social science’s interest in new approaches rather than solely iterative ones.

Meanwhile, the same ACRL survey finds that across the academy, faculty are generally unaware of ways to measure impact apart from citations (presumably in high Impact Factor journals). This narrow understanding misses not only the value of alternative metrics but also impact that arises outside a scholar’s discipline or beyond a single paper. Reale and her colleagues noted a similar “strong orientation of the scholars’ efforts towards considering scientific impact as a change produced by a single (or a combination of) piece(s) of research, with a limited interest in deepening conditions of the research processes contributing to generating an impact in the interested fields.” This evidence strongly suggests the need to return to earlier discussions on demonstrating social science impact, but this time in a way that includes the scholarly community at large, as well as those outside it.

Reopening the debate

Taking this into account, we are looking to reignite some of these debates and stimulate fresh thinking on the impact of social science. Earlier this year, SAGE assembled a working group to share ideas for helping scholars navigate the slippery concept of impact and the shortcomings of established metrics. As part of this effort, we have produced a white paper, now available, that highlights the group’s findings. The report maps out stakeholder categories, defines key terms and questions, puts forward four models for assessing impact, and presents a list of 45 resources and data sources that could help in creating a new impact model. It also sets out imperatives and recommended actions to improve the measurement of impact, including:

- recognition from the community that new impact metrics are useful, necessary, and beneficial to society;

- establishing a robust regime of measurement that transcends but does not supplant literature-based systems;

- coming to a shared understanding that although social science impact is measurable, as STEM impact is, its impact measurements are unlikely to mirror STEM’s;

- and creating a global vocabulary, taxonomy, global metadata, and a global set of benchmarks for talking about measurement.

To keep the conversation alive for the longer term, we have also developed a new impact section of the SAGE-sponsored community site Social Science Space. (This space is also being used to gather ideas, amplify diverse opinions, and engage in debate about impact with global actors working on the topic.) We are also hosting events to share progress and crowdsource ideas, kicking off with a May event in Washington, DC and a June event in Vancouver, Canada.

About the author

Ziyad Marar is President of Global Publishing at SAGE, where he has worked since 1989. He was appointed Editorial Director in 1997, Deputy Managing Director in 2006, and Global Publishing Director in 2010. In 2016, Marar was promoted to his current role, where he has overall responsibility for SAGE’s publishing strategy. In recent years at SAGE, Ziyad has also focused on supporting the social sciences more generally. He has spoken and written on this theme in various international contexts and in early 2015 was appointed to the board of the Campaign for Social Sciences (CfSS). He also sits on the board of trustees for the UK academic news site, The Conversation. He tweets @ZiyadMarar

Image credit: Shalaka Gamage via Unsplash (Licensed under a CC0 1.0 licence).

Note: This article gives the views of the author, and not the position of the LSE Impact Blog, nor of the London School of Economics. Please review our comments policy if you have any concerns on posting a comment below.

High impact factors are taking the publication industry down as more and more scholars prefer to target high impact journals, playing a waiting game, instead of sending their papers to lesser known ones. So, there is a financial incentive for journal publishers to downplay impact factors or risk seeing their products limited to a few journal outlets.