AI and algorithmic decision-making tools already influence many aspects of our lives and are likely to become increasingly embedded within businesses and governments. Drawing on recent research and examples from across the social sciences, Frederic Gerdon and Frauke Kreuter outline where and how social science is vital to the ethical use of algorithmic decision-making systems.

We don’t have to look into the future to be concerned about the negative social impacts of systems based on “artificial intelligence”. Algorithmic decision-making (ADM) systems are already affecting people’s lives and are used to make decisions in areas ranging from criminal justice and credit provision to attempts to identify social benefits fraud. While these systems can facilitate various tasks in work and daily life, academic and journalistic investigations have repeatedly revealed adverse social impacts. These negative impacts are expected to grow as AI becomes more prevalent, which underscores the importance of paying attention to its social implications. Social scientists are particularly well placed to research such impacts, given their extensive expertise in societal matters and the wide range of methods available to them for conducting impact assessments.

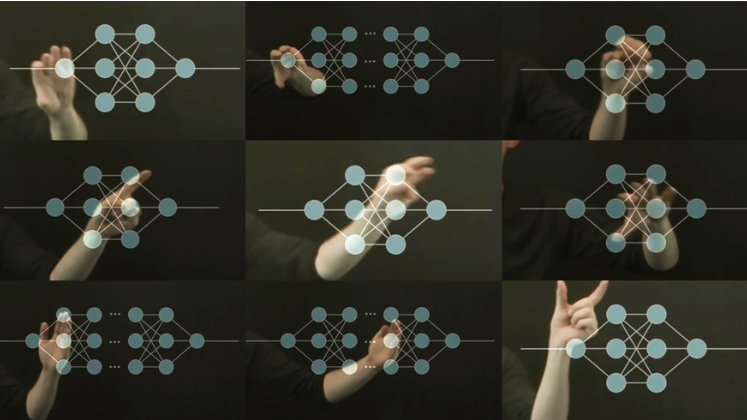

Algorithmic decision-making systems are complex and involve multiple actors and processes. Building on a “big data” process model, we recently proposed breaking the ADM process down analytically into three major components that require assessment (see Figure 1): (1) the data basis used, (2) data preparation and analysis, and (3) the actual implementation of a system and the consequences of algorithm-based decisions.

Each of these components holds the potential for unfair, discriminatory, or otherwise undesirable social impacts. Such impacts can stem from technical flaws or limitations but, importantly, also from decisions made by the humans involved in the process. Below, we explore each of the three components and highlight where social science can contribute expertise.

Fig. 1: Components of the ADM process, based on a “big data” process model.

Data basis

Data serves as the foundation for training (machine learning) algorithms. Since these algorithms rely on data to make predictions about individuals, it is crucial to select appropriate data and to ensure that it is of high quality. Social science fields such as survey research have a long history of evaluating measurement quality and data representativeness, especially with regard to the inclusion of different demographic groups. However, even when data quality is high from a technical perspective, the data source may still be problematic. This is particularly true when the data reflects unfair biases that are already prevalent in society. Social scientists have domain knowledge about such historical biases (e.g., against specific demographic groups), which allows them to help identify and mitigate these biases.
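To make the idea of checking representativeness a little more concrete, here is a minimal sketch that compares each group’s share in a training dataset with an external population benchmark (for example, census figures). The group labels, counts, benchmark shares and function names are hypothetical placeholders chosen for illustration; they are not data or code from the authors’ study.

```python
from collections import Counter


def group_shares(groups):
    """Return each group's share of all records in `groups`."""
    counts = Counter(groups)
    total = sum(counts.values())
    return {group: n / total for group, n in counts.items()}


def representation_gaps(sample_groups, population_shares):
    """Sample share minus benchmark population share, per benchmark group.

    A group that never appears in the sample gets a share of 0, so
    complete non-coverage shows up as a large negative gap.
    """
    sample = group_shares(sample_groups)
    return {g: sample.get(g, 0.0) - p for g, p in population_shares.items()}


# Hypothetical training data: one demographic group label per record.
training_groups = ["A"] * 70 + ["B"] * 25 + ["C"] * 5
# Hypothetical population benchmarks (e.g. from a census); shares sum to 1.
population = {"A": 0.55, "B": 0.30, "C": 0.15}

for group, gap in sorted(representation_gaps(training_groups, population).items()):
    print(f"group {group}: {gap:+.2f}")
```

In this made-up example, group C makes up 5% of the records but 15% of the benchmark population, so it is clearly underrepresented. A check like this only addresses who is in the data; it cannot reveal whether the recorded outcomes themselves reflect historical bias, which is where substantive domain knowledge comes in.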

Data preparation and analysis

Preparing the data for analysis involves numerous decisions about recoding, grouping, deleting, and otherwise transforming variables and values. Such decisions can affect the outcomes of an analysis. Furthermore, when training the algorithm, it is vital to define how the algorithm should arrive at “fair” predictions. Developers or decision-makers have to choose from a wide range of available fairness definitions to ensure that the algorithm does not disproportionately disadvantage certain (demographic) groups compared to others. The most appropriate fairness definition may depend on the context and should also take into account the views of the public, i.e., the individuals potentially affected by such systems. Social science can contribute knowledge of social contexts and of data collection methods (such as focus groups, qualitative interviews, and quantitative surveys) to address both of these points.
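Why the choice of fairness definition matters can be illustrated with a small sketch. It computes two definitions that are common in the fairness literature, demographic parity (equal rates of positive predictions across groups) and equal opportunity (equal true positive rates among people whose actual outcome is positive). The post does not say which definitions it has in mind; these two, the function names and the data below are assumptions chosen purely for illustration.

```python
def positive_rate(preds):
    """Share of cases the model flags as positive (prediction == 1)."""
    return sum(preds) / len(preds)


def true_positive_rate(preds, labels):
    """Share of truly positive cases (label == 1) that the model flags.

    Assumes the group contains at least one positive case.
    """
    flagged = [p for p, y in zip(preds, labels) if y == 1]
    return sum(flagged) / len(flagged)


def fairness_gaps(preds, labels, groups, a, b):
    """Differences between groups a and b under two fairness definitions."""
    def subset(g):
        idx = [i for i, grp in enumerate(groups) if grp == g]
        return [preds[i] for i in idx], [labels[i] for i in idx]

    preds_a, labels_a = subset(a)
    preds_b, labels_b = subset(b)
    return {
        # Demographic parity: equal rates of positive predictions.
        "demographic_parity_gap": positive_rate(preds_a) - positive_rate(preds_b),
        # Equal opportunity: equal true positive rates among actual positives.
        "equal_opportunity_gap": true_positive_rate(preds_a, labels_a)
        - true_positive_rate(preds_b, labels_b),
    }


# Hypothetical predictions, true outcomes and group memberships.
preds = [1, 0, 1, 0, 1, 1, 0, 0]
labels = [1, 1, 0, 0, 1, 0, 0, 0]
groups = ["A"] * 4 + ["B"] * 4

print(fairness_gaps(preds, labels, groups, "A", "B"))
```

With these invented numbers, both groups receive positive predictions at the same rate (a demographic parity gap of 0), yet actual positives in group A are flagged only half as often as in group B (an equal opportunity gap of -0.5). The same predictions count as fair under one definition and unfair under the other, which is why choosing a definition is a substantive, context-dependent decision rather than a purely technical one.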

Implementation

Examining the actual effects of a decision on individuals and social groups is a fundamental endeavour of social science. In some cases, decision-makers interact with algorithmic recommendations, which makes it crucial to understand when they adopt or override those recommendations, a question studied in the field of human-computer interaction. Moreover, social scientists can study how (particularly important) algorithmic decision-making systems affect groups of individuals and society at large, and can even use simulation techniques to try to predict how many small individual decisions could add up to larger effects at the level of social groups. Additionally, it is vital to assess whether the performance and fairness of an ADM system are higher or lower than those of alternative decision-making approaches (such as human decisions without any algorithmic assistance) for the specific decision task. Identifying such causal effects of ADM compared with other approaches may require (ethically conducted) experiments or other sophisticated study designs.
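As a minimal illustration of comparing an ADM system with an alternative decision-making approach, the sketch below evaluates two hypothetical sets of decisions, one from an algorithm and one from unassisted humans, on the same cases. It reports overall accuracy and the false positive rate within each group. The data, the choice of metrics and the function names are assumptions made for illustration, not the authors’ method.

```python
def accuracy(decisions, outcomes):
    """Share of cases where the decision matches the observed outcome."""
    return sum(d == y for d, y in zip(decisions, outcomes)) / len(outcomes)


def false_positive_rate(decisions, outcomes):
    """Share of negative cases (outcome == 0) that were wrongly flagged.

    Assumes the group contains at least one negative case.
    """
    flagged = [d for d, y in zip(decisions, outcomes) if y == 0]
    return sum(flagged) / len(flagged)


def compare_regimes(regimes, outcomes, groups):
    """Accuracy and per-group false positive rates for each decision regime."""
    report = {}
    for name, decisions in regimes.items():
        fpr_by_group = {}
        for g in sorted(set(groups)):
            idx = [i for i, grp in enumerate(groups) if grp == g]
            fpr_by_group[g] = false_positive_rate(
                [decisions[i] for i in idx], [outcomes[i] for i in idx]
            )
        report[name] = {
            "accuracy": accuracy(decisions, outcomes),
            "fpr_by_group": fpr_by_group,
        }
    return report


# Hypothetical cases: observed outcomes, group membership, and the decisions
# an ADM system and unassisted human decision-makers made on the same cases.
outcomes = [1, 0, 0, 0, 1, 0] * 2
groups = ["A"] * 6 + ["B"] * 6
regimes = {
    "algorithm": [1, 0, 0, 0, 1, 0] + [1, 1, 1, 0, 1, 0],
    "human": [1, 1, 0, 0, 0, 0] + [0, 1, 0, 0, 1, 0],
}

for name, result in compare_regimes(regimes, outcomes, groups).items():
    print(name, round(result["accuracy"], 2), result["fpr_by_group"])
```

In this made-up example the algorithm is more accurate overall, but its false positive rate differs sharply between groups, whereas the human decisions are less accurate yet err at the same rate for both groups. A purely descriptive comparison like this cannot establish causal effects (the observed outcomes may themselves depend on the decisions taken), which is one reason experimental or other careful study designs may be needed.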

The involvement of social scientists is key to developing and implementing (critical) ADM systems and to assessing their potential negative effects on society. We have highlighted areas in which social scientists can contribute their substantive and methodological knowledge to identify potential sources of social impacts along the whole ADM process. While such research is already being conducted, industry and the public sector could benefit from drawing on social scientific expertise more routinely when developing and implementing ADM systems. However, this involvement does not justify the use of ADM for decisions that should never be taken by an algorithm (alone). Still, in areas where algorithmic decision-making appears acceptable in principle, social science can help make these systems more equitable. Society benefits from such contributions, which not only can mitigate unfairness but could also increase a system’s acceptance and thereby allow us to better harness the positive effects of algorithmic decision-making.

This post draws on the authors’ article, written with Ruben Bach and Christoph Kern, “Social impacts of algorithmic decision-making: A research agenda for the social sciences”, published in Big Data & Society.

The content generated on this blog is for information purposes only. This article gives the views and opinions of the authors and does not reflect the views and opinions of the Impact of Social Science blog (the blog), nor of the London School of Economics and Political Science.

In text image reproduced with permission of the author. Featured Image Credit: Alan Warburton, © BBC, Better Images of AI, Virtual Human (CC-BY 4.0)