In his second article on the minimum wage, Alan Manning looks at the history of the policy since 1938, finding that the federal minimum remains relatively low compared to that in most other OECD countries. He writes that Presidential proposals, which began nearly a year ago, may see an increase in the minimum wage to over $10 an hour. He argues that such an increase would be even more effective if matched with greater use of the current Earned Income Tax Credit, which would help the poorest; working together, the two policies would prevent benefits from being shifted to employers.

In his 2013 State of the Union address, President Obama called for a hike in the federal minimum wage to $9 an hour from $7.25 by the end of 2015. More recently, he has supported Democratic proposals to raise it even further to $10.10. These proposals reopened a debate the United States has had three times in the past three decades. The battle lines are familiar. Most Democrats have lined up with the president, echoing his declaration that “no one who works full time should live in poverty.” Business lobbies, notably the U.S. Chamber of Commerce, are opposed – as are traditional Congressional Republicans. And the party’s newly vocal libertarian wing is of the same opinion.

But, unlike their representatives, Republican voters are divided: a Gallup Poll in March last year found that 50 percent of them supported the increase. Indeed, the minimum wage stands out as a policy typically associated with the left that commands support across the political spectrum: this concrete link between hard work and a living wage, it seems, is deeply ingrained in Americans’ sense of fairness. If the past is prologue (not a sure thing in gridlock-prone Washington), the outcome will be an increase in the federal minimum, likely to be phased in over a few years.

A two-minute history

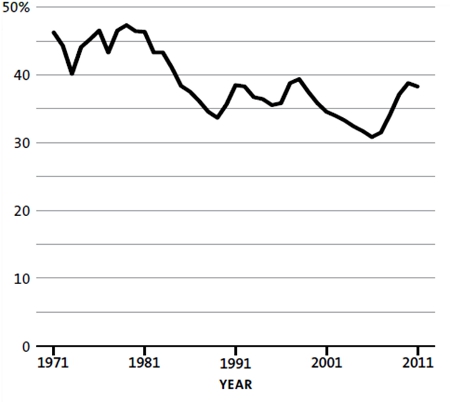

The federal minimum wage was introduced by New Deal Democrats in 1938 and initially set at 25 cents per hour. Because prices and market wages have risen so dramatically since then, this figure and subsequent increases don’t tell us much. It is more informative to express the minimum as a fraction of median hourly earnings – how much workers in the middle of the wage distribution earn. By that measure, the minimum wage reached its 40-year peak in 1979, when (by the calculation of the OECD) it equaled 48 percent of median earnings, as shown in Figure 1 below. Since then, there have been staged increases in 1980-81, 1990-91, 1996-97 and 2007-9. Each was quite large in nominal terms, which might lead to the conclusion that the minimum wage has been rising in real terms. But thanks to inflation, the minimum wage was only 38 percent of the median in 2011. If the $9 floor took effect immediately, the increase would return the minimum wage to about its level in 1979.

Figure 1 – The minimum wage as a percentage of the median wage in the U.S. 1971-2011

Source: OECD
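The claim that a $9 floor would return the minimum to roughly its 1979 level can be checked with a few lines of arithmetic. The sketch below uses only figures quoted in the text ($7.25, $9, 38 percent, 48 percent) and assumes, as the "took effect immediately" framing implies, that the median wage stays at its 2011 level. The variable names are illustrative and do not come from the OECD data.

```python
# Figures quoted in the article
current_minimum = 7.25      # dollars per hour
proposed_minimum = 9.00     # dollars per hour
ratio_2011 = 0.38           # minimum as a share of the median wage, 2011
ratio_1979 = 0.48           # minimum as a share of the median wage, 1979

# Assumption: the median wage is unchanged from 2011, so it can be
# backed out from the current minimum and the 2011 ratio.
implied_median = current_minimum / ratio_2011

# Share of the median that a $9 minimum would represent
proposed_ratio = proposed_minimum / implied_median

print(f"Implied 2011 median wage: ${implied_median:.2f} per hour")
print(f"$9 minimum as share of median: {proposed_ratio:.1%}")
print(f"Gap to the 1979 ratio: {ratio_1979 - proposed_ratio:.1%}")
```

Under that assumption the $9 minimum comes out at roughly 47 percent of the median, within about a percentage point of the 1979 figure.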

States have the option to set their own minimums above the federal minimum, and 17 of them (typically, high-wage states) currently exercise this right. A few high-cost-of-living cities have chosen to do so, too; San Francisco has the highest rate in the nation, at $10.55 per hour.

Most highly industrialized countries have minimum wages (and the ones that don’t, like the Nordic countries, have something more or less equivalent integrated into collective bargaining). Indeed, the trend in recent years has been for more countries to introduce minimum wages – it is likely Germany will do so in the near future.

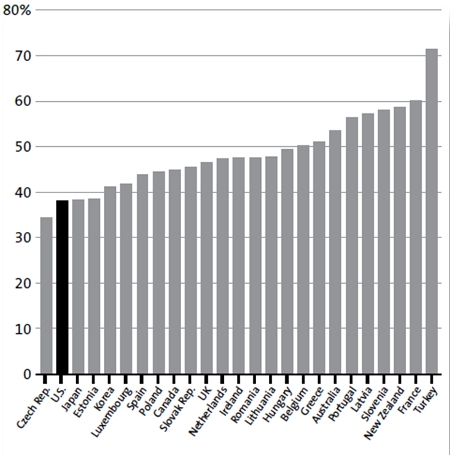

By OECD metrics, the U.S. federal minimum remains low compared to other countries, as Figure 2 shows. This does not necessarily mean it is relatively low in terms of purchasing power – the United States is a rich country, after all, with a per capita income well above that of all large European countries. In fact, the proposed increase would move the United States to the middle of the pack, still a long way behind France or New Zealand.

Figure 2 – The minimum wage as a percentage of the median wage, by country, 2011

Source: OECD

But there is one way in which the U.S. minimum does stand out in international comparisons: it does not vary with the worker’s age. There is one exception: employers can pay teenagers as little as $4.25 per hour. This option is hardly ever used, though, and, in any event, applies only to the first 90 days on the job. As a result, the federal minimum is very high in relation to market-driven youth earnings, running about 90 percent of median teenage earnings in 2012. By comparison, the minimum wage for 18- to 20-year-olds in Britain is about 80 percent of the adult minimum, while 16- and 17-year-olds are guaranteed just 60 percent. Thus, although Britain has a minimum wage for adults that is higher as a proportion of median earnings than the U.S. minimum, its youth minimum wage is lower. Comparisons with other OECD countries lead to parallel conclusions.

In his 2013 address, Obama also proposed to index the federal minimum to consumer prices, heralding a fade-out of the set-piece political battles that recur every decade or so and usually end in large nominal increases that merely make up for ground lost to inflation. It’s hard to argue with indexing; if it’s a good idea to have a minimum wage in the first place, it surely is right to make it self-adjusting, rather than allowing it to fall at the caprice of the cost of living and then repairing the damage with new legislation.

But, inevitably, it is the proposal to raise the minimum wage by a seemingly hefty 25 percent that has attracted the most attention. Supporters emphasize that the proposed hike would provide a much-needed income boost for poor families, while opponents focus on the worry that it would price some low-wage workers out of their jobs. Both sides cite a barrage of conflicting studies to support their positions – and understandably, anyone coming to the issue with an open mind is likely to leave bewildered.
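For reference, the size of the proposed increases follows directly from the wage figures quoted earlier in the article. This is a minimal sketch; `percent_increase` is a hypothetical helper introduced here for illustration.

```python
def percent_increase(old_wage, new_wage):
    """Return the increase from old_wage to new_wage as a percentage."""
    return (new_wage - old_wage) / old_wage * 100

current = 7.25  # current federal minimum, dollars per hour

# The two proposals mentioned in the article
to_nine = percent_increase(current, 9.00)
to_ten_ten = percent_increase(current, 10.10)

print(f"$7.25 -> $9.00:  {to_nine:.1f}% increase")
print(f"$7.25 -> $10.10: {to_ten_ten:.1f}% increase")
```

The move to $9 works out to a little over 24 percent, which the text rounds to 25.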

Who gets the minimum?

Those who oppose the rise in the minimum wage conjure an image of the typical minimum-wage worker as a teenager or college student working for pin money and experience, who lives in a household that is not poor and can expect to earn far more than the minimum in later life. Those who support it don’t buy that idea. The White House’s 2013 fact sheet on the minimum wage emphasized that only one minimum-wage worker in five is a teenager, reminding the undecided that a worker on the job 40 hours a week, 50 weeks a year earning the current minimum makes just $14,500. This is below the poverty threshold used by the Census Bureau for all households with more than one mouth to feed.
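The $14,500 figure is simply the hourly minimum multiplied out over a full-time year. The sketch below reproduces it and, as an illustration, applies the same 40-hour, 50-week schedule to the $9 proposal; `annual_earnings` is a hypothetical helper, and the figures are gross pay before any taxes or credits.

```python
def annual_earnings(hourly_wage, hours_per_week=40, weeks_per_year=50):
    """Gross annual pay for a worker on a fixed weekly schedule."""
    return hourly_wage * hours_per_week * weeks_per_year

# Current federal minimum and the $9 proposal, both from the article
at_current = annual_earnings(7.25)
at_nine = annual_earnings(9.00)

print(f"At $7.25 an hour: ${at_current:,.0f} a year")
print(f"At $9.00 an hour: ${at_nine:,.0f} a year")
```

On the same schedule, the $9 minimum would add $3,500 a year to gross pay.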

There is some truth to both images. Minimum-wage workers are more likely to be found in poor households. But the minimum is not tightly targeted: many minimum-wage workers are not from poor households, and many poor households do not contain minimum wage workers. Indeed, the poorest households are those with no one working, so increases in the minimum don’t do them any good.

Minimum wages or earned income tax credit? Yes.

If history holds true, then this political debate will result in some increase in the minimum wage, so it is perhaps more productive to focus on what the increase would look like, rather than whether it should occur. Many economists, including many who support the minimum wage increase, would prefer to see greater use of tax-based subsidies for low-paying work, an approach embodied in the current Earned Income Tax Credit. That credit gives low-wage workers supplements to their earnings keyed to wage rates, hours worked and the number of dependents; the subsidies are gradually reduced as earnings increase.

Only poor households are eligible for the credit – teenagers working nights to save up for wheels need not apply – so it is a more direct, better-targeted tool for fighting poverty. Note the potential for slippage, though. An employer might be tempted to reduce the wages of workers who are getting supplements from Uncle Sam, in effect grabbing a share of the bounty intended to fight poverty.

In a highly competitive labor market, that wouldn’t be possible because victimized workers could move to other jobs. But if (as I have suggested in a previous post) competition among employers is not that intense, we would expect some of them to capture a portion of the Earned Income Tax Credit. Indeed, a recent study by Jesse Rothstein of Princeton concluded that about one-quarter of credit payments ended up in the pockets of employers.

However, a minimum wage can mimic the effects of competition in an imperfectly competitive labor market, preventing the credit’s benefits from being shifted to employers, as well as raising wages without reducing employment. So the minimum wage and the Earned Income Tax Credit are best seen as complements to each other, not as substitutes.

Apocalypse not

It seems very likely that the United States will end up with a $9 minimum wage in the not-too-distant future. This will be neither the disaster its opponents apparently fear nor the panacea its more enthusiastic supporters suggest. In fact, the academic and political energy expended on the issue amounts to overkill, in large part because it distracts from the issue of the imperfect competitiveness of labor markets. So the best part of the president’s proposals may well be the indexing of the minimum, ending the episodic need for legislation.

That will be bad news for people like me who earn fame and fortune – well, we try, anyway – by writing about the minimum wage and its critics. But it will be good news for low-wage workers in America, who will no longer be hostage to a debate long on ideology and short on facts.

This article is based on a paper from the Milken Institute Review.

Note: This article gives the views of the author, and not the position of USApp– American Politics and Policy, nor of the London School of Economics.

_________________________________________

Alan Manning – LSE Economics

Alan Manning is professor of economics at LSE and director of the communities programme in the Centre for Economic Performance at LSE.