While social media can be a great source of information and insight, it is also awash with misinformation. How can social media users combat this? In new research which focuses on health information, Emily Vraga finds that single tweets by social media users are ineffective at correcting false information, but they can be effective if they are followed by a response from an expert organization which has earned the public’s trust, such as the Centers for Disease Control.

The public increasingly turns to social media as a source of information about current events, science and technology, health, and medicine. Yet the information shared on sites like Facebook and Twitter often does not come from expert sources and may not be vetted for accuracy. And with emerging health crises, even prominent news organizations can make mistakes, driven by viewer expectations and the pressure to break news stories. As a result, misinformation proliferates on social media, potentially skewing public beliefs.

So what should you – as a social media user – do to combat misinformation on these platforms? Our research suggests that users should respond with correct information and links to expert sources. Expert organizations that have earned the public’s trust, such as the Centers for Disease Control (CDC), are even more effective: these experts may only need a single post to correct misinformation.

Such misinformation is especially concerning for health issues, as it can hinder effective public response. The Zika virus emerged as a global health concern in 2016, and although scientists reached consensus about its causes and effects, misinformation about the virus propagated on social media. One such rumor was that GMO mosquitos caused the Zika virus. This rumor is directly at odds with one proposed solution, which is to use GMO mosquitos to reduce mosquito populations and hinder the spread of mosquito-borne illnesses like Zika.

“Zika – Richmond VA” by Tom Woodward is licensed under CC BY SA 2.0

Our research involved showing a group of college undergraduates a simulated Twitter feed in the fall of 2016. Most participants saw an unknown individual in the feed sharing a news story from USA Today wrongly claiming that the Zika outbreak was caused by the release of genetically modified mosquitos in the US. We varied who responded to correct the misinformation: the correction came from another Twitter user, from the CDC, or from both the CDC and another Twitter user. In a control condition, participants saw the same simulated Twitter feed minus any reference to the Zika virus. We asked participants about their attitudes towards the causes of the Zika virus both before and after they saw the simulated Twitter feed, and compared how each version of the feed affected their misperceptions about the cause of the virus.

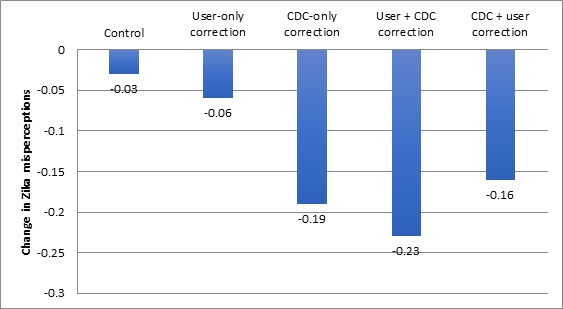

We found that a single anonymous Twitter user was not effective in reducing misperceptions, although previous research suggests that corrections from two users can be effective. As Figure 1 shows, when the CDC responded saying that Zika was not caused by GMO mosquitos, participants reduced their belief in this misinformation compared to a control. In addition, the CDC adding its reply after another user reduced audience misperceptions further. In contrast, a user adding a second reply after the CDC had corrected the incorrect story was not helpful.

Figure 1 – Changes in Zika misperceptions by correction type

In fact, when a user added a second correction after the CDC’s response, it created confusion among those who originally did not believe GMO mosquitos caused Zika to spread. We suspect that the seemingly credible source, USA Today, created misperceptions among this group, even when they remembered a correction coming from a user and even when that correction included a link.

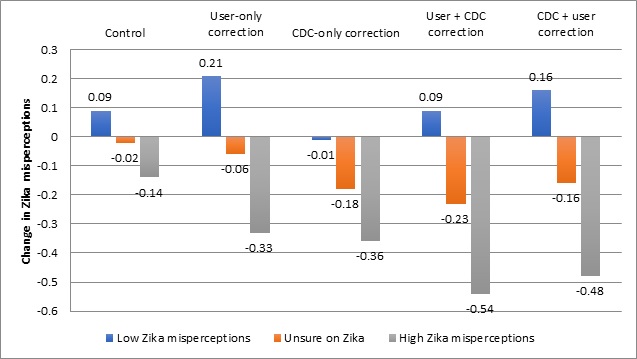

Overall, however, the responses were effective in reducing misperceptions among the group most in need of correction: those who initially believed GMO mosquitos were to blame for the spread of the Zika virus. As Figure 2 shows, when these individuals saw a correction from the CDC – and especially from both the CDC and another user – they were less likely to continue to believe the false information.

Figure 2 – Changes in Zika misperceptions by correction type and level of misperception

Importantly, responding to the Twitter post to correct the misinformation did not affect the CDC’s credibility. The public generally respects the CDC, and correcting misinformation on Twitter did not harm its credibility, even among groups initially inclined to believe the misinformation.

Before offering conclusions, we must recognize the limitations of our study. First, our results come from participants viewing an artificial Twitter feed, rather than their own feed. People may respond differently if they see their own friends or connections being corrected. However, the potential for seeing an exchange between unknown others – especially when using a hashtag like #zika – remains high. Second, we looked at corrections of false information from a seemingly credible source – a news article from USA Today. We expect the effect of expert correction will be stronger when the original source is less credible.

Overall, both individuals and experts should be correcting misinformation when they see it on Facebook or Twitter. Doing so creates opportunities for what we call observational correction, when social media users update their own attitudes after witnessing another user being corrected. Misinformation is often “sticky,” but immediate corrections of misinformation on social media should prevent false beliefs from being accepted by the public. Users should offer multiple corrections using links to expert sources, but should desist from adding another response after an expert organization like the CDC has corrected the misinformation. Experts should consider monitoring controversial topics and responding immediately to misinformation with accurate content. Correcting misinformation on social media is ultimately everyone’s responsibility.

- This article is based on the paper, ‘Using Expert Sources to Correct Health Misinformation in Social Media’, in Science Communication.

Note: This article gives the views of the author, and not the position of USAPP – American Politics and Policy, nor the London School of Economics.

_________________________________

About the author

Emily K. Vraga – George Mason University

Emily K. Vraga is an assistant professor in the Department of Communication at George Mason University. Her research focuses on how individuals process news and information about contentious political, scientific, and health issues, particularly in response to disagreeable messages they encounter in digital media environments. She is especially interested in testing methods to limit biased processing, to correct misinformation, and to encourage attention to more diverse content online. For more information, please visit her website at http://emilyk.vraga.org/