Creating the innovations that drive total factor productivity (TFP) growth takes both ideas and firms that process those ideas into new products or techniques. However, while economic theory and innovation policy focus upon idea supply alone, it is idea processing capability that drives US TFP growth. Kevin R. James, Akshay Kotak, and Dimitri Tsomocos write that understanding and improving the economy’s idea processing capability must be a core element of an effective growth strategy.

An idea is not an innovation; an idea processed into a new product or technique is an innovation. Alexander Fleming, for example, famously discovered penicillin in 1928, but it took years of practical engineering by pharmaceutical firms during the 1930s and 1940s to process that idea into an effective drug. Yet current economic theory, and so innovation policy, focuses almost entirely upon idea supply and largely ignores idea processing capability. This focus is misplaced.

In a new working paper, we develop a theory of TFP growth in which both idea supply and idea processing capability play a central role. We find that the disastrous fall of average annual US TFP growth from 1.9% over 1951/1969 to 0.8% over 1980/2019 is due to a decline in the economy’s idea processing capability, not a shortage of ideas. And if idea supply isn’t the problem, then rounding up the usual suspects for increasing it (higher R&D spending, easier immigration for STEM workers, and so on) isn’t the solution. Fixing low TFP growth requires a different set of tools.
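To give a sense of scale for the gap between 1.9% and 0.8% a year, here is a back-of-the-envelope sketch. It is an illustration only, not a calculation from the working paper: it assumes each rate holds constant and compounds annually over a 40-year horizon (roughly the length of 1980/2019), and the function name `tfp_level_multiple` is our own.

```python
def tfp_level_multiple(annual_growth: float, years: int) -> float:
    """TFP level after `years` of constant annual growth, relative to a starting level of 1.0."""
    return (1.0 + annual_growth) ** years


# Assumption: a 40-year horizon, roughly the length of the 1980/2019 period.
HORIZON_YEARS = 40

high_growth_level = tfp_level_multiple(0.019, HORIZON_YEARS)  # 1951/1969 average rate
low_growth_level = tfp_level_multiple(0.008, HORIZON_YEARS)   # 1980/2019 average rate

print(f"TFP multiple at 1.9% a year: {high_growth_level:.2f}")
print(f"TFP multiple at 0.8% a year: {low_growth_level:.2f}")
print(f"Ratio of the two paths: {high_growth_level / low_growth_level:.2f}")
```

Under these assumptions, TFP a little more than doubles over 40 years at the higher rate but rises by a little under 40% at the lower one.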

Is growth over?

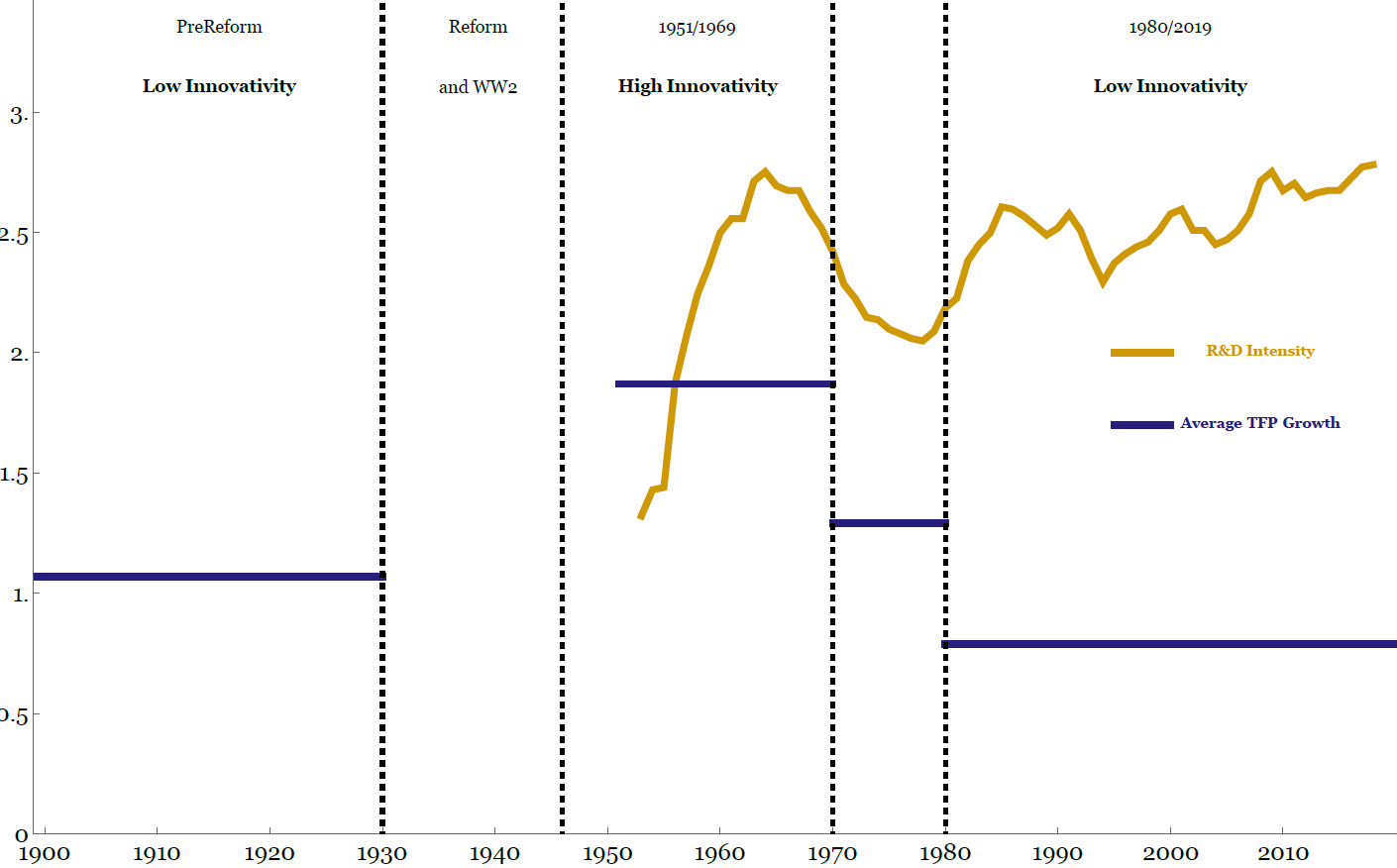

The empirical facts on TFP growth are not in dispute. Since the late 1960s, TFP growth has declined sharply despite a significant increase in R&D intensity (R&D spending as a percentage of GDP). The puzzle is why.

Figure 1 – Innovativity, TPF growth, and R&D intensity

Sources for TFP Growth: Bakker, Crafts, and Woltjer (2019) and San Francisco Federal Reserve. Source for R&D: NSF.

Endogenous growth theory provides the standard framework to analyse this question. This theory begins by assuming that ideas drive TFP growth. Idea supply, in turn, is determined by a combination of the cost of finding ideas and the resources devoted to finding ideas (R&D intensity). So, within this framework, the fact that TFP growth is falling means that idea supply is falling. If idea supply is falling while R&D intensity is rising, then it can only be because the cost of finding ideas is going up.

If this is the case, then we will need to spend ever more on finding ideas just to maintain TFP growth at its current abysmal level. Indeed, Bloom, Jones, Van Reenen, and Webb estimate that standing still on TFP growth will require R&D intensity to double every 13 years. Doubling R&D intensity from current levels will cost over $600 billion per year in the US and over $50 billion per year in the UK. Plainly, this isn’t going to happen. If R&D intensity doesn’t double every 13 years — and assuming that endogenous growth theory is the right framework to analyse this question — then TFP growth is going to slow still further.
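To see what "doubling every 13 years" implies in annual terms, here is a small illustrative sketch. It assumes smooth exponential growth, which is our simplification rather than anything stated in the Bloom, Jones, Van Reenen, and Webb estimate; the function names are hypothetical.

```python
def implied_annual_growth(doubling_years: float) -> float:
    """Constant annual growth rate that doubles a quantity every `doubling_years` years."""
    return 2.0 ** (1.0 / doubling_years) - 1.0


def multiple_after(years: float, doubling_years: float) -> float:
    """How many times larger the quantity is after `years` of such growth."""
    return 2.0 ** (years / doubling_years)


DOUBLING_YEARS = 13  # doubling period quoted in the text

print(f"Implied annual growth in R&D intensity: {implied_annual_growth(DOUBLING_YEARS):.1%}")
print(f"R&D intensity multiple after 39 years: {multiple_after(39, DOUBLING_YEARS):.0f}x")
```

On this reading, R&D intensity would have to grow by roughly 5.5% a year, every year, and would stand at eight times its starting level after three doubling periods.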

This line of thinking thus naturally leads to Robert Gordon’s enormously influential conjecture that “economic growth was a one-time-only event” that is now over.

But is endogenous growth theory the right framework?

Innovativity

We propose a new innovativity framework, where innovativity is the economy’s ability to produce the innovations that drive TFP growth. Innovativity depends upon both idea supply (as in standard endogenous growth theory) and idea processing capability. So, the evolution of average TFP growth over time is driven by changes in the binding constraint on innovativity. And this constraint could be either idea supply or idea processing capability.

Based upon studies of the innovation process in science and industry, we posit that an economy’s idea processing capability increases with the proportion of firms that choose long-horizon innovation strategies (think Bell Labs in its glory years) rather than short-horizon quick-win strategies.

A firm chooses the strategy that financial markets reward. When financial markets work well (useful and accurate financial reporting, clean and transparent markets, etc.), firms can credibly engage with key stakeholders to pursue long-horizon strategies. When markets work poorly, firms lack that ability and so pursue quick-win strategies that demand less commitment from key stakeholders instead. The economy’s idea processing capability therefore increases with financial market effectiveness.

Our innovativity framework produces an empirical measure of innovativity based upon financial market data that is completely independent of the TFP data itself. If idea processing capability (rather than idea supply) is the binding constraint on TFP growth, then: a) changes in market effectiveness will drive changes in innovativity; and b) changes in innovativity will in turn predict changes in average TFP growth. This is precisely what we find.

The financial market reforms of the 1930s/1940s significantly improved financial market effectiveness relative to the de facto unregulated Pre-Reform period, shifting innovativity from its Low state into the High state for 1951/1969. We find that financial market effectiveness declined after the 1960s, pushing innovativity back to its Pre-Reform Low state for the 1980/2019 period. As predicted, average TFP growth tracks innovativity.

Of course, the down/up/down pattern of average TFP growth over the last 120 years is well known in an empirical sense. But, to the best of our knowledge, our innovativity analysis is the first that both predicts this pattern and predicts the transition dates between TFP growth regimes.

So, it is plausibly the case that the evolution of average US TFP growth over the last 120 years is due to variations in idea processing capability rather than idea supply. Our analysis suggests in particular that the economy’s poor record on TFP growth after 1980 is due to a decline in the economy’s idea processing capability brought about by a fall in financial market effectiveness. These results have significant implications for innovation policy.

We need a new drug

If the fall in average TFP growth is due at least in part to a decline in financial market effectiveness, then an effort to improve financial market effectiveness should be a key component of growth strategy. Yet, the financial market regulatory regime that grew out of the 1930s/1940s reforms is still there and functioning (on its own terms). So, is there actually any scope to improve market effectiveness?

Our analysis implies that the current financial regulatory regime just doesn’t work as well as it used to from the perspective of the economy as a whole. Like penicillin, the 1930s/1940s reforms worked brilliantly for a time. But eventually the targets developed resistance.

So, if our analysis is correct, we need new reform drugs that work for markets and firms as they are now.

On the other hand, we could be wrong. That said, in comparison to the billions of dollars per year that it will take to move the dial on R&D intensity and the disruptions that significantly relaxing immigration restrictions for STEM workers will inevitably entail, it will cost nothing to find out. And, again, it is far from certain that spending tens (or hundreds) of billions of dollars per year to improve idea supply will solve the low TFP growth problem, as it is not clear that idea supply is even the issue.

So, exploring and developing policies to improve the economy’s idea processing capability has an enormous upside and not much downside.

The end of TFP growth condemns us to a dismal neo-Malthusian future. The next UK government and the next US administration need an economic strategy to avoid this catastrophe. Rolling the dice on a major effort to improve the economy’s idea processing capability must be part of it.

- This blog post is based on the working paper Ideas, Idea Processing, and TFP Growth in the US: 1899 to 2019, and first appeared at LSE Business Review.

- Featured image by Jezael Melgoza on Unsplash

Note: The post gives the views of its authors, not the position of USAPP – American Politics and Policy, nor of the London School of Economics, nor of the IMF, its executive board, or its management.

Shortened URL for this post: https://bit.ly/3zKutc8

About the authors

Kevin R James – LSE Systemic Risk Centre

Kevin R James is a co-investigator in the Systemic Risk Centre at LSE. He is leading a project on Rebooting Financial Regulation which aims to improve economic growth and enhance financial stability by increasing financial market effectiveness. Twitter: @kevinrogerjames

Akshay Kotak – LSE Systemic Risk Centre

Akshay Kotak is a research associate in the Systemic Risk Centre at LSE, and lead economist at the Information and Communications Technology Council (ICTC) of Canada. His research interests include financial intermediation, financial market effectiveness, and the economic impacts of digital technology. Email: a.kotak@lse.ac.uk

Dimitrios P Tsomocos – Said Business School and St. Edmund Hall, University of Oxford

Dimitrios P Tsomocos is a professor of financial economics at Said Business School and a fellow of St. Edmund Hall, University of Oxford. His research focuses upon financial fragility, macroprudential policy, and banking in general equilibrium. Email: dimitrios.tsomocos@sbs.ox.ac.uk