While optimism can inspire efforts to connect the spheres of science, policy, and practice, it does little to remove the real boundaries between them. Systematic investigation of “bright spots” – or success stories – would likely yield some interesting learning points but, as David Christian Rose suggests, it may be unwise to cherry-pick evidence of what works by only analysing success stories. What’s more, it seems unlikely that further study would throw up any truly novel solutions to how evidence is used in policy and practice. Instead, focus should shift to overcoming institutional barriers that are preventing progress, such as a lack of incentives and training.

A recent Nature Communications article by Chris Cvitanovic and Alistair Hobday, also featured on the Impact Blog, argued for more optimism at environmental science-policy-practice interfaces, as well as for the systematic study of bright spots to highlight success factors. On the former point, there is certainly a place for optimism. Indeed, this reflects the thrust of many studies, for example, in nature conservation that seek to identify where science has influenced policy, as well as highlighting the power of good news stories (see also, Conservation Optimism). Optimism can galvanise researchers to engage with decision-makers (e.g. policy officials, practitioners, stakeholders) with a positive and determined attitude. Continued engagement, rather than giving up too easily, is important, and if researchers (especially at early-career stages) feel more empowered to engage with decision-makers in the first instance, then this can only improve the likelihood of impact.

There are also likely to be small gains in learning from such “bright spots”. Indeed, lessons have already been learned from many studies that have analysed cases of policy impact, which has led to the identification of several principles of good policy engagement. To a certain extent, success factors, such as the use of knowledge brokers, good presentation of knowledge, and many others listed by Cvitanovic and Hobday, may be applicable in a variety of contexts and improve the chances of a successful outcome.

However, does such an approach offer false hope to the environmental science community, who may think that through renewed optimism and the systematic study of bright spots, we are suddenly going to find the silver bullet to good policy engagement? Now, that is an extreme claim, and not, it must be said, one made by Cvitanovic and Hobday. Yet their article nevertheless calls for the systematic study of bright spots to identify successful principles of engagement. The phrase “systematic study” suggests the need for significant resources. Presumably the authors intend for a new research agenda to be implemented across many universities and science-policy-practice interfaces, with successes documented in detail. Given this significant investment, the authors must presumably think that the gains will be at least as significant as the inputs.

I argue this is unlikely for three main reasons, all rooted in policy work that has investigated the messy politics of evidence use: firstly, it is notoriously difficult to delineate where evidence has been influential; secondly, policymaking is context-specific; and thirdly, many studies of impact have already identified success factors, which are repeated without apparent progress in evidence use. With these reasons in mind, there is little justification for a systematic study of bright spots, but rather for more focus on implementing actions.

Optimism doesn’t make boundaries disappear

Before making these points, it is worth critically reflecting upon the power of optimism. I agree with the authors’ contention that optimism can be helpful. It can galvanise researchers to engage and motivate them to sustain that engagement, persevering even in periods of slow uptake. Telling optimistic stories to policymakers and the public is better than the constant narration of doomful scenarios, particularly in the context of environmental change.

But that is about as far as optimism goes. All the optimism in the world doesn’t change the fact that there is indeed a “gap” between science, policy, and practice. Although boundaries between science, policy, and practice are fluid – and certainly not as clear-cut as Margaret Thatcher’s contention that “scientists advise and ministers decide” – these spheres are obviously not the same. Gieryn’s seminal article on boundary work eloquently shows the differences between science and a whole host of other spheres, such as politics and religion. Infinite amounts of positive thinking will not change this fact. Nor is it envisaged that an optimistic mindset would overcome other barriers, such as the lack of interest in science from some policymakers. I have always approached policymakers with optimism, yet have still faced many cases where the interest in engaging is not reciprocated. My point here is simple – while we should approach interactions with policymakers and practitioners with optimism, we should not expect it to work miracles or remove barriers that are actually present.

Selecting case studies of success – a real challenge

It is unclear that the call for a “systematic study of bright spots” to develop effective strategies for policy engagement will yield sufficient novel advances to justify the necessary resources. The study of success stories in the policy sciences is nothing new; prominent policy scholars, such as Kingdon, Baumgartner and Jones, and Owens, have long been interested in why certain ideas, including the evidence underpinning them, come to influence policy. They are also interested in understanding why evidence does not influence policy, which is just as enlightening, and there seems little justification for cherry-picking evidence only from cases of success.

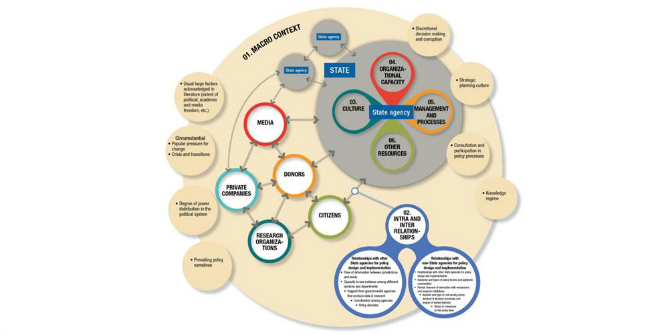

The real difficulty in documenting examples of success lies in the criteria used to select those case studies. Owens eloquently illustrates that scientific evidence impacts policy over varying timescales – occasionally quickly, more often quite slowly, sometimes never (Figure 1). The challenge for policy scholars, however, is to account for the “atmospheric” or diffuse impact of scientific evidence over time (see Owens, 2015). It is not always possible to identify whether scientific evidence has been influential, never mind how and why it was impactful (Owens’ so-called “invisible levers”). How will studies of bright spots decide which cases constitute success and how will they trace how evidence comes to influence policy over the course of longer timescales? Are we only interested in the “direct hits”? If so, doesn’t this underplay the important, “creeping” role of knowledge? Are we not interested in cases of no impact? Tracing diffuse impacts is made more difficult because many studies of environmental science-policy interfaces rely on surveys, interviews, and documentary analysis, rather than long-term ethnographies. Thus, accounting for serendipitous moments in the policy process, for example an important coffee meeting between key actors, is almost impossible.

Figure 1: Scientific evidence can influence policy over varying timescales (based on Owens, 2015). “Direct hits”, where science quickly influences policy; “dormant seeds”, in which science appears to be initially ignored but is actually influential at a later date; long-term diffuse impacts can occur when the policy landscape shifts in quite a radical way over a long timescale. It is often impossible to point to any one piece of scientific evidence which led to such a shift.

Context-specificity

The messiness of science-policy-practice interactions links to the second point on the context-specificity of policymaking. Policymaking is serendipitous, often reliant on interactions between a few key actors, and susceptible to external influences which create windows of opportunity or non-opportunity. How do we know success stories aren’t outliers caused by chance events? Since policy and practice rely on the decisions of different sets of policymakers in each place, the rules of the game are always different, and rarely predictable. A list of key principles for policy engagement is never going to guarantee success when applied to specific cases.

Haven’t we been here before?

My third and final point refers to the fact that many scholars of science-policy-practice interfaces, including myself, have used success stories to identify solutions aimed at improving the chances for evidence-informed policymaking. This has included the construction of frameworks populated with principles geared to successful engagement with decision-makers. What is clear from the literature, however, and particularly from a recent global study of barriers and solutions to evidence use in policy, is the regularity with which the same principles are recommended; e.g. building trust, good knowledge exchange. An assessment of the last decade of work on environmental science-policy interfaces will show the same solutions being proposed. Exactly the same solutions, in fact, repeated time and time again. In terms of proposing solutions, we have not moved very far, and thus we would be unlikely to find many more novel solutions to justify the effort of systematic study.

Action is needed, not more study

Rather than reinventing the wheel through a systematic study of bright spots, which is likely to highlight the same key principles of policy engagement, we should work to put the wheels on the vehicle of change. As a recent global study argued, the reason why there is a so-called science-policy-practice gap is not that actors in different spheres disagree on solutions to the problem, but rather that there are barriers to action. These are mainly institutional: for example, there is a lack of incentives and resources for scientists to engage with policymakers. A lack of incentives and training prevents some scientists from presenting knowledge in a policy-relevant way, for example in the form of systematic summaries of what works, which policymakers want. Funding calls rarely prioritise knowledge that is likely to have an impact on policy. On the other hand, policymakers and practitioners are rarely trained to understand science, and most lack the time for the sustained engagement (as do researchers) required to co-develop research questions with the research community.

If we address institutional barriers to progress, then tangible benefits will result. Important steps include increasing co-location of staff across spheres, reforming academic incentive structures to improve policy skills and engagement, funding policy support staff in academic departments, making evidence more easily accessible, and improving the capacity for policymakers and practitioners to communicate relevant priorities to researchers. All of these necessary reforms, amongst many more, require action. We already know what to do to improve interactions in environmental science-policy-practice interfaces and should optimistically work together to implement these solutions.

Note: This article gives the views of the author, and not the position of the LSE Impact Blog, nor of the London School of Economics.

Featured image credit: Andrej Lišakov, via Unsplash (licensed under a CC0 1.0 license).

About the author

David Christian Rose is a Lecturer in Geography at the University of East Anglia. He is interested in improving the use of evidence in policy and also in the responsible innovation of new agricultural technologies. He is soon to start working with Defra on co-designing agricultural policy post-Brexit. His ORCID is 0000-0002-5249-9021.