As we are on the cusp of technological change, history shifts its pace when touched with scientific vision. Artificial Intelligence (AI) is one such technical field that is transforming human society into one of robots and machines. AI includes machine learning, natural language processing, big data analytics, algorithms, and much more. However, as human intelligence is marked by intrinsic bias in decision-making, such characteristics can also be found in AI products that work with human-created intelligence. These phenomena of bias and discrimination – rooted in a cluster of technologies and embedded in social systems – are a threat to universal human rights. Indeed, AI disproportionately affects the human rights of vulnerable individuals and groups by facilitating discrimination, thus creating a new form of oppression rooted in technology.

AI as a tool of discrimination

With the advancement of AI in our societies, the issue of discrimination and systemic racism has taken up increasing space in political debates about technological growth. Article 2 of the UDHR and Article 2 of the ICCPR both articulate individual entitlement to all rights and freedoms without discrimination. Of course, this is difficult to apply in practice when considering the multitude of discriminatory opinions and oppressive practices that characterise human interaction. Though some naively perceive AI as the solution to this challenge, a technological tool that frees us from the bias of human decision-making, such opinions fail to account for the traces of human intelligence in AI technology.

Indeed, AI algorithms and face-recognition systems have repeatedly failed to ensure a basic standard of equality, particularly by showing discriminatory tendencies towards Black people. In 2015, Google Photos, which is considered an advanced recognition software, categorized a photo of two Black people as a picture of gorillas. When keywords such as ‘Black girls’ were inputted into the Google search bar, the algorithm showed sexually explicit material in response. Researchers have also found that an algorithm that identifies which patients need additional medical care undervalued the medical needs of Black patients.

Facial-recognition technology is now being adopted in the criminal justice systems of different states – including Hong Kong, China, Denmark and India – to identify suspects for predictive policing. Sceptics have pointed out that rather than mitigating and controlling police work, such algorithms enhance pre-existing discriminatory law enforcement practices. The unevaluated bias of these tools has put Black people at greater risk of being perceived as high-risk offenders, thus further entrenching racist tendencies in the justice and prison systems. Such racial discrimination inherent in AI undermines its transformative potential for society and violates the rights to equal treatment and protection.

While communities are now standing up for the rights of Black people through the Black Lives Matter movement, the increased implementation of AI in society is fostering digital bias and replicating the harm that is currently being fought against. In that sense, this technology disproportionately affects the vulnerable by exacerbating discriminatory practices already present in modern society.

Technology as a source of unemployment

The right to work and protection against unemployment is guaranteed under Article 23 of UDHR, Article 6 of ICESCR, and Article 1(2) of the ILO. Though the rapid increase of AI has transformed existing businesses and personal lives by improving the efficiency of machinery and services, such change has also birthed an era of unemployment due to the displacement of human labour. Referring to the exponential growth in technology, Robert Skidelsky writes in his book Work in the Future that “Sooner or later, we will run out of jobs”. This was also reiterated in a seminal study conducted by Oxford academics Carl Frey and Michael Osborne, which estimated that 47% of U.S. jobs are at risk of future automation due to AI.

In 2017, Changying Precision Technology, a Chinese factory producing mobile phones, replaced 90% of its human workforce with machines, which led to a 250% increase in its productivity and a substantial 8% drop in defects. Similarly, Adidas has moved towards ‘robot-only’ factories to improve efficiency. Thus, business growth no longer relies on a human workforce; in fact, human labour may negatively affect productivity. Until now, technology has had a more detrimental effect on low- and middle-skilled workers, with decreasing employment opportunities and falling wages, leading to the emergence of job polarisation. However, as technology continues to advance, many jobs that we would today consider protected from automation will eventually be replaced by AI. For example, AI-based virtual assistant software such as Siri, Cortana, Alexa and Google Assistant has steadily replaced personal assistants, foreign language translators, and other services that were previously reliant on human interaction.

The COVID-19 pandemic has already impacted millions of jobs, and a new wave of AI revolutions may further aggravate the situation. As AI is increasingly introduced in different job sectors, it seems that the poor will become poorer and the rich will become richer. Indeed, AI represents a new form of capitalism that strives for profit without the creation of new jobs; instead, a human workforce is perceived as a barrier to growth. There is thus an urgent need to address the consequences of AI for social and economic rights, through the development of a techno-social governance system that may protect the employment rights of humans in an AI era.

Controlling populations and movement

Freedom of movement is grounded in many international declarations and has been recognized as a fundamental individual right by many countries. AI’s ability to limit this right is specifically related to its usage for surveillance purposes. A report from the Carnegie Endowment for International Peace pointed out that at least 75 of 176 countries globally are actively using AI for security purposes, such as border management. There have been concerns regarding the disparate impact of surveillance on populations that are already discriminated against by police – such as Black people, refugees and irregular migrants – as predictive policing tools end up factoring in “dirty data” reflecting conscious and implicit bias. The Guardian reported that to keep a check on illegal immigration, dozens of towers equipped with laser-enhanced cameras were installed at the US-Mexico border in Arizona. In addition to this, the US government deployed a facial recognition system to record images of people inside vehicles entering and leaving the country.

Technological shifts have also impacted the military and humanitarian sectors. The increasing use of armed drones in warfare – in particular by the US in Pakistan and Afghanistan – has been repeatedly denounced as a violation of International Humanitarian Law, including in a 2010 UN report. An investigation by The Intercept of US military operations against the Taliban and al Qaeda in the Hindu Kush revealed that nearly nine out of ten people who died in drone strikes were not the intended targets. The rapid development of autonomous technology and AI has also resulted in fully autonomous weapons such as “killer robots”, which raise a host of moral, legal, and security concerns. Because such machines lack ethical judgment, there are concerns about their reliability and their errors of judgement, which might result in accidental deaths and the rapid escalation of conflicts. Indeed, Zachary Kallenborn’s article highlights the inability of these weapons to discriminate between combatants and non-combatants.

Moreover, the advent of ‘humanitarian drones’ – in which military technology is used for humanitarian purposes – has raised ethical dilemmas with regard to how this technology may negatively affect populations in need. There are decidedly adverse consequences for vulnerable groups, whose personal data has put them at further risk of violence. Biometrics has been used to register refugee populations with the UNHCR; while it is assumed to be an objective identification method, there is ample evidence that these tools simply codify discrimination. For example, biometric data collected from Rohingya refugees in India and Bangladesh was used to facilitate their repatriation rather than to integrate them into society, further exacerbating the suffering experienced by this community.

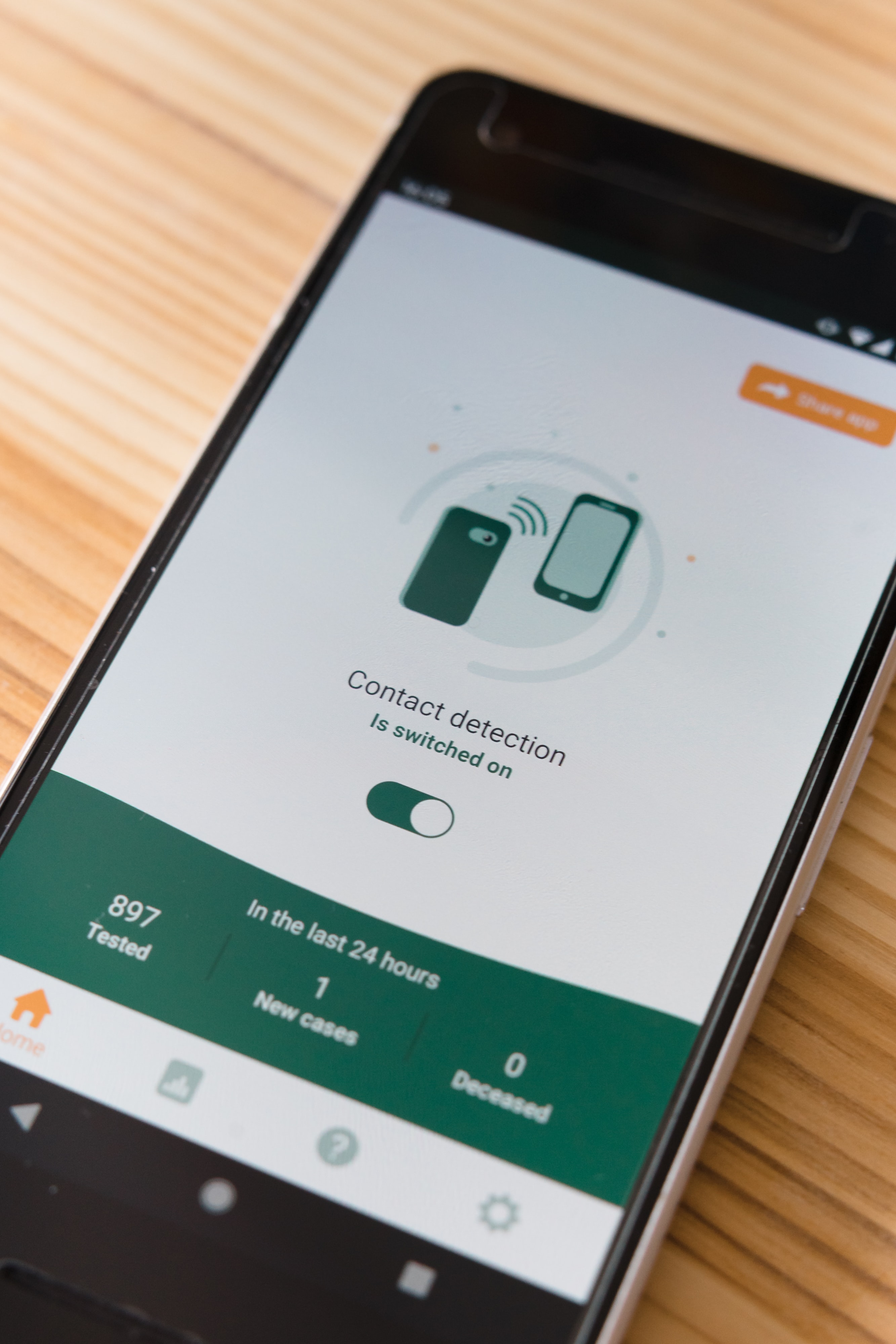

The rapid increase in dependency on AI to enforce social control during the present pandemic has also raised many privacy concerns. Applications such as India’s Aarogya Setu represent a dangerous mix of health data and digital surveillance. Such technologies are a potent threat to basic human rights and can be used as tools of exploitation and oppression. In fact, if the use of AI continues to remain widely unregulated, the human rights of vulnerable groups will undoubtedly suffer.

Conclusion

With the continuing expansion of AI’s reign, the tension between AI and human rights is increasingly manifesting itself as technology becomes central to our everyday lives and the functioning of society. As AI is perceived as an improvement of modern society, the lack of stringent data protection policies offers tech companies a society ready to be digitally exploited. With little regulation or accountability, these companies freely intrude into the lives of citizens and increasingly infringe on human rights. From fostering discrimination to invasive surveillance practices, AI has proven to be a threat to equal protection, economic rights, and basic freedoms. To reverse these trends, proper legal standards ought to be implemented in our digitally transforming societies. Increased transparency in AI decision-making processes, better accountability for tech giants, and the ability for civil society to challenge the implementation of new technologies in society are sorely needed. ‘AI literacy’ should also be encouraged through investment in public awareness and education initiatives, which would help communities to learn not only about the functions of AI, but also about its impact on our day-to-day lives. Unless suitable measures are enforced to safeguard the interests of human society, the future of human rights in this era of technology remains uncertain.

The article has vividly brought out the conflict between technology, i.e. AI, and HR at a base level. However, my take is that instead of vilifying AI for its adverse consequences, we as a global community should focus on integrating this key resource in the hands of governments and corporations towards the betterment of human lives. Whenever there is a conflict between the usage of AI and HR, rules of international behaviour like those promulgated for the internet and WWW should also be codified for AI, as this will ensure that no Frankenstein’s monster is let loose on the human race. Technology should be for the holistic betterment of mankind and not for domination over resources, be they human or natural.

I totally agree with you, Deepak. There is a need for the AI regime to flourish, but it should not be unfettered. We cannot read HR laws and AI laws as mutually exclusive. We have to adopt a culture where there is AI development, but within the HR framework, so that there can be sustainable development of a technologically advanced society.

Excellent read, thank you for the insight.

Thank you so much for your feedback.

This is an excellent article. Poor nations must come up with a system to defend the rights of their citizens against the deployment of AI. AI experiments are done in rich nations by rich individuals to protect the interests of the rich against the poor. Those deploying AI must be heavily taxed by governments.

I totally agree with the picture you have portrayed. AI has also fostered the evil of the digital divide, and we can surely see its side effects during the Corona pandemic.