The General Data Protection Regulation (GDPR), which has attracted widespread attention and comment in recent weeks, takes effect on 25 May 2018. Sonia Livingstone, Professor of Social Psychology in LSE’s Department of Media and Communications, reflects on some of the challenges the new rules pose for children. She argues that had children been consulted and their perspectives understood, the Regulation would be better designed to fulfil their rights than it is at present. [Header image credit: Y.C.V. Chow, CC BY-NC 2.0]

A series of high-profile data breaches and hacks, some involving children’s personal data and some arising from insufficient protections built into the emerging generation of smart devices, has raised urgent questions about whether children’s privacy is sufficiently valued in regulatory regimes for personal data protection. Many stakeholders, including child rights organisations concerned with children’s right to privacy as well as their rights to provision, participation and protection, have been asking: what should be done?

In Europe, the General Data Protection Regulation (GDPR) takes effect on 25 May 2018, after several years in the making. It has been designed as a concerted, holistic and unifying effort to regulate personal data in the digital age. At a time when many public, private and third sector organisations have only recently ‘gone digital’, and when data has very rapidly become ‘the new currency’, the scope of the GDPR is vast. The legislation obliges data controllers and processors to fulfil the rights of data subjects by:

- processing personal data lawfully, securely and fairly, in ways that are transparent to, and comprehensible by, data subjects;

- collecting data, and profiling individuals, in ways limited to specific, explicit and legitimate purposes, with special provisions for the treatment of “sensitive” data;

- facilitating individuals’ rights to access, rectify, erase and retrieve their personal data, among other related rights and under specified circumstances;

- meeting a host of governance requirements to ensure compliance, informed by the conduct of risk-related impact assessments.

These obligations are designed to benefit the public and organisations alike, so they should benefit children too. However, a host of uncertainties regarding the interpretation and implementation of the GDPR are already becoming evident, with stakeholders responding in diverse and sometimes misguided ways as they seek to comply with (or belatedly catch up with) the GDPR.

Until recently, talk of the GDPR was rather esoteric, largely confined to legal, regulatory and technical experts. But recent weeks have seen the public bombarded with demands to update social media privacy settings and with a flood of email requests to re-consent to marketing and mailing lists. At the same time, the mass media have been reporting scandals over election hacking (especially those based on personal data illegally collected via Facebook by Cambridge Analytica) and fights over the so-called “digital age of consent,” as in Ireland. This has brought a degree of mass awareness to complex regulatory deliberations, including among parents and children. We don’t yet know how the public will respond. With increased “data literacy”? With more protective actions regarding their privacy? Or with fatalism, even cynicism, that once online their data is no longer under their control?

The GDPR makes some specific requirements in respect of children’s data, for reasons set out in recital 38:

“Children merit specific protection with regard to their personal data, as they may be less aware of the risks, consequences and safeguards concerned and their rights in relation to the processing of personal data. Such specific protection should, in particular, apply to the use of personal data of children for the purposes of marketing or creating personality or user profiles and the collection of personal data with regard to children when using services offered directly to a child. The consent of the holder of parental responsibility should not be necessary in the context of preventive or counselling services offered directly to a child.”

While this statement has much merit, it is only a recital: it guides implementation of the GDPR but lacks the legal force of an article. In a recent LSE Media Policy Project roundtable, it became clear that there is considerable scope for interpretation, if not confusion, regarding:

- the legal basis for processing (including, crucially, when processing should be based on consent);
- the definition of an information society service (ISS) and the meaning of the phrase ‘directly offered to a child’ in Article 8, which specifies a so-called “digital age of consent” for children (16 by default, though member states may set it as low as 13);
- the rules on profiling children;
- how parental consent is to be verified for children younger than the age of consent;
- when and how risk-based impact assessments should be conducted, including how they should attend to intended or actual child users.

It is also unclear in practice just how children will be enabled to claim their rights or seek redress when their privacy is infringed.

We have been blogging about these challenges over recent months, and will continue to keep a close eye on how the GDPR will serve to protect (or, at worst, confuse or undermine) children’s privacy and data protection. Already there are some surprises. WhatsApp, currently used by 24% of UK 12–15-year-olds, has announced it will restrict its services to those aged 16+, even though in many European countries the digital age of consent is set at 13. Instagram is now asking its users whether they are under or over 18, perhaps because the United Nations Convention on the Rights of the Child (UNCRC) defines a child as anyone under 18? Do such changes mean effective age verification will now be introduced, or will the GDPR unintentionally encourage children to lie about their age to gain access to beneficial services, as part of their right to participate? How will this protect them better? And what does this increasingly complex landscape mean for media literacy education, given that schools are often expected to make up for regulatory failures by teaching children to engage with the internet critically?

As many in the child rights community have also noted, children’s voices and experiences have been signally lacking from these debates, ignored precisely by the states and European regulatory bodies that have officially promised to recognise their right to be heard. It seems clear to many of us that, had children been consulted and their perspectives understood, the GDPR would be better designed to fulfil their rights than it is at present, and a number of the unintended and negative consequences now unfolding could have been avoided.

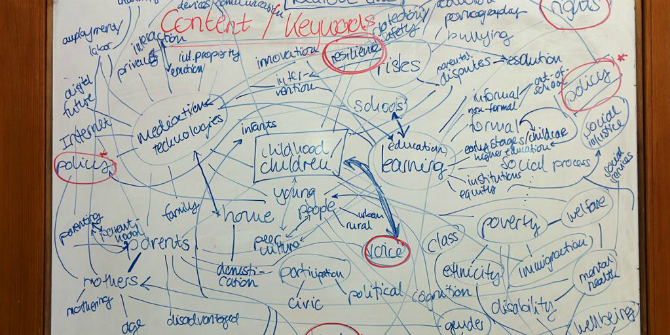

Still, we will see how things unfold in the coming months. We are beginning a new project to explore how children themselves understand the ways their personal data is used, and how their data literacy develops between the ages of 11 and 16. We will do this by (1) conducting focus group research with children; (2) organising child deliberation panels to formulate child-inclusive policy and educational/awareness-raising recommendations; and (3) creating an online toolkit to support and promote children’s digital privacy skills and awareness. Watch this space!

Notes

This post was originally published on the LSE Media Policy Project blog and has been reposted with permission.

This post gives the views of the author and does not represent the position of the LSE Parenting for a Digital Future blog, nor of the London School of Economics and Political Science.