It has been suggested in recent weeks that one source of variation in the polls of vote intention for the general election is the difference between traditional phone polls and those conducted online. In this post, Matt Singh recasts the debate by focussing on the difference between newer and more established pollsters. He finds that polls from more established pollsters have tended to favour the Conservatives over recent months, while measuring somewhat lower shares for UKIP.

The debate around the relative merits of online and telephone polling has intensified in recent weeks, as the apparent closeness of next month’s election has brought the divergence into focus. With the two largest parties so close in the polls, a relatively small disparity between polling modes has created a situation where Labour leads among most online pollsters, while the Conservatives lead in most phone polls. This is contrary to the received wisdom that the Conservatives should do better in online surveys than in phone polls, the logic being that the “interviewer effect” in phone polls makes Conservative supporters more likely to give a “shy” response.

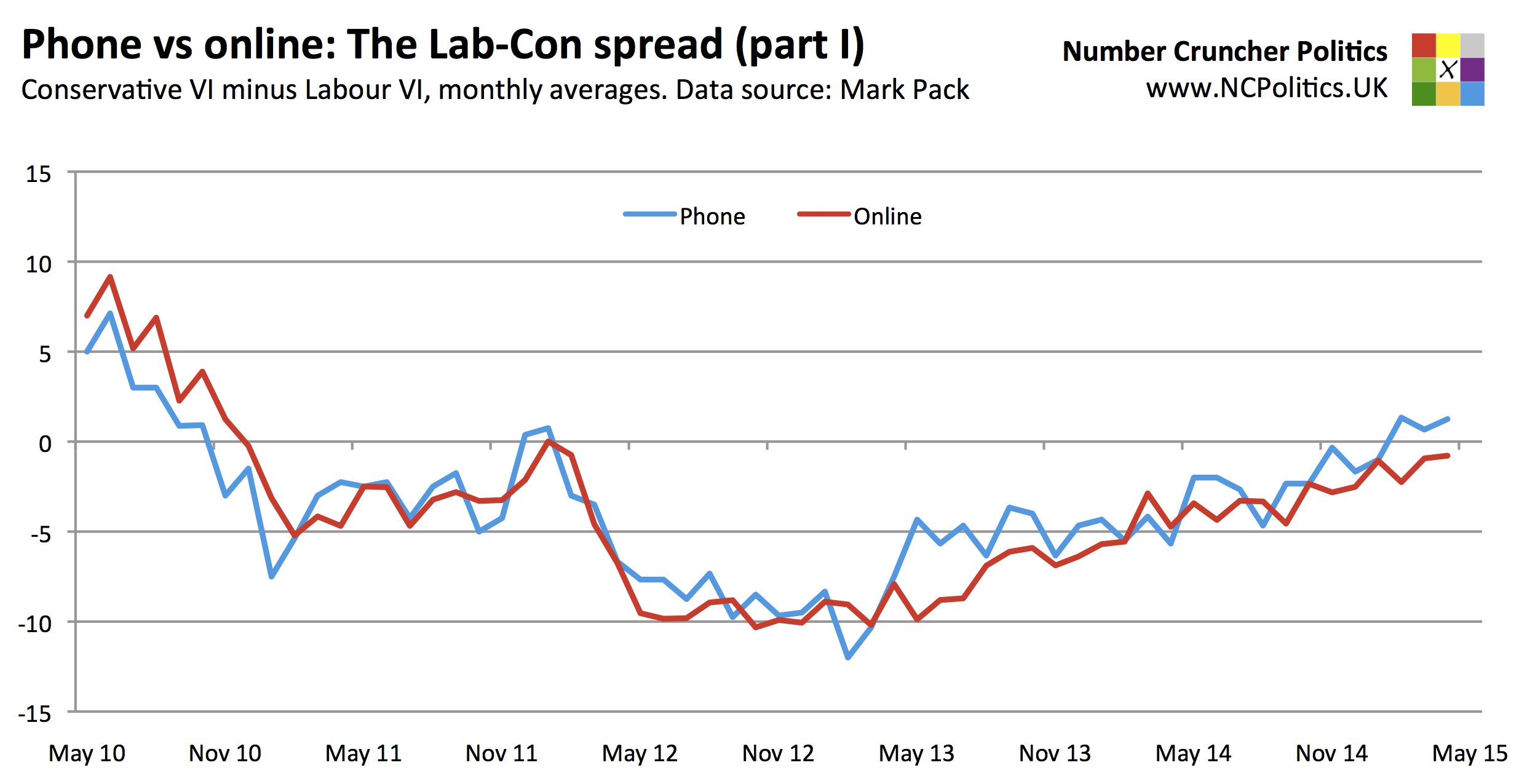

This divergence wasn’t present at the last election – Pickup et al. found no statistically significant difference in accuracy between online and phone polls in 2010. The following chart shows that the pattern has been reasonably consistent since early 2011. All pollsters have been equally weighted (with a monthly average taken for those that poll multiple times a month). The source for the historic polling in this piece is Mark Pack’s opinion poll database.
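The averaging rule described above (one monthly figure per pollster, then equal weight across pollsters) can be sketched as follows. This is a minimal illustration, not the author's actual code: the input format, the function name and the poll figures are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def equal_weight_monthly_average(polls):
    """Average one party's share across pollsters, giving each pollster equal weight.

    polls: iterable of (pollster, month, share) tuples (hypothetical input format).
    Returns {month: average share}.
    """
    # Step 1: collapse each pollster's polls within a month to a single mean,
    # so a pollster publishing weekly counts no more than one publishing monthly.
    by_pollster_month = defaultdict(list)
    for pollster, month, share in polls:
        by_pollster_month[(pollster, month)].append(share)

    # Step 2: take an unweighted average of those per-pollster means.
    by_month = defaultdict(list)
    for (_, month), shares in by_pollster_month.items():
        by_month[month].append(mean(shares))

    return {month: mean(values) for month, values in by_month.items()}


# Hypothetical figures: pollster A publishes three polls in a month, B only one.
polls = [("A", "2015-03", 34), ("A", "2015-03", 36),
         ("A", "2015-03", 35), ("B", "2015-03", 31)]
print(equal_weight_monthly_average(polls))
```

Here pollster A's three polls collapse to 35 before being averaged with B's 31, giving 33. A simple average of all four polls would give 34, letting the more prolific pollster pull the figure towards its own house effect.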

Turning to the reasons for divergence during the current parliament, one lay theory that can probably be discarded immediately is that the demographics of landlines create a bias towards the Conservatives. While it may be true that older people are both more likely than the wider electorate to have and to answer landlines, and more likely to vote Conservative, modern polls are always demographically weighted, so there is no reason to think that this would be a factor.
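Why demographic weighting neutralises an age skew in the sample can be shown with a minimal post-stratification sketch. Everything in it is hypothetical: the two age cells, the sample counts and the population shares are invented for illustration, and real pollsters weight on several variables at once, often by raking rather than a single set of cells.

```python
def cell_weights(sample_counts, population_shares):
    """Post-stratification weight for each cell: population share / sample share."""
    n = sum(sample_counts.values())
    return {cell: population_shares[cell] / (count / n)
            for cell, count in sample_counts.items()}


def weighted_share(respondents, weights):
    """Weighted proportion of respondents backing a party.

    respondents: list of (cell, backs_party) pairs.
    """
    total = sum(weights[cell] for cell, _ in respondents)
    backing = sum(weights[cell] for cell, backs in respondents if backs)
    return backing / total


# Hypothetical phone sample that over-represents older voters:
# 60 of 100 respondents are aged 55+, against 40% of the (assumed) electorate.
respondents = ([("55+", True)] * 30 + [("55+", False)] * 30
               + [("18-54", True)] * 10 + [("18-54", False)] * 30)
sample_counts = {"55+": 60, "18-54": 40}
population_shares = {"55+": 0.40, "18-54": 0.60}

weights = cell_weights(sample_counts, population_shares)
raw = sum(backs for _, backs in respondents) / len(respondents)
adjusted = weighted_share(respondents, weights)
print(f"unweighted: {raw:.0%}, weighted: {adjusted:.0%}")
```

The unweighted sample gives the party 40 percent; after weighting it gives 35 percent, exactly what the same age-specific support levels (50 and 25 percent) imply for the assumed population. An over-supply of older respondents therefore no longer pulls the estimate towards their preferences.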

Various alternative theories have been put forward (see, for example, this discussion on UK Polling Report). One is a variation on the interviewer effect: that some shy UKIP supporters who voted Conservative in 2010 are either telling phone pollsters they intend to vote Conservative, or are being reallocated back to the Conservatives by the “spiral of silence” adjustment, artificially inflating the Conservative vote share. This is plausible, but doesn’t seem to be supported by the data. The bias appears to have existed for about a year before the UKIP surge began (in spring 2012) and didn’t change much for a year or so thereafter. In that two-year period, UKIP support rose from around 4 percent to around 13 percent without any great effect on the phone-online spread. However, the spread did react noticeably when the Conservatives began to regain ground relative to Labour in spring 2013.
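The reallocation channel in this theory can be sketched mechanically. The sketch assumes a simple rule in which a fixed fraction (one half here) of respondents who say “don’t know” are added back to the party they voted for in 2010. The fraction, the function name and all figures are illustrative assumptions; pollsters’ actual spiral of silence rules differ in detail.

```python
def reallocate_dont_knows(stated, dont_knows_by_past_vote, fraction=0.5):
    """Reallocate a fraction of don't-knows to the party they voted for last time.

    stated: {party: respondents giving that party as their vote intention}
    dont_knows_by_past_vote: {party: don't-knows who voted for that party in 2010}
    fraction: share of those don't-knows reallocated (an illustrative assumption).
    Returns {party: percentage share} after the adjustment.
    """
    adjusted = dict(stated)  # copy so the caller's figures are left untouched
    for party, count in dont_knows_by_past_vote.items():
        adjusted[party] = adjusted.get(party, 0) + fraction * count
    total = sum(adjusted.values())
    return {party: 100 * votes / total for party, votes in adjusted.items()}


# Hypothetical sample of 1,000 respondents with a stated vote intention, plus
# don't-knows who voted Conservative (60) or Labour (20) in 2010.
stated = {"Con": 330, "Lab": 340, "UKIP": 130, "Other": 200}
dont_knows = {"Con": 60, "Lab": 20}

shares = reallocate_dont_knows(stated, dont_knows)
for party, share in shares.items():
    print(f"{party}: {share:.1f}%")
```

In this made-up example the adjustment turns a one-point Labour lead (34 to 33) into a Conservative lead of about one point (34.6 to 33.7). If some of the 2010 Conservatives answering “don’t know” were in fact shy UKIP supporters, this is the mechanism by which the theory says the Conservative share would be inflated.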

It’s also notable, despite the limited data, that the by-election polls (all of which are conducted by phone) that have underestimated UKIP’s vote share have tended to be in more “Labour” areas (Barnsley, Wythenshawe & Sale East and Heywood & Middleton), whereas in contests with the Conservatives (Newark, Clacton and Rochester & Strood) the polls have relatively accurately measured the UKIP vote share, or even modestly overestimated it.

The other theory concerns the representativeness or otherwise of online panels. Rather than being random probability samples, online polls are statistical models based on non-random samples, which attempt to produce population estimates by adjusting for known biases. The problem arises where there are biases that are unknown (or difficult to quantify). If, for instance, members of an online panel happen to be more politically engaged than the average voter, or, more seriously, if party activists deliberately infiltrate a panel in an attempt to manipulate its results, then, ceteris paribus, a systematic bias arises. In a recent example, one online pollster, which shall remain unidentified, reported that 56 percent of its respondents intended to watch the recent BBC leaders’ debate, compared with actual viewing figures equivalent to slightly less than 10 percent of the electorate.

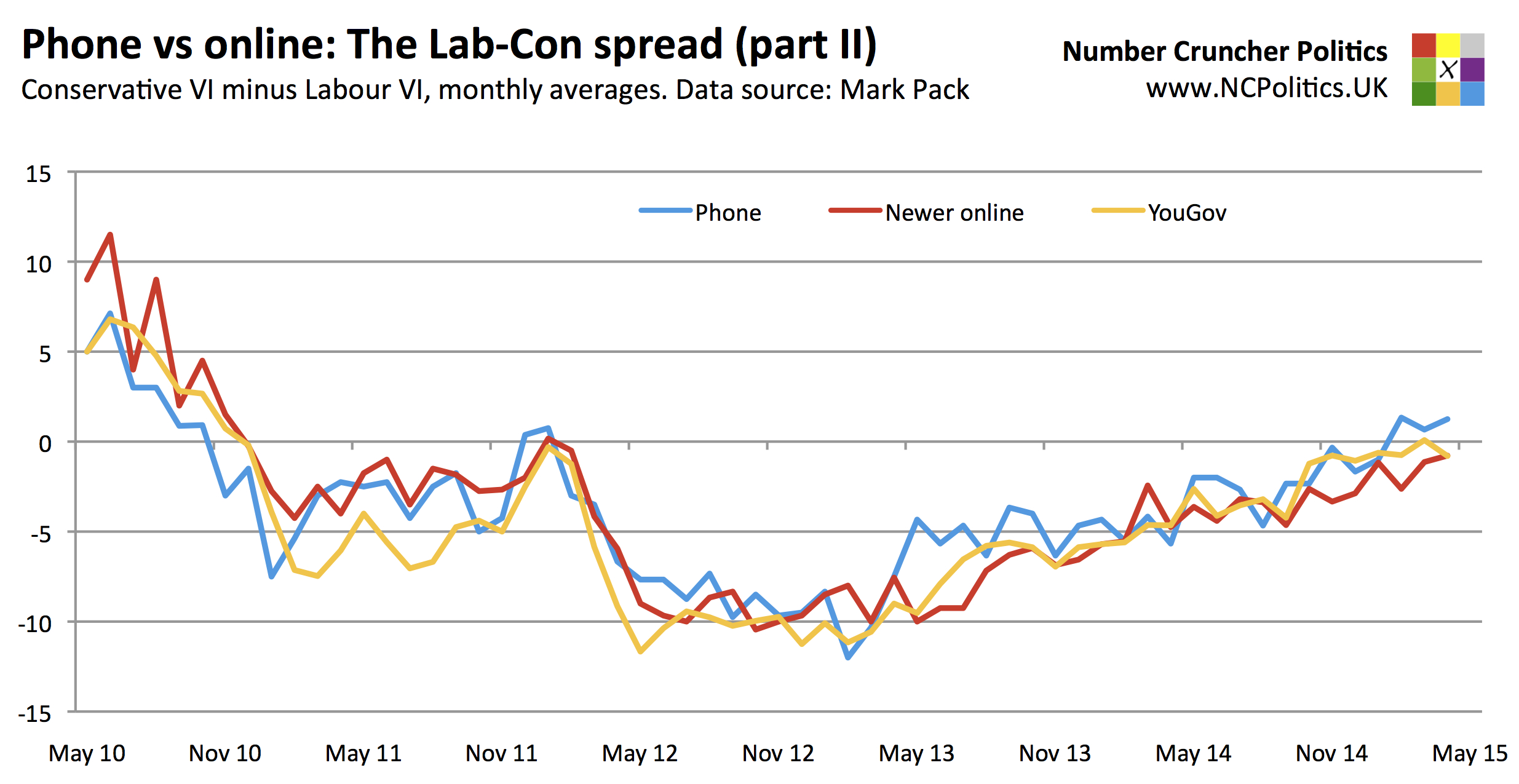

Pollsters can also be segmented by their longevity. The data does seem to show that the more established pollsters are showing different patterns to the newer ones. In the next chart, any pollster/mode combination that’s polled at least the last two general elections is classified as “established” [1] (which in practice means phone pollsters plus YouGov) and the others, including online polls by established pollsters, as “new” [2].

Splitting YouGov out from the newer online polls shows that the phone-online difference is, in part, really a difference between new and established pollsters. YouGov, the one established online pollster, is in some cases more in line with phone polls than with other online polls, though in the chart of the Labour-Conservative spread it seems to behave like a mixture of the two. But in a scenario where relatively few votes are switching directly between the two largest parties, the phone-online difference in the Labour-Conservative spread is essentially driven by the net of the phone-online differences for each of the smaller parties.

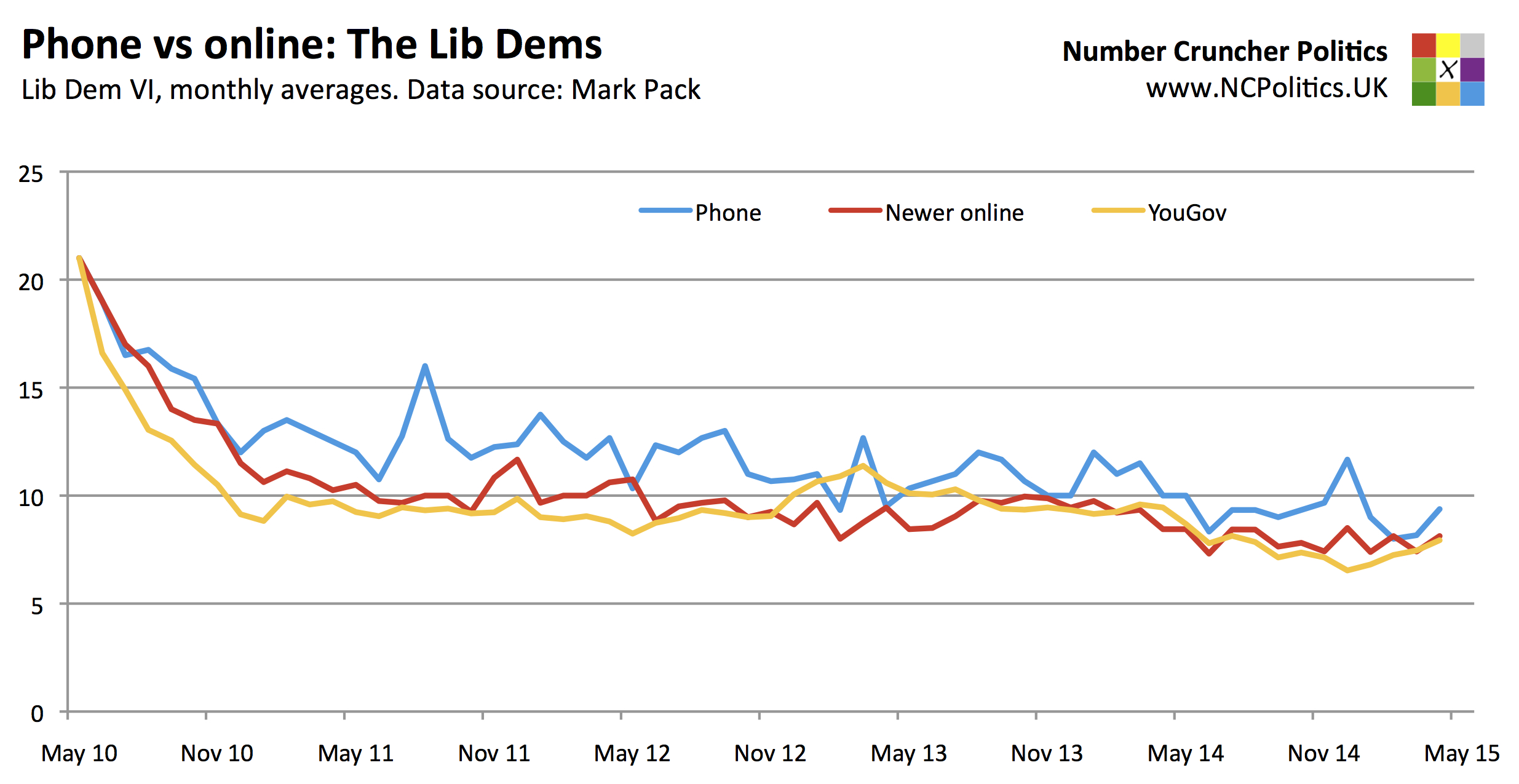

Starting with the Liberal Democrats, phone polls have consistently put the level of their support higher than both YouGov and the newer online polls. This is due to methodology – a disproportionate number of 2010 Lib Dem voters were undecided for much of this parliament. Phone polls generally make some allowance for these voters, whereas online polls tend to exclude them. As these “Don’t knows” have made up their minds, the gap in measured Lib Dem support between the two modes has narrowed considerably.

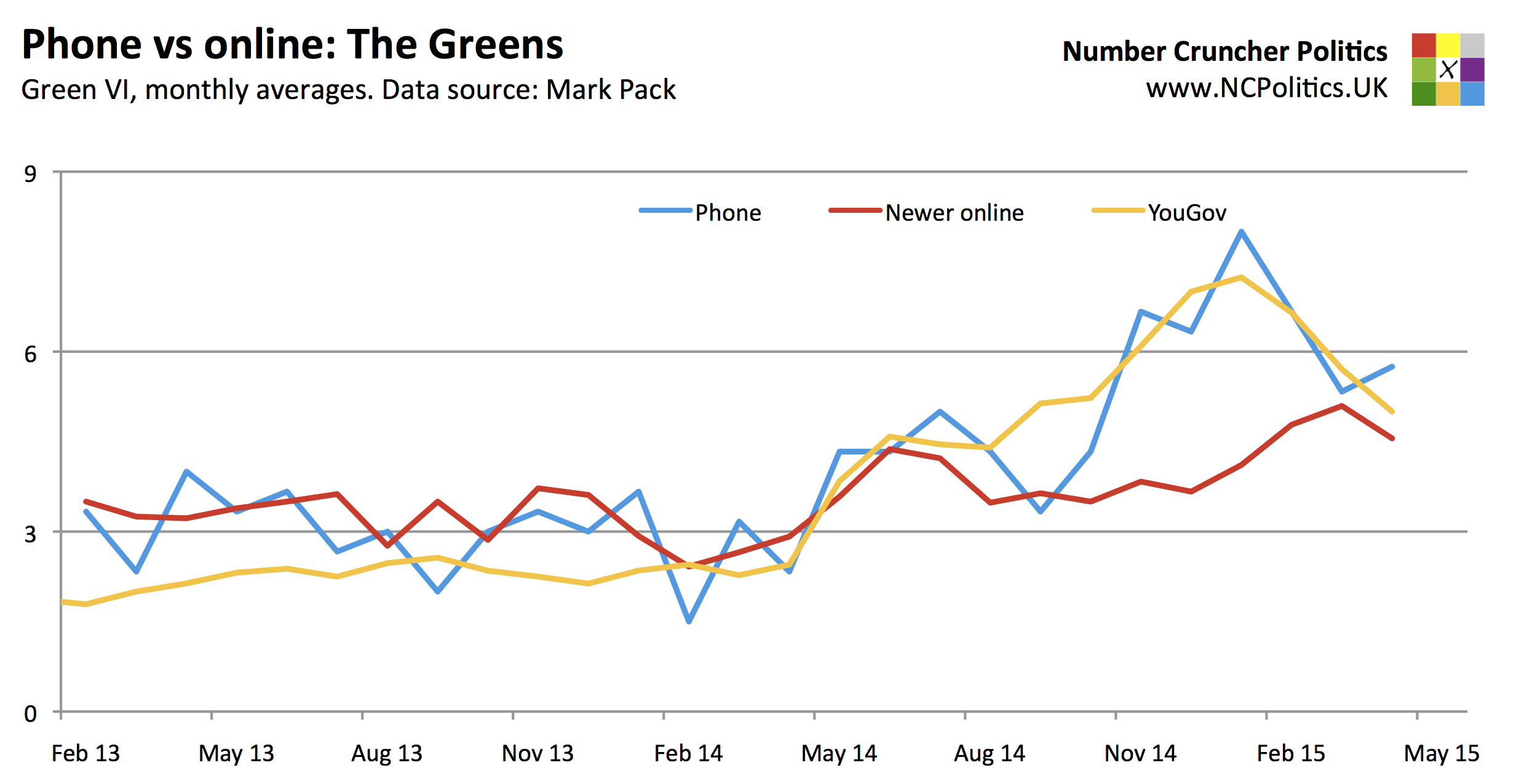

With the combined Green parties, however, the picture is very different. The Greens are perhaps more difficult to poll than any other party, because their support has been up to eight times what it was at the 2010 election, making past vote weighting an extremely blunt tool. The Green surge that started in autumn 2014 was never really reflected in the newer online polls, whereas both YouGov and the phone polls showed Green support nearly doubling, tracking one another very closely indeed.

Probably the most pronounced difference of all has been in the level of UKIP support, with a fairly consistent 4-5 point spread between the phone pollsters and the newer online polls (which, incidentally, was exactly the margin by which the latter overestimated UKIP’s vote share at the 2014 European Elections). YouGov has typically tracked the phone pollsters much more closely, although there are signs of divergence when UKIP support is lower, including in recent months.

There has also been an ongoing debate over whether to include UKIP in the main prompt, and at the time of writing polling firms remain divided on the issue. However, pollsters in both modes that have introduced UKIP prompting or run parallel tests have found the impact of the change to be insignificant.

Panel composition is crucial too. Given the additional challenges that pollsters face in the current environment, achieving as representative a sample as possible is more important than ever. As for why panels might differ, YouGov’s panel seems quite different from the others: it is very large, at around 400,000 UK panelists, and recruitment to it is under YouGov’s complete control. In common with some other online panels, much of the recruitment is via targeted advertising. This makes it less likely that the panel becomes contaminated by activists, and where they do enter the panel, its size dilutes their impact.

It’s worth remembering too that there are substantial differences between individual pollsters (house effects) in addition to these mode effects. Ipsos MORI is the last remaining phone pollster to use what were once called “unadjusted” figures (without political weighting or the spiral of silence adjustment), which explains its recent tendency to show Labour leads. By contrast, Opinium has tended to show the best results for the Conservatives of any online pollster in recent weeks, which again may be related to its methodology.

The obvious question is which mode provides the most accurate snapshot of public opinion. One shouldn’t simply assume that more established pollsters are invariably “right” and the others “wrong”. In polling, as in any industry, new entrants can bring fresh thinking and innovation. ICM was a relatively new player when it invented the spiral of silence adjustment. But to the extent that a pollster’s track record is relevant, the data does suggest that the more seasoned polls have looked better for the Conservatives and worse for Labour in recent months. Whether the polls converge over the next fortnight, or one set turns out to be substantially more accurate than the other, remains to be seen.

Note: Figures accurate as of April 22nd.

[1] ICM, Ipsos MORI, Populus (phone)/Lord Ashcroft, ComRes (phone) and YouGov

[2] Opinium, TNS-BMRB, Populus (online), ComRes (online), Survation and Panelbase

Matt Singh runs Number Cruncher Politics, a non-partisan psephology and polling blog. He tweets at @MattSingh_ and Number Cruncher Politics can be found at @NCPoliticsUK.
