Criticism continues to mount against high impact factor journals with a new study suggesting a preference for publishing front-page, “sexy” science has been at the expense of methodological rigour. Dorothy Bishop confirms these findings in her assessment of a recent paper published on dyslexia and fears that if the primary goal of some journals is media coverage, science will suffer. More attention should be placed on whether a study has adopted an appropriate methodology.

Here’s a paradox: Most scientists would give their eye teeth to get a paper in a high impact journal, such as Nature, Science, or Proceedings of the National Academy of Sciences. Yet these journals have had a bad press lately, with claims that the papers they publish are more likely to be retracted than papers in journals with more moderate impact factors. It’s been suggested that this is because the high impact journals treat newsworthiness as an important criterion for accepting a paper. Newsworthiness is high when a finding is both of general interest and surprising, but surprising findings have a nasty habit of being wrong.

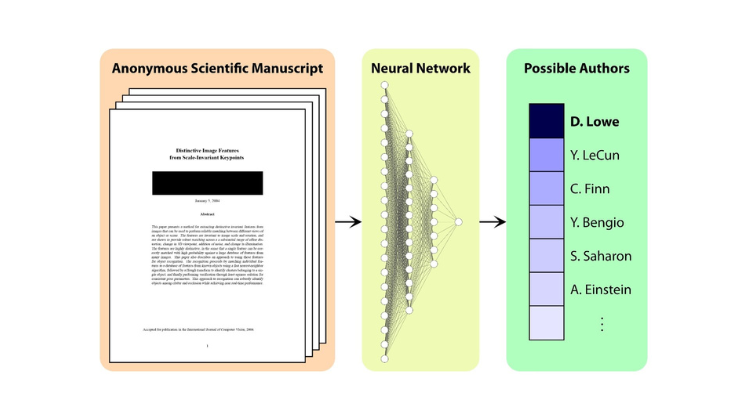

A new slant on this topic was provided recently by a paper by Tressoldi et al (2013), who compared the statistical standards of papers in high impact journals with those of three respectable but lower-impact journals. It’s often assumed that high impact journals have a very high rejection rate because they adopt particularly rigorous standards, but this appears not to be the case. Tressoldi et al focused specifically on whether papers reported effect sizes, confidence intervals, power analysis or model-fitting. Medical journals fared much better than the others, but Science and Nature did poorly on these criteria. Certainly my own experience squares with the conclusions of Tressoldi et al (2013), as I described in the course of discussion about an earlier blogpost.

Last week a paper appeared in Current Biology (impact factor = 9.65) with the confident title: “Action video games make dyslexic children read better.” It’s a classic example of a paper that is on the one hand highly newsworthy, but on the other, methodologically weak. I’m not usually a betting person, but I’d be prepared to put money on the main effect failing to replicate if the study were repeated with improved methodology. In saying this, I’m not suggesting that the authors are in any way dishonest. I have no doubt that they got the results they reported and that they genuinely believe they have discovered an important intervention for dyslexia. Furthermore, I’d be absolutely delighted to be proved wrong: There could be no better news for children with dyslexia than to find that they can overcome their difficulties by playing enjoyable computer games rather than slogging away with books. But there are good reasons to believe this is unlikely to be the case.

An interesting way to evaluate any study is to read just the Introduction and Methods, without looking at Results and Discussion. This allows you to judge whether the authors have identified an interesting question and adopted an appropriate methodology to evaluate it, without being swayed by the sexiness of the results. For the Current Biology paper, it’s not so easy to do this, because the Methods section has to be downloaded separately as Supplementary Material. (This in itself speaks volumes about the attitude of Current Biology editors to the papers they publish: Methods are seen as much less important than Results.) On the basis of just Introduction and Methods, we can ask whether the paper would be publishable in a reputable journal regardless of the outcome of the study.

On the basis of that criterion, I would argue that the Current Biology paper is problematic, purely on the basis of sample size. There were 10 Italian children aged 7 to 13 years in each of two groups: one group played ‘action’ computer games and the other was a control group playing non-action games (all games from Wii’s Rayman Raving Rabbids – see here for examples). Children were trained for 9 sessions of 80 minutes per day over two weeks. Unfortunately, the study was seriously underpowered. In plain language, with a sample this small, even if there is a big effect of intervention, it would be hard to detect it. Most interventions for dyslexia have small-to-moderate effects, i.e. they improve performance in the treated group by .2 to .5 standard deviations. With 10 children per group, the power is less than .2, i.e. there’s a less than one in five chance of detecting a true effect of this magnitude. In clinical trials, it is generally recommended that the sample size be set to achieve power of around .8. This is only possible with a total sample of 20 children if the true effect of intervention is enormous – i.e. around 1.2 SD, meaning there would be little overlap between the two groups’ reading scores after intervention. Before doing this study there would have been no reason to anticipate such a massive effect of this intervention, and so use of only 10 participants per group was inadequate. Indeed, in the context of clinical trials, such a study would be rejected by many ethics committees (IRBs) because it would be deemed unethical to recruit participants for a study which had such a small chance of detecting a true effect.
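For readers who want to check power figures of this kind, here is a minimal simulation sketch. It is not the analysis used in the paper or in this post. It assumes normally distributed scores with equal variance in both groups and a two-sided independent-samples t-test at the .05 level (the post does not say which test its power figures assume). The function name `simulated_power` and the simulation settings are illustrative choices.

```python
import random

N_PER_GROUP = 10   # children per group, as in the study
T_CRIT = 2.101     # two-sided 5% critical value of Student's t with 18 df
                   # (only valid for 10 per group, i.e. 18 degrees of freedom)


def mean_and_variance(xs):
    """Return the sample mean and the unbiased sample variance."""
    m = sum(xs) / len(xs)
    v = sum((x - m) ** 2 for x in xs) / (len(xs) - 1)
    return m, v


def simulated_power(effect_size, n_sims=5000, seed=1):
    """Estimate the chance that a t-test detects a true effect of the given size.

    effect_size is the true group difference in standard deviation units.
    """
    rng = random.Random(seed)
    significant = 0
    for _ in range(n_sims):
        control = [rng.gauss(0.0, 1.0) for _ in range(N_PER_GROUP)]
        treated = [rng.gauss(effect_size, 1.0) for _ in range(N_PER_GROUP)]
        m_c, v_c = mean_and_variance(control)
        m_t, v_t = mean_and_variance(treated)
        pooled_var = (v_c + v_t) / 2            # equal group sizes
        std_err = (2 * pooled_var / N_PER_GROUP) ** 0.5
        t_stat = (m_t - m_c) / std_err
        if abs(t_stat) > T_CRIT:
            significant += 1
    return significant / n_sims


if __name__ == "__main__":
    for d in (0.2, 0.5, 1.2):
        print(f"true effect {d} SD: estimated power {simulated_power(d):.2f}")
```

Under these assumptions, the estimates for true effects of .2 and .5 SD should come out at or below roughly .2, in line with the figure quoted above. The estimate for 1.2 SD is far higher, and its exact value depends on whether a one-sided or two-sided test is assumed.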

But, I hear you saying, this study did find a significant effect of intervention, despite being underpowered. So isn’t that all the more convincing? Sadly, the answer is no. As Christley (2010) has demonstrated, positive findings in underpowered studies are particularly likely to be false positives when they are surprising – i.e., when we have no good reason to suppose that there will be a true effect of intervention. This seems particularly pertinent in the case of the Current Biology study – if playing action computer games really does massively enhance children’s reading, we might have expected to see a dramatic improvement in reading levels in the general population in the years since such games became widely available.
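The logic behind this point can be illustrated with a small calculation. The sketch below applies Bayes’ rule in its standard textbook form. It is not necessarily the calculation Christley used, and the prior probabilities are hypothetical values chosen only for illustration. The function name `positive_predictive_value` is likewise an illustrative choice.

```python
def positive_predictive_value(power, prior, alpha=0.05):
    """Probability that a statistically significant result reflects a true effect.

    power: chance of a significant result when the effect is real
    prior: probability, before the study, that the effect is real (hypothetical)
    alpha: chance of a significant result when there is no effect
    """
    true_positives = power * prior
    false_positives = alpha * (1.0 - prior)
    return true_positives / (true_positives + false_positives)


if __name__ == "__main__":
    # Compare a plausible hypothesis (prior .5) with a surprising one (prior .1),
    # each tested with adequate power (.8) and with low power (.2).
    for prior in (0.5, 0.1):
        for power in (0.8, 0.2):
            ppv = positive_predictive_value(power, prior)
            print(f"prior {prior}, power {power}: "
                  f"P(effect is real | significant) = {ppv:.2f}")
```

With these illustrative numbers, a significant result from a low-powered test of a surprising hypothesis is more likely to be a false positive than a true one, whereas the same result from a well-powered test of a plausible hypothesis is very likely to be real.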

The small sample size is not the only problem with the Current Biology study. There are other ways in which it departs from the usual methodological requirements of a clinical trial: it is not clear how the assignment of children to treatments was made or whether assessment was blind to treatment status, no data were provided on drop-outs, on some measures there were substantial differences in the variances of the two groups, no adjustment appears to have been made for the non-normality of some outcome measures, and a follow-up analysis was confined to six children in the intervention group. Finally, neither group showed significant improvement in reading accuracy, where scores remained 2 to 3 SD below the population mean (Tables S1 and S3): the group differences were seen only for measures of reading speed.

Will any damage be done? Probably not much – some false hopes may be raised, but the stakes are not nearly as high as they are for medical trials, where serious harm or even death can result from wrong results. Quite apart from the implications for families of children with reading problems, however, there is another issue here: the publication policies of high-impact journals. These journals wield immense power. It is not overstating the case to say that a person’s career may depend on having a publication in a journal like Current Biology (see this account – published, as it happens, in Current Biology!). But, as the dyslexia example illustrates, a home in a high-impact journal is no guarantee of methodological quality. Perhaps this should not surprise us: I looked at the published criteria for papers on the websites of Nature, Science, PNAS and Current Biology. None of them mentioned the need for strong methodology or replicability; all of them emphasised the “importance” of the findings.

Methods are not a boring detail to be consigned to a supplement: they are crucial in evaluating research. My fear is that the primary goal of some journals is media coverage, and that science is consequently being reduced to journalism and is suffering for it.

This blog was originally published on BishopBlog and can be found here along with further discussion of interest in the comments section. If you would like to comment on this article, please do so on the original post.

Note: This article gives the views of the author, and not the position of the Impact of Social Science blog, nor of the London School of Economics.

About the author

Dorothy Bishop is Professor of Developmental Neuropsychology and a Wellcome Principal Research Fellow at the Department of Experimental Psychology in Oxford and Adjunct Professor at The University of Western Australia, Perth. The primary aim of her research is to increase understanding of why some children have specific language impairment (SLI). Dorothy blogs at BishopBlog and is on Twitter @deevybee.
