Last week there were a number of news reports about the harmful effects of social media on the mental health of teens and young people. Responding to this, we are publishing two posts this week that address the topic. First, this post by Rose Bray details the findings of the NSPCC and O2’s Net Aware research.

Investigating the nature and amount of inappropriate content that young people encounter online, Net Aware consulted young people and parents to learn their views about apps’ safety features and risks. With children and parents expressing a desire for industry to improve children’s safety online, Rose outlines a set of standards to implement in order to achieve this. Rose Bray is a Project Manager in the NSPCC’s Child Safety Online Team. Most recently, she has been project managing Net Aware, the NSPCC and O2’s guide for parents to the most popular apps, sites and games that young people use. [Header image credit: C. Rogers_CC BY 2.0]

The NSPCC and O2’s latest Net Aware research indicates that young people are coming across worrying levels of inappropriate content in the online spaces they are using, and they are calling on industry to do more to protect them. This research comes at a time of increasing media attention on the impact of social media on young people’s mental health. The NSPCC thinks it’s time that young people’s voices and experiences are heard. We believe that a coherent set of minimum standards, enforced by an independent regulatory body, is essential to ensure that young people are more consistently and robustly safeguarded online.

Net Aware

Net Aware is a guide, created by the NSPCC and O2, to 39 of the most popular sites, apps and games that young people use. The 2017 Net Aware site has been developed in consultation with 1,696 young people aged between 11 and 18 from across the UK. We also asked 674 parents to share their thoughts about the different sites’ and apps’ safety features, sign-up processes and risks. These reviews can help parents to decide whether a site is right for their child, whether it is age-appropriate and what risks children might encounter there, so they can make decisions for their family.

What we found

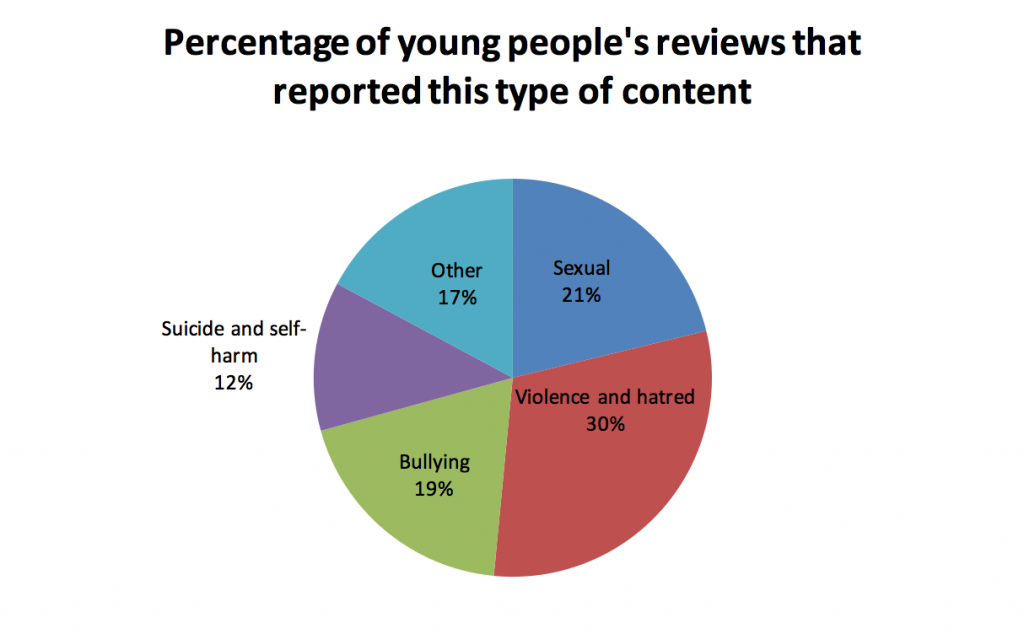

Far too many of the children and young people we consulted were coming across inappropriate content on the sites, apps and games that they use. One in three reported seeing violence and hatred on the sites they reviewed, and one in five reported sexual content and bullying. One girl, 13, reviewing Instagram, said, “it is risky because you can come across images that might upset or worry you and it is possible you might get bullied or called names by people who you thought were your friends.”

The graph below shows the percentage of young people’s reviews which reported seeing each type of inappropriate content:

While the levels of inappropriate content in general are concerning, the prevalence of violent and hateful content is particularly noteworthy. The content that young people referred to within this category spanned a range of themes including encouraging violence and showing racism, homophobia, sexism and animal abuse.

The types of violent and hateful content that young people reported varied depending on the type of site they were reviewing. Young people reviewing games typically referred to the scenes within the game or the behaviour of other players. For example, one boy, 13, reviewing Call of Duty: Black Ops Zombies, said, “it contains blood and gruesome images that could cause nightmares.” Another boy, 13, reviewing Minecraft: Pocket Edition, said that the things he dislikes about the game are “people destroying my things or stealing my swords or stuff.”

In contrast, the violence and hatred reported on content-sharing sites often related to user-generated content that young people were coming across. For example, one girl, 13, reviewing Facebook, said, “I don’t like how they put up disturbing videos about people fighting, animals being hurt, racism, sexism and stuff like this.”

While the risks in young people’s online experiences are clear, so too are the reasons that they continue to explore online spaces. Young people told us that the main things they enjoy about the sites they use are:

- Having fun and being entertained

- Communicating with friends

- Self-expression and creativity

One boy, 18, reviewing IMVU, said “I like getting to be myself. Picking how I look, and how I present myself to people. I enjoy talking to people that don’t know me.”

Evidently, despite the risks, the internet remains a place that offers children great potential for play, learning and exploration.

Things need to change

Young people must be protected from inappropriate content so they are free to enjoy the amazing opportunities that the internet can offer them. The young people we spoke to in our Net Aware consultation are calling on industry to make this happen; four out of five children told us that they feel social media companies aren’t doing enough to protect them from content such as pornography, self-harm, bullying and hatred.

We feel that social networking sites must take their child safeguarding duties seriously. Children and young people should be protected in the online space in the same way that they are protected in the offline world.

Important steps have been taken towards keeping children safe online, but our Net Aware survey has also shown us that children and parents want industry to do more. We believe that industry must also accept responsibility and take action to improve children’s safety online. Self-regulation has clearly failed to work and the time has come for regulation. Industry must be held to a set of minimum standards that are overseen and enforced by an independent regulatory body. These standards should cover the following three areas:

- Companies should offer accounts that are specifically designed for under 18s; these would include features such as strong privacy settings, robust community standards and tagging of adult content.

- Industry must work proactively to prevent exposure to online abuse by searching for inappropriate content and introducing technological mechanisms that flag accounts involved in suspicious activity.

- If a child is exposed to online abuse or inappropriate content, there should be clear reporting mechanisms, information offered in clear and accessible language and signposting to support services.

Children and young people are experts in their own experiences online, and they have clearly told us that industry must do more to help. For this to work, any solutions to keep children safe must be devised in partnership with young people themselves. Young people must be given the opportunity to be an integral part of developing ideas and to be at the heart of decision making, ensuring that their voices and needs are heard, valued and respected.

Remember to look out for our next post addressing mental health and young people’s experiences online in connection with the Blue Whale Game.

This post gives the views of the authors and does not represent the position of the LSE Parenting for a Digital Future blog, nor of the London School of Economics and Political Science.